---
license: mit
pipeline_tag: document-question-answering
tags:
- donut
- image-to-text
- vision
widget:
- text: "What is the invoice number?"
  src: "https://huggingface.co/spaces/impira/docquery/resolve/2359223c1837a7587402bda0f2643382a6eefeab/invoice.png"
- text: "What is the purchase amount?"
  src: "https://huggingface.co/spaces/impira/docquery/resolve/2359223c1837a7587402bda0f2643382a6eefeab/contract.jpeg"
---

# Donut (base-sized model, fine-tuned on DocVQA)

Donut model fine-tuned on DocVQA. It was introduced in the paper [OCR-free Document Understanding Transformer](https://arxiv.org/abs/2111.15664) by Geewook Kim et al. and first released in [this repository](https://github.com/clovaai/donut).

Disclaimer: The team releasing Donut did not write a model card for this model, so this model card has been written by the Hugging Face team.

## Model description

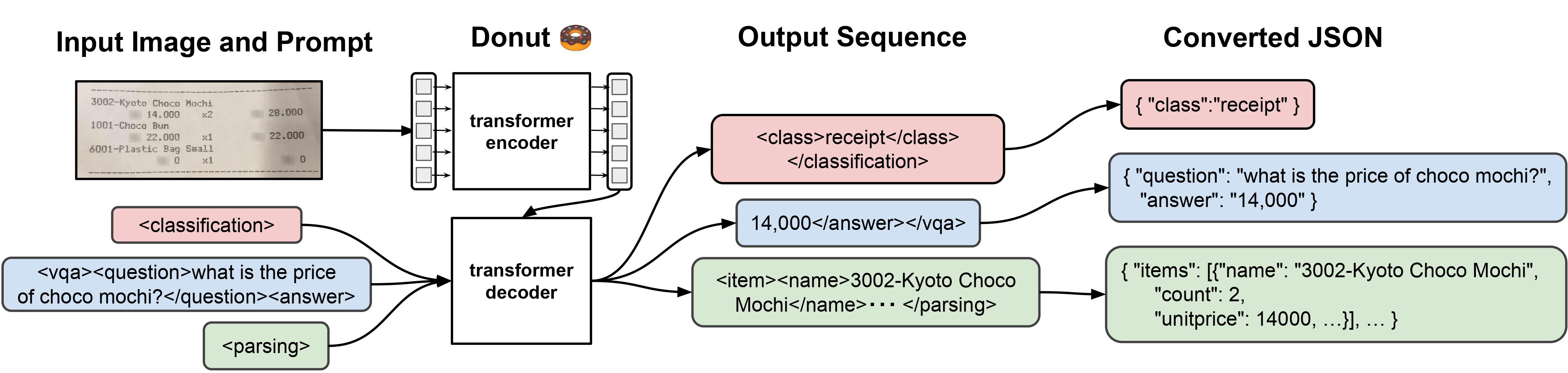

Donut consists of a vision encoder (Swin Transformer) and a text decoder (BART). Given an image, the encoder first encodes it into a tensor of embeddings of shape `(batch_size, seq_len, hidden_size)`. The decoder then autoregressively generates text, conditioned on the encoder's output.
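The encode-then-decode flow described above can be illustrated with a toy sketch in plain Python. This is not Donut's implementation: `toy_encode`, `toy_next_token`, and `greedy_decode` are hypothetical stand-ins, the "embeddings" are just per-patch mean and maximum, and the batch dimension is omitted. The point is only the control flow: the image is encoded once into a sequence of states, and the decoder then emits one token at a time, each step seeing the encoder states and the tokens generated so far.

```python
from typing import Callable, List, Sequence

BOS, EOS = "<s>", "</s>"


def toy_encode(image: Sequence[Sequence[float]], patch: int = 2) -> List[List[float]]:
    """Stand-in for the vision encoder (hypothetical, not Swin).

    Splits the image into patch x patch tiles and returns one small
    embedding per tile, i.e. a list of shape (seq_len, hidden_size=2).
    """
    states = []
    for top in range(0, len(image), patch):
        for left in range(0, len(image[0]), patch):
            pixels = [v for row in image[top:top + patch] for v in row[left:left + patch]]
            states.append([sum(pixels) / len(pixels), max(pixels)])
    return states


def toy_next_token(states: List[List[float]], tokens: List[str]) -> str:
    """Stand-in for the text decoder (hypothetical, not BART).

    Describes one encoder state per step, then emits the end token.
    """
    step = len(tokens) - 1  # tokens[0] is the start token
    if step >= len(states):
        return EOS
    return "bright" if states[step][0] > 0.5 else "dark"


def greedy_decode(
    states: List[List[float]],
    next_token: Callable[[List[List[float]], List[str]], str],
    max_length: int = 16,
) -> List[str]:
    """Autoregressive loop: each new token depends on the encoder
    states and on every token generated before it."""
    tokens = [BOS]
    while len(tokens) < max_length:
        token = next_token(states, tokens)
        tokens.append(token)
        if token == EOS:
            break
    return tokens


image = [
    [0.0, 0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0, 1.0],
]
states = toy_encode(image)  # two patches -> seq_len = 2
tokens = greedy_decode(states, toy_next_token)
```

In the real model the encoder states are learned embeddings and the decoder attends to them through cross-attention, but the loop structure is the same.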

## Intended uses & limitations

This model is fine-tuned on DocVQA, a document visual question answering dataset.

See the [documentation](https://huggingface.co/docs/transformers/main/en/model_doc/donut) for code examples.
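As a rough orientation, a minimal inference sketch along the lines of the Transformers Donut documentation might look like the following. Two things here are assumptions: the repository ID `naver-clova-ix/donut-base-finetuned-docvqa` is not stated in this card, and the helper names (`build_prompt`, `clean_sequence`, `answer_question`) are illustrative rather than part of any library. The heavy dependencies are imported inside `answer_question` so the small string helpers can be used on their own.

```python
import re

# Assumption: this repository ID is not given in the card; replace it with
# the ID of the checkpoint you are actually using.
MODEL_ID = "naver-clova-ix/donut-base-finetuned-docvqa"


def build_prompt(question: str) -> str:
    """Wrap the question in the DocVQA task prompt expected by the decoder."""
    return f"<s_docvqa><s_question>{question}</s_question><s_answer>"


def clean_sequence(sequence: str, eos_token: str, pad_token: str) -> str:
    """Drop EOS/pad tokens and the leading task start token."""
    sequence = sequence.replace(eos_token, "").replace(pad_token, "")
    return re.sub(r"<.*?>", "", sequence, count=1).strip()


def answer_question(image, question: str, model_id: str = MODEL_ID):
    """Run document VQA on a PIL image and return the parsed output."""
    # Imported lazily so the helpers above work without these packages.
    import torch
    from transformers import DonutProcessor, VisionEncoderDecoderModel

    processor = DonutProcessor.from_pretrained(model_id)
    model = VisionEncoderDecoderModel.from_pretrained(model_id)
    device = "cuda" if torch.cuda.is_available() else "cpu"
    model.to(device)

    # Encoder input: the document image as pixel values.
    pixel_values = processor(image.convert("RGB"), return_tensors="pt").pixel_values
    # Decoder input: the task prompt containing the question.
    decoder_input_ids = processor.tokenizer(
        build_prompt(question), add_special_tokens=False, return_tensors="pt"
    ).input_ids

    outputs = model.generate(
        pixel_values.to(device),
        decoder_input_ids=decoder_input_ids.to(device),
        max_length=model.decoder.config.max_position_embeddings,
        pad_token_id=processor.tokenizer.pad_token_id,
        eos_token_id=processor.tokenizer.eos_token_id,
        use_cache=True,
        bad_words_ids=[[processor.tokenizer.unk_token_id]],
        return_dict_in_generate=True,
    )

    sequence = processor.batch_decode(outputs.sequences)[0]
    tokenizer = processor.tokenizer
    cleaned = clean_sequence(sequence, tokenizer.eos_token, tokenizer.pad_token)
    return processor.token2json(cleaned)
```

To try it, open a document image with `PIL.Image.open(...)` and pass it to `answer_question` together with a question such as "What is the invoice number?". The first call downloads the checkpoint, so it needs network access and enough memory for a base-sized model.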