---
license: apache-2.0
datasets:
- BelleGroup/train_2M_CN
- BelleGroup/train_3.5M_CN
- BelleGroup/train_1M_CN
- BelleGroup/train_0.5M_CN
- BelleGroup/school_math_0.25M
language:
- zh
---

GoGPT

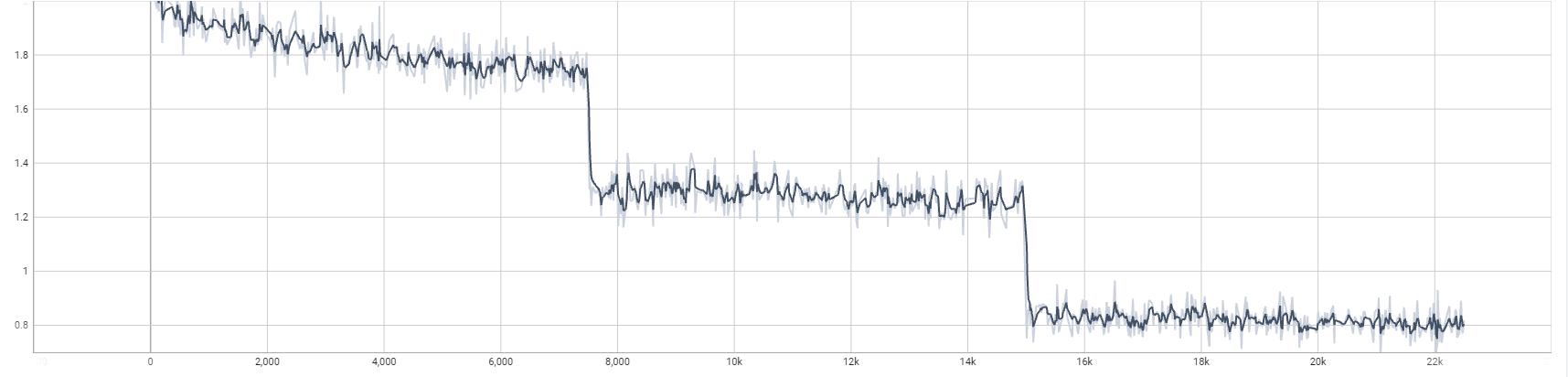

One training epoch is sufficient; the second and third epochs bring little further improvement.

- 🚀 Diverse instruction data
- 🚀 Filtered, high-quality Chinese data

| Model name | Parameters | Model link |
|---|---|---|
| gogpt-560m | 560M | 🤗golaxy/gogpt-560m |
| gogpt-3b | 3B | 🤗golaxy/gogpt-3b |

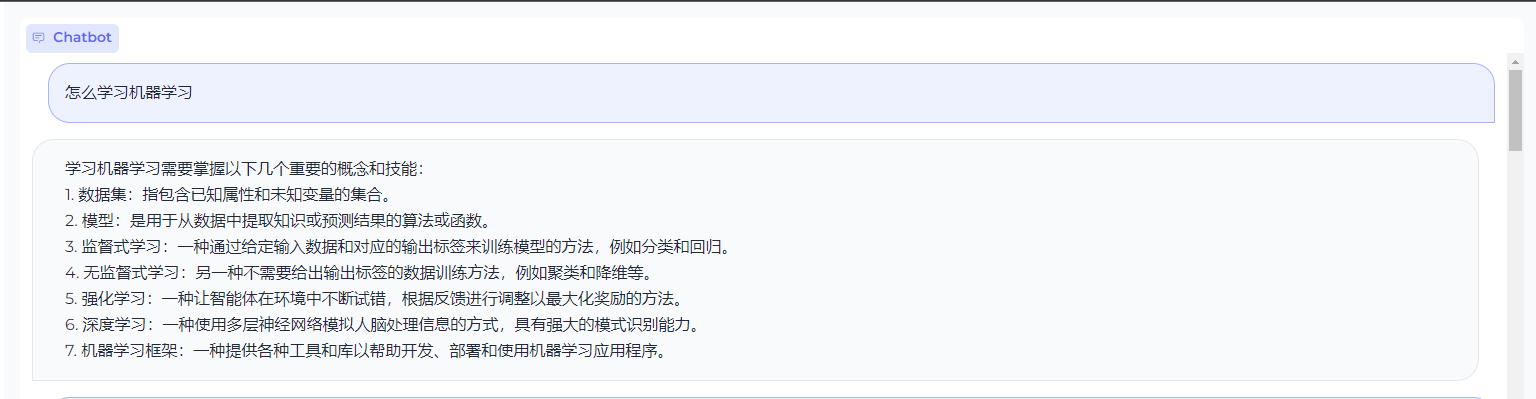

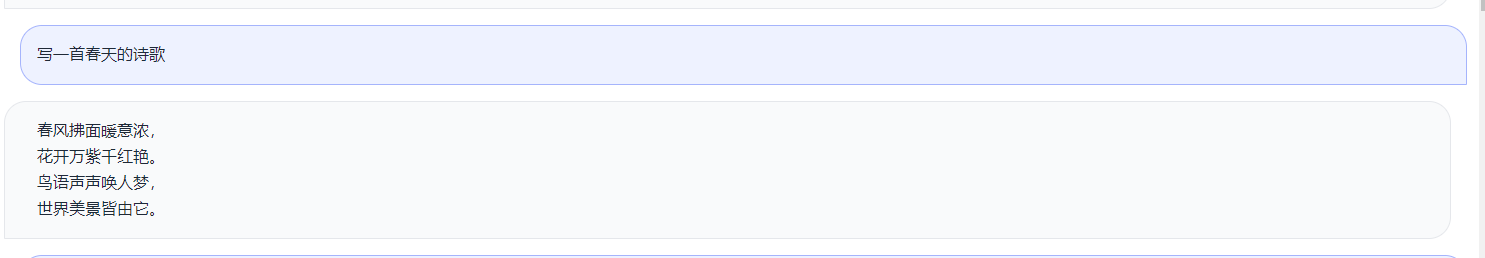

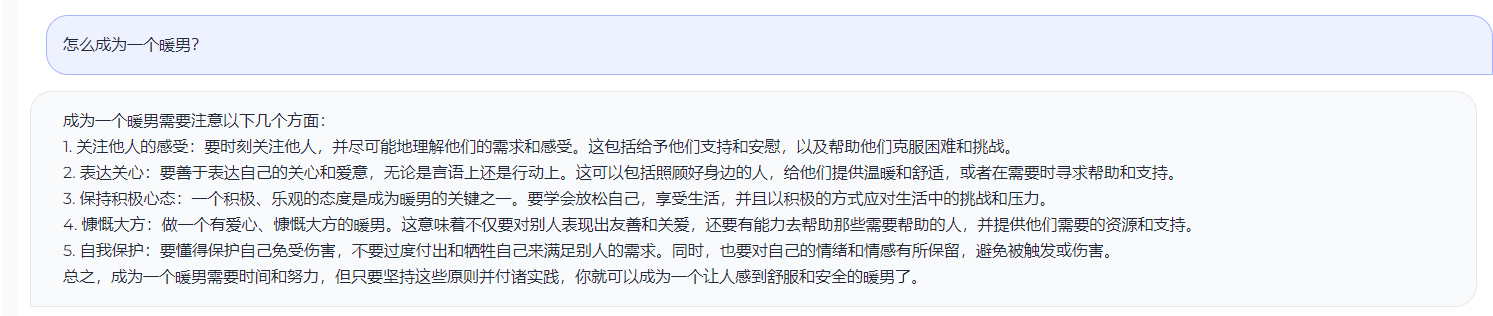

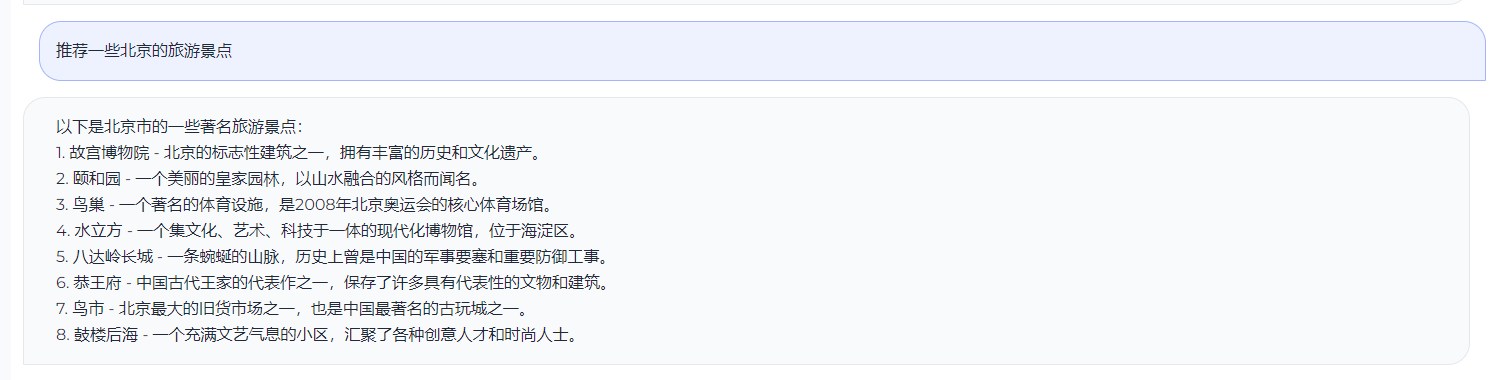

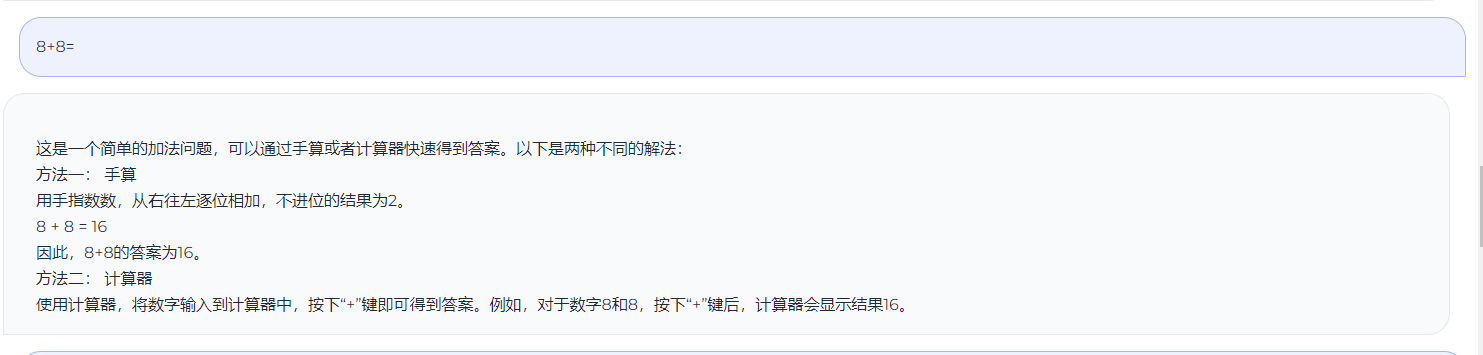

Test results

TODO

- Perform RLHF training
- Add Chinese-English parallel corpora in later training

Acknowledgements

Open LLM Leaderboard Evaluation Results

Detailed results can be found here

| Metric | Value |
|---|---|
| Avg. | 26.3 |
| ARC (25-shot) | 26.37 |
| HellaSwag (10-shot) | 31.86 |
| MMLU (5-shot) | 25.29 |
| TruthfulQA (0-shot) | 43.12 |
| Winogrande (5-shot) | 50.75 |
| GSM8K (5-shot) | 0.0 |
| DROP (3-shot) | 6.7 |
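The Avg. row can be cross-checked against the per-benchmark scores. The sketch below assumes that Avg. is the unweighted arithmetic mean of the seven benchmarks, which the listed values are consistent with; the `scores` dictionary and the helper `leaderboard_average` are illustrative names, not part of any leaderboard tooling.

```python
# Scores copied from the Open LLM Leaderboard table above.
# Assumption: "Avg." is the unweighted mean of these seven benchmarks.
scores = {
    "ARC (25-shot)": 26.37,
    "HellaSwag (10-shot)": 31.86,
    "MMLU (5-shot)": 25.29,
    "TruthfulQA (0-shot)": 43.12,
    "Winogrande (5-shot)": 50.75,
    "GSM8K (5-shot)": 0.0,
    "DROP (3-shot)": 6.7,
}


def leaderboard_average(results: dict[str, float]) -> float:
    """Return the unweighted arithmetic mean of the benchmark scores."""
    return sum(results.values()) / len(results)


avg = leaderboard_average(scores)
print(f"{avg:.2f}")
```

The scores sum to 184.09, so the mean is about 26.30, which rounds to the reported 26.3. Note that the 0.0 on GSM8K pulls the average down noticeably.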