
pretty_name: Lucie Training Dataset

license: cc-by-nc-sa-4.0

language:

- en

- fr

- de

- es

- it

- code

multilinguality:

- multilingual

task_categories:

- text-generation

- text2text-generation

task_ids:

- language-modeling

tags:

- text-generation

- conditional-text-generation

size_categories:

- n>1T

viewer: true

configs:

- config_name: default

data_files:

- path: data/v*/*/*/*/*parquet

split: train

- config_name: en

data_files:

- path: data/v*/natural/en/*/*parquet

split: train

- config_name: fr

data_files:

- path: data/v*/natural/fr/*/*parquet

split: train

- config_name: de

data_files:

- path: data/v*/natural/de/*/*parquet

split: train

- config_name: es

data_files:

- path: data/v*/natural/es/*/*parquet

split: train

- config_name: it

data_files:

- path: data/v*/natural/it/*/*parquet

split: train

- config_name: de,fr

data_files:

- path: data/v*/natural/de-fr/*/*.parquet

split: train

- config_name: es,en

data_files:

- path: data/v*/natural/es-en/*/*.parquet

split: train

- config_name: fr,en

data_files:

- path: data/v*/natural/fr-en/*/*.parquet

split: train

- config_name: it,en

data_files:

- path: data/v*/natural/it-en/*/*.parquet

split: train

- config_name: natural

data_files:

- path: data/v*/natural/*/*/*.parquet

split: train

- config_name: code

data_files:

- path: data/v*/code/*/*/*parquet

split: train

- config_name: code-assembly

data_files:

- path: data/v*/code/assembly/*/*.parquet

split: train

- config_name: code-c

data_files:

- path: data/v*/code/c/*/*.parquet

split: train

- config_name: code-c#

data_files:

- path: data/v*/code/c#/*/*.parquet

split: train

- config_name: code-c++

data_files:

- path: data/v*/code/c++/*/*.parquet

split: train

- config_name: code-clojure

data_files:

- path: data/v*/code/clojure/*/*.parquet

split: train

- config_name: code-dart

data_files:

- path: data/v*/code/dart/*/*.parquet

split: train

- config_name: code-elixir

data_files:

- path: data/v*/code/elixir/*/*.parquet

split: train

- config_name: code-erlang

data_files:

- path: data/v*/code/erlang/*/*.parquet

split: train

- config_name: code-fortran

data_files:

- path: data/v*/code/fortran/*/*.parquet

split: train

- config_name: code-go

data_files:

- path: data/v*/code/go/*/*.parquet

split: train

- config_name: code-haskell

data_files:

- path: data/v*/code/haskell/*/*.parquet

split: train

- config_name: code-java

data_files:

- path: data/v*/code/java/*/*.parquet

split: train

- config_name: code-javascript

data_files:

- path: data/v*/code/javascript/*/*.parquet

split: train

- config_name: code-julia

data_files:

- path: data/v*/code/julia/*/*.parquet

split: train

- config_name: code-kotlin

data_files:

- path: data/v*/code/kotlin/*/*.parquet

split: train

- config_name: code-lua

data_files:

- path: data/v*/code/lua/*/*.parquet

split: train

- config_name: code-mathematica

data_files:

- path: data/v*/code/mathematica/*/*.parquet

split: train

- config_name: code-matlab

data_files:

- path: data/v*/code/matlab/*/*.parquet

split: train

- config_name: code-ocaml

data_files:

- path: data/v*/code/ocaml/*/*.parquet

split: train

- config_name: code-perl

data_files:

- path: data/v*/code/perl/*/*.parquet

split: train

- config_name: code-php

data_files:

- path: data/v*/code/php/*/*.parquet

split: train

- config_name: code-python

data_files:

- path: data/v*/code/python/*/*.parquet

split: train

- config_name: code-r

data_files:

- path: data/v*/code/r/*/*.parquet

split: train

- config_name: code-racket

data_files:

- path: data/v*/code/racket/*/*.parquet

split: train

- config_name: code-ruby

data_files:

- path: data/v*/code/ruby/*/*.parquet

split: train

- config_name: code-rust

data_files:

- path: data/v*/code/rust/*/*.parquet

split: train

- config_name: code-scala

data_files:

- path: data/v*/code/scala/*/*.parquet

split: train

- config_name: code-swift

data_files:

- path: data/v*/code/swift/*/*.parquet

split: train

- config_name: code-tex

data_files:

- path: data/v*/code/tex/*/*.parquet

split: train

- config_name: code-typescript

data_files:

- path: data/v*/code/typescript/*/*.parquet

split: train

- config_name: AmendementsParlement

data_files:

- path: data/v*/natural/*/AmendementsParlement/*.parquet

split: train

- config_name: AmericanStories

data_files:

- path: data/v*/natural/*/AmericanStories/*.parquet

split: train

- config_name: Claire

data_files:

- path: data/v*/natural/*/Claire/*.parquet

split: train

- config_name: Claire-en

data_files:

- path: data/v*/natural/en/Claire/*.parquet

split: train

- config_name: Claire-fr

data_files:

- path: data/v*/natural/fr/Claire/*.parquet

split: train

- config_name: CroissantAligned

data_files:

- path: data/v*/natural/*/CroissantAligned/*.parquet

split: train

- config_name: DiscoursPublics

data_files:

- path: data/v*/natural/*/DiscoursPublics/*.parquet

split: train

- config_name: Europarl

data_files:

- path: data/v*/natural/*/Europarl/*.parquet

split: train

- config_name: Europarl-de

data_files:

- path: data/v*/natural/de/Europarl/*.parquet

split: train

- config_name: Europarl-en

data_files:

- path: data/v*/natural/en/Europarl/*.parquet

split: train

- config_name: Europarl-es

data_files:

- path: data/v*/natural/es/Europarl/*.parquet

split: train

- config_name: Europarl-fr

data_files:

- path: data/v*/natural/fr/Europarl/*.parquet

split: train

- config_name: EuroparlAligned

data_files:

- path: data/v*/natural/*/EuroparlAligned/*.parquet

split: train

- config_name: EuroparlAligned-de,fr

data_files:

- path: data/v*/natural/de-fr/EuroparlAligned/*.parquet

split: train

- config_name: EuroparlAligned-es,en

data_files:

- path: data/v*/natural/es-en/EuroparlAligned/*.parquet

split: train

- config_name: EuroparlAligned-fr,en

data_files:

- path: data/v*/natural/fr-en/EuroparlAligned/*.parquet

split: train

- config_name: EuroparlAligned-it,en

data_files:

- path: data/v*/natural/it-en/EuroparlAligned/*.parquet

split: train

- config_name: Eurovoc

data_files:

- path: data/v*/natural/*/Eurovoc/*.parquet

split: train

- config_name: Eurovoc-de

data_files:

- path: data/v*/natural/de/Eurovoc/*.parquet

split: train

- config_name: Eurovoc-en

data_files:

- path: data/v*/natural/en/Eurovoc/*.parquet

split: train

- config_name: Eurovoc-es

data_files:

- path: data/v*/natural/es/Eurovoc/*.parquet

split: train

- config_name: Eurovoc-it

data_files:

- path: data/v*/natural/it/Eurovoc/*.parquet

split: train

- config_name: FineWebEdu

data_files:

- path: data/v*/natural/*/FineWebEdu/*.parquet

split: train

- config_name: GallicaMonographies

data_files:

- path: data/v*/natural/*/GallicaMonographies/*.parquet

split: train

- config_name: GallicaPress

data_files:

- path: data/v*/natural/*/GallicaPress/*.parquet

split: train

- config_name: Gutenberg

data_files:

- path: data/v*/natural/*/Gutenberg/*.parquet

split: train

- config_name: Gutenberg-de

data_files:

- path: data/v*/natural/de/Gutenberg/*.parquet

split: train

- config_name: Gutenberg-en

data_files:

- path: data/v*/natural/en/Gutenberg/*.parquet

split: train

- config_name: Gutenberg-es

data_files:

- path: data/v*/natural/es/Gutenberg/*.parquet

split: train

- config_name: Gutenberg-fr

data_files:

- path: data/v*/natural/fr/Gutenberg/*.parquet

split: train

- config_name: Gutenberg-it

data_files:

- path: data/v*/natural/it/Gutenberg/*.parquet

split: train

- config_name: HAL

data_files:

- path: data/v*/natural/*/HAL/*.parquet

split: train

- config_name: InterventionsParlement

data_files:

- path: data/v*/natural/*/InterventionsParlement/*.parquet

split: train

- config_name: LEGI

data_files:

- path: data/v*/natural/*/LEGI/*.parquet

split: train

- config_name: MathPile

data_files:

- path: data/v*/natural/*/MathPile/*.parquet

split: train

- config_name: OpenData

data_files:

- path: data/v*/natural/*/OpenData/*.parquet

split: train

- config_name: OpenEdition

data_files:

- path: data/v*/natural/*/OpenEdition/*.parquet

split: train

- config_name: PeS2o

data_files:

- path: data/v*/natural/*/PeS2o/*.parquet

split: train

- config_name: PeS2o-s2ag

data_files:

- path: data/v*/natural/*/PeS2o/*s2ag.parquet

split: train

- config_name: PeS2o-s2orc

data_files:

- path: data/v*/natural/*/PeS2o/*s2orc.parquet

split: train

- config_name: Pile

data_files:

- path: data/v*/natural/*/Pile/*.parquet

split: train

- config_name: Pile-DM_Mathematics

data_files:

- path: data/v*/natural/*/Pile/*DM_Mathematics.parquet

split: train

- config_name: Pile-FreeLaw

data_files:

- path: data/v*/natural/*/Pile/*FreeLaw.parquet

split: train

- config_name: Pile-NIH_ExPorter

data_files:

- path: data/v*/natural/*/Pile/*NIH_ExPorter.parquet

split: train

- config_name: Pile-PhilPapers

data_files:

- path: data/v*/natural/*/Pile/*PhilPapers.parquet

split: train

- config_name: Pile-StackExchange

data_files:

- path: data/v*/natural/*/Pile/*StackExchange.parquet

split: train

- config_name: Pile-USPTO_Backgrounds

data_files:

- path: data/v*/natural/*/Pile/*USPTO_Backgrounds.parquet

split: train

- config_name: Pile-Ubuntu_IRC

data_files:

- path: data/v*/natural/*/Pile/*Ubuntu_IRC.parquet

split: train

- config_name: QuestionsEcritesParlement

data_files:

- path: data/v*/natural/*/QuestionsEcritesParlement/*.parquet

split: train

- config_name: RedPajama

data_files:

- path: data/v*/natural/*/RedPajama/*.parquet

split: train

- config_name: RedPajama-de

data_files:

- path: data/v*/natural/de/RedPajama/*.parquet

split: train

- config_name: RedPajama-es

data_files:

- path: data/v*/natural/es/RedPajama/*.parquet

split: train

- config_name: RedPajama-fr

data_files:

- path: data/v*/natural/fr/RedPajama/*.parquet

split: train

- config_name: RedPajama-it

data_files:

- path: data/v*/natural/it/RedPajama/*.parquet

split: train

- config_name: Stac

data_files:

- path: data/v*/natural/*/Stac/*.parquet

split: train

- config_name: TheStack

data_files:

- path: data/v*/code/*/TheStack/*.parquet

split: train

- config_name: Theses

data_files:

- path: data/v*/natural/*/Theses/*.parquet

split: train

- config_name: Wikipedia

data_files:

- path: data/v*/natural/*/Wikipedia/*.parquet

split: train

- config_name: Wikipedia-de

data_files:

- path: data/v*/natural/de/Wikipedia/*.parquet

split: train

- config_name: Wikipedia-en

data_files:

- path: data/v*/natural/en/Wikipedia/*.parquet

split: train

- config_name: Wikipedia-es

data_files:

- path: data/v*/natural/es/Wikipedia/*.parquet

split: train

- config_name: Wikipedia-fr

data_files:

- path: data/v*/natural/fr/Wikipedia/*.parquet

split: train

- config_name: Wikipedia-it

data_files:

- path: data/v*/natural/it/Wikipedia/*.parquet

split: train

- config_name: Wikisource

data_files:

- path: data/v*/natural/*/Wikisource/*.parquet

split: train

- config_name: Wiktionary

data_files:

- path: data/v*/natural/*/Wiktionary/*.parquet

split: train

- config_name: YouTube

data_files:

- path: data/v*/natural/*/YouTube/*.parquet

split: train

Lucie Training Dataset Card

The Lucie Training Dataset is a curated collection of text data in English, French, German, Spanish and Italian drawn from a variety of sources, including web data, video subtitles, academic papers, digital books, newspapers, and magazines, some of which were processed by Optical Character Recognition (OCR). It also contains samples of diverse programming languages.

The Lucie Training Dataset was used to pretrain Lucie-7B, a foundation LLM with strong capabilities in French and English. Code for data preparation can be found in the training repository for Lucie-7B. Due to the licenses of a few subcorpora, the Lucie Training Dataset is released under a CC BY-NC-SA 4.0 license. A subset available for commercial use will be released soon.

Table of Contents:

- Dataset Description

- Example use in Python

- Citation

- Acknowledgements

- Contact

Dataset Description

This dataset is intended to provide extensive and diverse multilingual data for training Large Language Models (LLMs). Here are some of the principal features of the corpus:

- Data mix:

  - The dataset contains more French than English data -- it is in fact one of the biggest collections of French text data that has been preprocessed for LLM training -- with the aim of minimizing anglo-centric cultural biases.

  - German, Spanish and Italian are also represented in small amounts.

  - Code is included to boost the reasoning capabilities of LLMs.

- Data filtering and deduplication:

  - The dataset has been cleaned in an effort to remove very low-quality data.

  - Duplicate data samples have been removed to some extent, following best practices.

  - Web data has been filtered to minimize potentially toxic content and personally identifying information.

- Ethics:

  - Special care has been taken to respect copyright laws and individual privacy. All newspapers, monographs, magazines and legislative documents, as well as most books, are in the public domain (which depends on the author's date of death and the country of publication). Other data are published with permissive licenses (e.g., CC BY or CC BY-SA) or, in very rare cases, CC BY-NC-SA.

  - All web data in the dataset come from sites with robots.txt files that do not forbid crawling.

Sample Metadata

In addition to the text field, which provides the content of the sample, each training sample in the corpus contains the following metadata when available:

- `language`: the language of the text sample (note that this information is taken from the original data source and may be incorrect).

  Possible values:

  - the ISO 639-1 code for a given natural language ("en", "fr", "de", "es", or "it"),

  - the name of a programming language prefixed by "code:" ("code:python", "code:c++", …), or

  - a list of ISO 639-1 codes separated by commas for data containing parallel translations ("fr,en", "de,fr", "es,en", "it,en", or one of those pairs in the opposite order if the languages appear in the opposite order in the text).

- `source`: an identifier for the source(s) of the text sample (Wikipedia, RedPajama, Gutenberg, …). All sources are described in detail below.

- `id`: an identifier that is unique among documents from the same source.

- `url` (optional): the URL of the original text sample on the web, if available.

- `title` (optional): the title of the original text sample, if available.

- `author` (optional): the author of the original text sample, if available.

  Note: The author name is given in plain text, except in the case of Gutenberg books, where it is the JSON serialized object of the author metadata.

- `date` (optional): the publication date of the original text sample, if available.

  Note: The text format of the date depends on the source.

- `quality_signals` (optional): a list of quality signals for the text sample in JSON format (which could be used for further filtering or sample weighting).

  Note: It can include indicators computed by `fasttext` and `CCNet`, statistics about occurrences of characters, words, special characters, etc.

- `extra` (optional): extra information about the text sample, in JSON format. This can include metadata about the source subset, the rights, etc.

The list of metadata available for each source is provided (without the text field) in metadata_examples.json.
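The three kinds of `language` values described above can be told apart with a few lines of Python. The following is a minimal sketch rather than code from the dataset repository; the `LanguageTag` type and the `parse_language` helper are hypothetical names introduced for illustration only.

```python
from typing import NamedTuple, Tuple

# ISO 639-1 codes of the natural languages listed in this card.
NATURAL_LANGUAGES = {"en", "fr", "de", "es", "it"}


class LanguageTag(NamedTuple):
    """Hypothetical container for a parsed `language` value."""
    kind: str                # "natural", "code", or "parallel"
    values: Tuple[str, ...]  # language codes, or the programming language name


def parse_language(tag: str) -> LanguageTag:
    """Classify a `language` metadata value into one of the three documented forms."""
    if tag.startswith("code:"):
        # e.g. "code:python" -> programming language "python"
        return LanguageTag("code", (tag[len("code:"):],))
    codes = tuple(part.strip() for part in tag.split(","))
    unknown = [code for code in codes if code not in NATURAL_LANGUAGES]
    if unknown:
        raise ValueError(f"unexpected language code(s): {unknown}")
    if len(codes) == 1:
        return LanguageTag("natural", codes)
    # e.g. "fr,en": parallel data, in the order the languages appear in the text
    return LanguageTag("parallel", codes)
```

For instance, `parse_language("code:c++")` yields the kind `"code"`, while `parse_language("en,fr")` yields the kind `"parallel"` with the codes kept in their original order.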

Dataset Composition

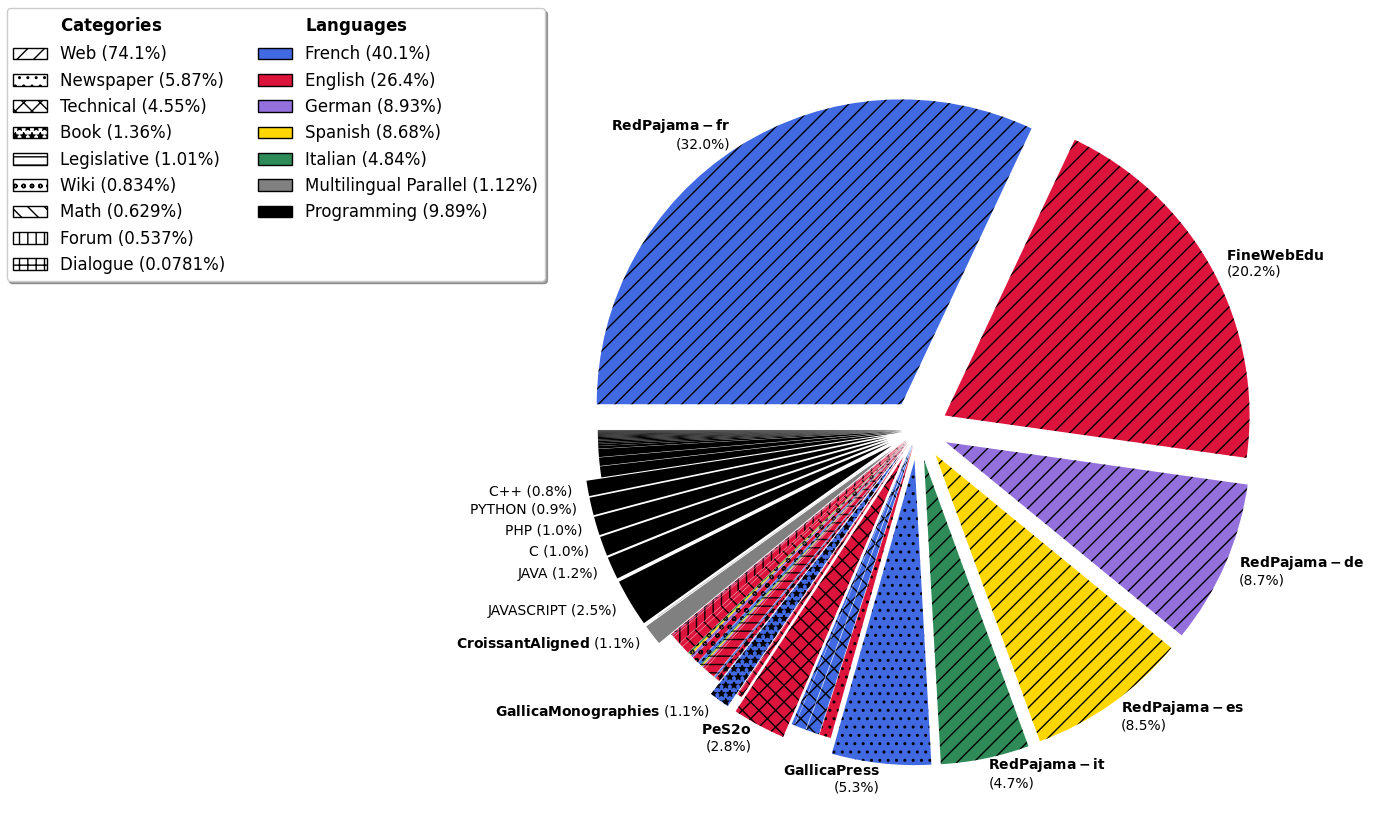

The following figure shows the distribution of the dataset by language (colors) and category (hatch patterns).

The following table provides an overview of the dataset composition, broken down by source and language. Sources are grouped by category. The table provides the numbers of documents, words, tokens, and characters for each subset. All numbers in this table are available in the CSV file dataset_composition.csv. Token counts are computed using the tokenizer for Lucie-7B.

| Subset | Language | M docs | B words | B tokens | B chars | |
|---|---|---|---|---|---|---|
| TOTAL | | 2186.562 | 1356.021 | 2314.862 | 8842.200 | |
| | French (fr) | 653.812 | 583.687 | 928.618 | 3619.672 | composition details |
| | English (en) | 554.289 | 412.202 | 611.894 | 2553.541 | composition details |
| | code | 125.769 | 51.306 | 228.954 | 630.749 | composition details |
| | German (de) | 165.915 | 105.609 | 206.610 | 764.779 | composition details |
| | Spanish (es) | 171.651 | 123.857 | 200.825 | 759.457 | composition details |
| | Italian (it) | 99.440 | 62.051 | 112.031 | 404.454 | composition details |
| | fr-en | 410.032 | 17.016 | 25.494 | 107.658 | composition details |
| | it-en | 1.901 | 0.100 | 0.151 | 0.638 | |
| | es-en | 1.961 | 0.103 | 0.143 | 0.631 | |
| | de-fr | 1.792 | 0.0908 | 0.141 | 0.621 | |
| Category: Web | | | | | | |
| RedPajama | French (fr) | 640.770 | 477.758 | 741.023 | 2974.596 | composition details |
| | German (de) | 162.779 | 103.078 | 201.371 | 747.631 | composition details |
| | Spanish (es) | 169.447 | 121.751 | 197.125 | 746.984 | composition details |
| | Italian (it) | 97.324 | 60.194 | 108.416 | 393.012 | composition details |
| FineWebEdu | English (en) | 421.209 | 327.453 | 467.837 | 2018.215 | composition details |
| Category: Newspaper | | | | | | |
| GallicaPress | French (fr) | 3.205 | 67.496 | 121.606 | 408.882 | |
| AmericanStories | English (en) | 59.420 | 8.902 | 14.313 | 50.844 | composition details |
| Category: Technical | | | | | | |
| PeS2o | English (en) | 38.972 | 42.296 | 65.365 | 268.963 | |
| HAL | French (fr) | 0.349 | 9.356 | 16.224 | 58.308 | |
| Theses | French (fr) | 0.102 | 7.547 | 14.060 | 47.758 | |
| Pile (USPTO_Backgrounds) | English (en) | 5.139 | 3.492 | 5.105 | 22.309 | |
| OpenEdition | French (fr) | 0.939 | 2.225 | 3.604 | 14.459 | |
| Pile (PhilPapers) | English (en) | 0.0308 | 0.363 | 0.618 | 2.304 | |
| Pile (NIH_ExPorter) | English (en) | 0.914 | 0.288 | 0.431 | 1.979 | |
| Category: Book | | | | | | |
| GallicaMonographies | French (fr) | 0.278 | 15.106 | 25.169 | 90.456 | |
| Gutenberg | English (en) | 0.0563 | 3.544 | 5.516 | 20.579 | |
| | French (fr) | 0.00345 | 0.227 | 0.383 | 1.392 | |
| | German (de) | 0.00188 | 0.0987 | 0.193 | 0.654 | |
| | Italian (it) | 0.000958 | 0.0657 | 0.129 | 0.414 | |
| | Spanish (es) | 0.000735 | 0.0512 | 0.0920 | 0.303 | |
| Category: Legislative Texts | | | | | | |
| Pile (FreeLaw) | English (en) | 3.415 | 8.204 | 14.011 | 52.580 | |
| Eurovoc | English (en) | 0.272 | 1.523 | 2.571 | 9.468 | |
| | Italian (it) | 0.245 | 0.731 | 1.527 | 4.867 | |
| | German (de) | 0.247 | 0.678 | 1.497 | 4.915 | |
| | Spanish (es) | 0.246 | 0.757 | 1.411 | 4.684 | |
| OpenData | French (fr) | 1.169 | 0.755 | 1.209 | 4.638 | |
| QuestionsEcritesParlement | French (fr) | 0.189 | 0.108 | 0.156 | 0.705 | |
| LEGI | French (fr) | 0.621 | 0.0878 | 0.145 | 0.563 | |
| AmendementsParlement | French (fr) | 0.673 | 0.0452 | 0.0738 | 0.274 | |
| Category: Legislative Transcripts | | | | | | |
| Europarl | German (de) | 0.0102 | 0.0451 | 0.0734 | 0.327 | |
| | Spanish (es) | 0.0103 | 0.0524 | 0.0733 | 0.325 | |
| | French (fr) | 0.0103 | 0.0528 | 0.0717 | 0.339 | |
| | English (en) | 0.0111 | 0.0563 | 0.0690 | 0.339 | |
| DiscoursPublics | French (fr) | 0.110 | 0.163 | 0.238 | 1.025 | |
| InterventionsParlement | French (fr) | 1.832 | 0.104 | 0.157 | 0.654 | |
| Category: Wiki | | | | | | |
| Wikipedia | English (en) | 6.893 | 4.708 | 7.898 | 26.616 | |
| | German (de) | 2.877 | 1.709 | 3.476 | 11.252 | |
| | French (fr) | 2.648 | 1.726 | 2.940 | 9.879 | |
| | Spanish (es) | 1.947 | 1.245 | 2.124 | 7.161 | |
| | Italian (it) | 1.870 | 1.060 | 1.959 | 6.161 | |
| wikisource | French (fr) | 0.186 | 0.523 | 0.795 | 3.080 | |
| wiktionary | French (fr) | 0.650 | 0.0531 | 0.117 | 0.347 | |
| Category: Math | | | | | | |
| MathPile | English (en) | 0.737 | 3.408 | 9.637 | 27.290 | |
| Pile (DM_Mathematics) | English (en) | 0.992 | 1.746 | 4.928 | 8.127 | |
| Category: Forum | | | | | | |
| Pile (StackExchange) | English (en) | 15.269 | 4.534 | 10.275 | 33.609 | |
| Pile (Ubuntu_IRC) | English (en) | 0.0104 | 0.867 | 2.159 | 5.610 | |
| Category: Dialogue | | | | | | |
| Claire | English (en) | 0.949 | 0.818 | 1.161 | 4.709 | composition details |
| | French (fr) | 0.0393 | 0.210 | 0.311 | 1.314 | composition details |
| YouTube | French (fr) | 0.0375 | 0.145 | 0.336 | 1.003 | |
| STAC | English (en) | 0.0000450 | 0.0000529 | 0.000121 | 0.000327 | |
| Category: Multilingual Parallel Corpora | | | | | | |
| CroissantAligned | fr-en | 408.029 | 16.911 | 25.351 | 107.003 | |
| EuroparlAligned | it-en | 1.901 | 0.100 | 0.151 | 0.638 | |
| | fr-en | 2.003 | 0.105 | 0.143 | 0.655 | |
| | es-en | 1.961 | 0.103 | 0.143 | 0.631 | |
| | de-fr | 1.792 | 0.0908 | 0.141 | 0.621 | |
| Category: Programming | | | | | | |
| TheStack | JAVASCRIPT | 21.109 | 8.526 | 58.609 | 141.647 | |
| | JAVA | 20.152 | 7.421 | 27.680 | 89.297 | |
| | C | 8.626 | 5.916 | 24.092 | 57.428 | |
| | PHP | 15.905 | 4.865 | 22.883 | 66.844 | |
| | PYTHON | 12.962 | 5.434 | 21.683 | 64.304 | |
| | C++ | 6.378 | 4.584 | 18.835 | 50.892 | |
| | C# | 10.839 | 3.574 | 13.381 | 46.286 | |
| | GO | 4.730 | 2.735 | 10.262 | 25.738 | |
| | TYPESCRIPT | 10.637 | 2.617 | 9.836 | 28.815 | |
| | RUST | 1.387 | 0.872 | 3.241 | 9.529 | |
| | RUBY | 3.405 | 0.646 | 2.392 | 7.139 | |
| | SWIFT | 1.756 | 0.553 | 1.876 | 6.134 | |
| | KOTLIN | 2.243 | 0.454 | 1.758 | 5.769 | |
| | SCALA | 1.362 | 0.457 | 1.587 | 4.862 | |
| | TEX | 0.398 | 0.394 | 1.507 | 3.805 | |
| | LUA | 0.559 | 0.318 | 1.367 | 3.279 | |
| | DART | 0.933 | 0.308 | 1.242 | 3.864 | |
| | PERL | 0.392 | 0.297 | 1.149 | 2.634 | |
| | MATHEMATICA | 0.0269 | 0.120 | 1.117 | 1.720 | |
| | ASSEMBLY | 0.248 | 0.209 | 0.867 | 1.575 | |
| | HASKELL | 0.545 | 0.307 | 0.807 | 2.364 | |
| | FORTRAN | 0.165 | 0.192 | 0.780 | 1.843 | |
| | JULIA | 0.299 | 0.152 | 0.660 | 1.539 | |
| | OCAML | 0.160 | 0.130 | 0.430 | 1.107 | |
| | ERLANG | 0.0994 | 0.0657 | 0.260 | 0.726 | |
| | ELIXIR | 0.282 | 0.0731 | 0.258 | 0.737 | |
| | CLOJURE | 0.126 | 0.0448 | 0.179 | 0.492 | |
| | R | 0.0392 | 0.0278 | 0.158 | 0.305 | |
| | MATLAB | 0.000967 | 0.00865 | 0.0427 | 0.0372 | |
| | RACKET | 0.00420 | 0.00479 | 0.0153 | 0.0378 | |
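To make the data mix in the table easier to read, the per-language token counts from the TOTAL block can be turned into percentages. This is a small illustrative sketch: the numbers are copied from the "B tokens" column above, and the helper name `token_shares` is hypothetical.

```python
# Billions of tokens per language group, copied from the TOTAL block of the table.
TOKENS_B = {
    "fr": 928.618,
    "en": 611.894,
    "code": 228.954,
    "de": 206.610,
    "es": 200.825,
    "it": 112.031,
    "fr-en": 25.494,
    "it-en": 0.151,
    "es-en": 0.143,
    "de-fr": 0.141,
}
TOTAL_TOKENS_B = 2314.862  # TOTAL row of the table


def token_shares(tokens_b, total_b):
    """Return each group's share of the total token count, in percent."""
    return {group: 100.0 * count / total_b for group, count in tokens_b.items()}


shares = token_shares(TOKENS_B, TOTAL_TOKENS_B)
for group, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{group:>6}: {share:5.2f}%")
```

With these figures, French accounts for roughly 40% of the tokens and English for roughly 26%, which is consistent with the statement that the dataset contains more French than English data. The per-language rows sum to the TOTAL row up to rounding.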

Configurable Subsets and Versions

As the Lucie Training Dataset is a collection of multilingual corpora from different sources, it can be divided into subsets based on the source and language of its constituent corpora.

The list of possible configurations is available in the YAML header of this README file.

Each configuration corresponds to a pathname pattern in the data subdirectory.
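To illustrate how a configuration name maps to files, the sketch below checks a file path against a few of the patterns from the YAML header. It assumes that each `*` matches within a single path segment, and the example file name is hypothetical; the actual resolution of `data_files` patterns is performed by the dataset loading library, not by this code.

```python
from fnmatch import fnmatchcase

# A few configuration patterns copied from the YAML header of this card.
CONFIG_PATTERNS = {
    "default": "data/v*/*/*/*/*parquet",
    "fr": "data/v*/natural/fr/*/*parquet",
    "natural": "data/v*/natural/*/*/*.parquet",
    "code-python": "data/v*/code/python/*/*.parquet",
    "Wikipedia": "data/v*/natural/*/Wikipedia/*.parquet",
    "Wikipedia-fr": "data/v*/natural/fr/Wikipedia/*.parquet",
}


def matches(pattern: str, path: str) -> bool:
    """Match a path against a pattern segment by segment (no `*` across `/`)."""
    pattern_parts = pattern.split("/")
    path_parts = path.split("/")
    if len(pattern_parts) != len(path_parts):
        return False
    return all(
        fnmatchcase(segment, segment_pattern)
        for segment_pattern, segment in zip(pattern_parts, path_parts)
    )


def configs_for(path: str) -> set:
    """Return the names of all listed configurations whose pattern matches the path."""
    return {name for name, pattern in CONFIG_PATTERNS.items() if matches(pattern, path)}


# Hypothetical file name, used only to exercise the patterns.
example_path = "data/v1.2/natural/fr/Wikipedia/part_000.parquet"
print(sorted(configs_for(example_path)))
```

A single file can therefore belong to several configurations at once: a French Wikipedia file is reachable through the language-level, source-level, and combined configurations.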

The dataset is also available in the following versions:

- v1.1 / main (default): The data used for the first (main) pretraining phase of Lucie-7B, which contains approximately 2.3T tokens. The statistics above apply to this version.

- v1.2: An improved version of the main dataset, where

  - GallicaMonographies and GallicaPress have been filtered aggressively to remove documents with low OCR quality.

  - The `Ubuntu_IRC` and `PhilPapers` subsets of Pile have been refined by fixing encoding issues and removing documents in languages other than English, French, Spanish, German and Italian.

- v1.2-recent-web: The data used for the second pretraining phase (context extension) of Lucie-7B. This version is identical to `v1.2` with the exception that older snapshots of web data (before 2023 for RedPajama and before 2024 for FineWebEdu) have been excluded.

All data from `v1.1` that were not filtered out remain unchanged in `v1.2` and `v1.2-recent-web`.

Except for `v1.1`, which is a git tag, all versions are git branches in the dataset repository (e.g. `v1.2`).

The Example use in Python section contains example Python code for loading and iterating over the dataset with different configurations, including source, language and version.

Details on Data Sources

AmendementsParlement

- Source: Corpus contributed by OpenLLM partners.

- Extracted from: Regards citoyens. License: CC BY-SA.

- Description: A collection of proposed amendments by the French parliament. Documents contain the text of the proposed amendment, the name of the associated law as well as information on who voted on the amendment and what was decided.

AmericanStories

- Source: dell-research-harvard/AmericanStories. License: CC BY 4.0.

- Extracted from: Chronicling America. License: Open.

- Description: "The American Stories dataset is a collection of full article texts extracted from historical U.S. newspaper images. It includes nearly 20 million scans from the public domain Chronicling America collection maintained by the Library of Congress. The dataset is designed to address the challenges posed by complex layouts and low OCR quality in existing newspaper datasets" (from the dataset card). See the dataset composition details for statistics on documents by year. Dataset containing text retrieved through OCR.

- Pre-processing:

  - Filtering: To filter out documents with excessive OCR errors, the dataset was refined by discarding texts with a perplexity higher than 2310, measured using a CCNET model in English (see code details). The code to compute CCNET perplexity, parallelizing on parquet files, is available here.

- Citation: Melissa Dell, Jacob Carlson, Tom Bryan, Emily Silcock, Abhishek Arora, Zejiang Shen, Luca D'Amico-Wong, Quan Le, Pablo Querubin and Leander Heldring (2023). "American Stories: A Large-Scale Structured Text Dataset of Historical U.S. Newspapers," arxiv:2308.12477.
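The perplexity-based filtering described for AmericanStories can be sketched as a simple threshold on a per-document score. The code below is an illustration, not the pipeline used to build the dataset: `placeholder_perplexity` is a hypothetical stand-in and is not the CCNET model; only the threshold of 2310 comes from the description above.

```python
from typing import Callable, Dict, Iterable, Iterator

AMERICAN_STORIES_MAX_PERPLEXITY = 2310.0  # threshold quoted in the description


def filter_by_perplexity(
    documents: Iterable[Dict[str, str]],
    perplexity_fn: Callable[[str], float],
    max_perplexity: float = AMERICAN_STORIES_MAX_PERPLEXITY,
) -> Iterator[Dict[str, str]]:
    """Yield only the documents whose perplexity does not exceed the threshold."""
    for document in documents:
        if perplexity_fn(document["text"]) <= max_perplexity:
            yield document


def placeholder_perplexity(text: str) -> float:
    """Hypothetical stand-in scorer (NOT the CCNET model).

    It scores a text by its share of characters that are neither letters
    nor whitespace, as a crude proxy for OCR noise.
    """
    if not text:
        return float("inf")
    noisy = sum(1 for ch in text if not (ch.isalpha() or ch.isspace()))
    return 10000.0 * noisy / len(text)


documents = [
    {"id": "clean", "text": "The senator spoke to the crowd on Tuesday"},
    {"id": "noisy", "text": "T#e s3n@t0r sp0k3 t0 +h3 cr0wd 0n Tu3sd@y"},
]
kept = [doc["id"] for doc in filter_by_perplexity(documents, placeholder_perplexity)]
print(kept)
```

In a real pipeline, `perplexity_fn` would wrap a language model scorer; keeping it as a parameter lets the same filter be reused with a different threshold for other OCR-derived sources.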

Claire (French and English)

- Sources:

  - French dataset: OpenLLM-France/Claire-Dialogue-French-0.1. License: CC BY-NC-SA 4.0.

  - English dataset: OpenLLM-France/Claire-Dialogue-English-0.1. License: CC BY-NC-SA 4.0.

- Extracted from: see the datacards for the French and English datasets.

- Description: The Claire datasets are composed of transcripts of spoken conversations -- including parliamentary proceedings, interviews, debates, meetings, and free conversations -- as well as some written conversations from theater plays and written chats. The dataset is designed to help downstream performance of models fine-tuned for tasks requiring the comprehension of spontaneous spoken conversation, such as meeting summarization. Each dialogue is split into speech turns, and each speech turn is labeled with the name of the speaker or a unique identifier. See the composition details for the French dataset and the English dataset for a high-level view of the distribution of different types of documents in each dataset.

- Citation: Julie Hunter, Jérôme Louradour, Virgile Rennard, Ismaïl Harrando, Guokan Shang, Jean-Pierre Lorré (2023). The Claire French Dialogue Dataset. arXiv:2311.16840.

CroissantAligned

- Source: croissantllm/croissant_dataset_no_web_data (subset: `aligned_36b`). License: not specified.

- Extracted from:

  - Translation pairs: OPUS (99.6% of the data in CroissantAligned). Pairs extracted from OPUS are labeled as "UnbabelFrEn".

  - Thesis abstracts: French thesis abstract pairs. License: ETALAB-Licence-Ouverte-v2.0.

  - Song lyrics: lacoccinelle.

- Description: CroissantAligned contains samples of parallel French/English (or English/French) data. Data extracted from OPUS takes the form of sentence pairs, where one sentence is in French and the other is in English. OPUS pairs were passed through a custom pipeline designed to select the highest quality translation examples. Selected pairs are labeled "UnbabelFrEn" in the CroissantAligned dataset. The thesis abstract subset contains thesis abstracts paired with translations written by the thesis authors. The song lyrics are translated by contributors to www.lacoccinelle.net. Parallel data are used to boost the multilingual capabilities of models trained on them (Faysse et al., 2024).

- Pre-processing:

  - Language separation and tagging: The original text field of the Croissant dataset contains a sentence or passage in French or English immediately followed by its translation without any indication of which passage is in which language. The first step was thus to split each text into separate, monolingual passages and tag each passage with the appropriate language code, identified automatically using the langid library (see code details). In the Lucie Training Dataset, the `extra` metadata field for CroissantAligned contains separate keys, `text_fr` for French and `text_en` for English, that store the texts separately.

  - Random combination of texts prefixed by language: To create the text values, each monolingual text was re-paired with its translation, but random separators and various methods of prefixing the text with the language (name or code) were added. This was done as a precaution to prevent models trained on this data from switching languages when generating text and can be seen as a very basic instruction to translate the source (first) text into the target (second) text (see code details).

- Citation: Manuel Faysse, Patrick Fernandes, Nuno M. Guerreiro, António Loison, Duarte M. Alves, Caio Corro, Nicolas Boizard, João Alves, Ricardo Rei, Pedro H. Martins, Antoni Bigata Casademunt, François Yvon, André F.T. Martins, Gautier Viaud, Céline Hudelot, Pierre Colombo (2024). "CroissantLLM: A Truly Bilingual French-English Language Model," arXiv:2402.00786.
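The "random combination of texts prefixed by language" step described in the pre-processing above can be pictured with the following sketch. It is not the code used to build the dataset: the separators, the two prefix formats, and the random choice of which language comes first are all assumptions made for illustration.

```python
import random

LANGUAGE_NAMES = {"fr": "French", "en": "English"}
SEPARATORS = ["\n", "\n\n", " "]  # hypothetical set of separators


def prefix(lang: str, text: str, style: str) -> str:
    """Prefix a text with its language, either by name or by code."""
    if style == "name":
        return f"{LANGUAGE_NAMES[lang]}: {text}"
    return f"[{lang}] {text}"  # style == "code"


def combine_pair(texts: dict, rng: random.Random) -> str:
    """Join two monolingual texts with a random order, prefix style and separator."""
    langs = list(texts)
    rng.shuffle(langs)  # assumption: source/target order is drawn at random
    style = rng.choice(["name", "code"])
    separator = rng.choice(SEPARATORS)
    return separator.join(prefix(lang, texts[lang], style) for lang in langs)


rng = random.Random(0)  # seeded so that the sketch is reproducible
pair = {"fr": "Bonjour le monde.", "en": "Hello world."}
print(combine_pair(pair, rng))
```

Varying the prefix and separator in this way means a model sees the language of each passage stated explicitly, in several surface forms, before the passage itself.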

DiscoursPublics

- Source: Corpus contributed by OpenLLM partners.

- Extracted from: Vie Publique. License: ETALAB-Licence-Ouverte-v2.0.

- Description: A collection of public speeches from the principal public actors in France including speeches from the French President starting from 1974 and from the Prime Minister and members of the government starting from 1980.

- Pre-processing:

  - Text cleaning: Mentions of the source URL and the number of views were removed from the text.

Europarl and EuroparlAligned

- Sources:

  - `fr-en`, `es-en`, `it-en` parallel data: Europarl v7. License: Open.

  - `fr`, `en`, `de`, `es` monolingual data and `de-fr` parallel data: Europarl v10. License: Open.

- Description: "The Europarl parallel corpus is extracted from the proceedings of the European Parliament. It includes versions in 21 European languages: Romanic (French, Italian, Spanish, Portuguese, Romanian), Germanic (English, Dutch, German, Danish, Swedish), Slavik (Bulgarian, Czech, Polish, Slovak, Slovene), Finni-Ugric (Finnish, Hungarian, Estonian), Baltic (Latvian, Lithuanian), and Greek. The goal of the extraction and processing was to generate sentence aligned text for statistical machine translation systems" (www.statmt.org).

- Pre-processing:

  - Random combination of aligned texts prefixed by language: The same process as used for the CroissantAligned dataset was applied to the EuroparlAligned dataset (see code details). In the Lucie Training Dataset, the `extra` field in the metadata for EuroparlAligned provides texts in the two languages under the sub-fields `text_1` and `text_2`, and the corresponding language codes under `lang_1` and `lang_2`.

- Citation: Philipp Koehn (2005). "Europarl: A Parallel Corpus for Statistical Machine Translation," MT Summit.
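The `extra` sub-fields described above for EuroparlAligned make it possible to recover the two monolingual texts from a sample. The sketch below assumes that `extra` is either a JSON string or an already decoded dictionary; the helper name `split_aligned` and the example record are hypothetical.

```python
import json


def split_aligned(extra) -> dict:
    """Map each language code to its text, using the documented sub-fields."""
    if isinstance(extra, str):
        extra = json.loads(extra)  # assumption: `extra` may be stored as a JSON string
    return {
        extra["lang_1"]: extra["text_1"],
        extra["lang_2"]: extra["text_2"],
    }


# Hypothetical example record, built only to exercise the helper.
example_extra = json.dumps(
    {
        "lang_1": "fr",
        "text_1": "Reprise de la session",
        "lang_2": "en",
        "text_2": "Resumption of the session",
    }
)
print(split_aligned(example_extra))
```

This gives access to the clean sentence pair independently of the random separators and language prefixes used in the `text` field.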

Eurovoc

- Source: EuropeanParliament/Eurovoc. License: EUPL 1.1.

- Extracted from: Cellar. License: CC BY-4.0.

- Description: A collection of multilingual documents from the data repository of the Publications Office of the European Union annotated with Eurovoc labels. The corpus contains legal, policy-related, historical and organizational information about the EU. The dataset contains text retrieved through OCR.

- Pre-processing:

- Filtering: To filter out documents with excessive OCR errors, the dataset was refined by discarding texts with a perplexity higher than 1500, measured using a CCNET model in English (see code details). The code to compute CCNET perplexity, parallelizing on parquet files, is available here.

- Text cleaning:

Mentions of Credit Institutions Directives (CID) that appear in the raw texts, such as (cid:146), were removed.

- Citations:

- Ilias Chalkidis, Emmanouil Fergadiotis, Prodromos Malakasiotis, Nikolaos Aletras, and Ion Androutsopoulos (2019). "Extreme Multi-Label Legal Text Classification: A Case Study in EU Legislation," Proceedings of the Natural Legal Language Processing Workshop 2019, pages 78–87, Minneapolis, Minnesota. Association for Computational Linguistics.

- Ilias Chalkidis, Manos Fergadiotis, Prodromos Malakasiotis and Ion Androutsopoulos (2019). "Large-Scale Multi-Label Text Classification on EU Legislation," Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics (ACL 2019), Florence, Italy, (short papers).

- Andrei-Marius Avram, Vasile Pais, and Dan Ioan Tufis (2021). "PyEuroVoc: A Tool for Multilingual Legal Document Classification with EuroVoc Descriptors," Proceedings of the International Conference on Recent Advances in Natural Language Processing (RANLP 2021), pages 92–101, Held Online. INCOMA Ltd.

- Zein Shaheen, Gerhard Wohlgenannt and Erwin Filtz (2020). "Large scale legal text classification using transformer models," arXiv:2010.12871.
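The two Eurovoc pre-processing steps described above can be sketched as follows. This is an illustration, not the project's code: the perplexity is assumed to have been computed beforehand by the CCNET model, and the function names are hypothetical.

```python
import re

# Matches mentions of the form "(cid:146)".
CID_PATTERN = re.compile(r"\(cid:\d+\)")

MAX_PERPLEXITY = 1500  # threshold quoted above for Eurovoc


def clean_cid(text):
    """Remove every "(cid:<number>)" mention from the text."""
    return CID_PATTERN.sub("", text)


def keep_document(perplexity):
    """Keep a document unless its perplexity is higher than the threshold."""
    return perplexity <= MAX_PERPLEXITY


cleaned = clean_cid("the Member State(cid:146)s budget")
```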

FineWebEdu

- Source: HuggingFaceFW/fineweb-edu. License: ODC-BY.

- Extracted from: FineWeb. License: ODC-BY.

- Description: A 1.3 trillion token selection from FineWeb, which contains 15 trillion tokens of curated data from 96 Common Crawl dumps. Content in FineWebEdu has been selected by a custom designed classifier for its high-quality, educational content. Most recent crawl: 2024-10 (see composition details for information about the crawls included in this dataset.)

- Pre-processing:

- Removing duplicate URLs: URLs were removed if their base domain overlapped with a dataset already in the Lucie Training Dataset (e.g., "philpapers.org") in order to increase diversity of content (see code details).

- Filtering by robots.txt files: we collected robots.txt files and removed all documents for which CCBot is disallowed, or for which we failed to collect information as of July 2024, in an effort to select data free from opt-out evidence according to Article 4 of the European copyright directive (2019).

- Citation: Guilherme Penedo, Hynek Kydlíček, Loubna Ben allal, Anton Lozhkov, Margaret Mitchell, Colin Raffel, Leandro Von Werra, Thomas Wolf (2024). "The FineWeb Datasets: Decanting the Web for the Finest Text Data at Scale," arXiv:2406.17557.
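The URL-level filters described above can be sketched with the standard library alone. This is an assumption-laden illustration rather than the actual pipeline: `EXCLUDED_DOMAINS` is a hypothetical list, the base-domain extraction is deliberately naive (it keeps the last two labels of the host name, which is wrong for suffixes such as "co.uk"), and the robots.txt content is assumed to have been downloaded already.

```python
from urllib.parse import urlparse
from urllib.robotparser import RobotFileParser

# Hypothetical list of domains already covered by other sources.
EXCLUDED_DOMAINS = {"philpapers.org"}


def base_domain(url):
    """Naive base domain: the last two labels of the host name."""
    host = urlparse(url).hostname or ""
    return ".".join(host.split(".")[-2:])


def overlaps_existing_source(url):
    """True if the URL belongs to a domain already present in the dataset."""
    return base_domain(url) in EXCLUDED_DOMAINS


def ccbot_allowed(robots_txt, url):
    """True if the given robots.txt content lets CCBot fetch the URL.

    A missing robots.txt (None) is treated as a failure to collect
    information, so the document is rejected.
    """
    if robots_txt is None:
        return False
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("CCBot", url)


def keep_url(url, robots_txt):
    """Combine both filters: no domain overlap and CCBot not disallowed."""
    return not overlaps_existing_source(url) and ccbot_allowed(robots_txt, url)
```

For example, `keep_url("https://example.com/page", "User-agent: CCBot\nDisallow: /\n")` rejects the page because CCBot is disallowed for the whole site.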

GallicaMonographies

- Source: Corpus contributed by OpenLLM partners. A version is also published here: PleIAs/French-PD-Books. License: Public domain.

- Extracted from: Gallicagram.

- Description: A large collection of French monographs in the public domain made available through the French National Library (Gallica). Dataset containing text retrieved through OCR.

- Pre-processing:

- Text cleaning for v1.1: To filter out documents with excessive OCR errors, the dataset was split into chunks and chunks were kept if the source language was detected as French by FastText with a confidence score of 0.65 or above, and the perplexity score, as measured using a CCNET model in French, was between 10 and 1000. The code to compute CCNET perplexity, parallelizing on parquet files, is available here.

- Filtering for v1.2: Using OCR scores provided in the metadata of the source corpus, documents with an OCR score of less than 90 out of 100 were filtered out.

GallicaPress

- Source: Corpus contributed by OpenLLM partners. A version is also published here: PleIAs/French-PD-Newspapers. License: Public domain.

- Extracted from: Gallicagram.

- Description: A large collection of French newspapers and periodicals in the public domain made available through the French National Library (Gallica). Dataset containing text retrieved through OCR.

- Pre-processing:

- Text cleaning for v1.1: To filter out documents with excessive OCR errors, the dataset was split into chunks and chunks were kept if the source language was detected as French by FastText with a confidence score of 0.65 or above, and the perplexity score, as measured using a CCNET model in French, was between 10 and 1000 (see code details). The code to compute CCNET perplexity, parallelizing on parquet files, is available here.

- Filtering for v1.2: Using OCR scores provided in the metadata of the source corpus, documents with an OCR score of less than 90 out of 100 were filtered out.
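The thresholds quoted for GallicaMonographies and GallicaPress can be written as two small predicates. This is a sketch under stated assumptions: the language label, its confidence and the perplexity are taken as precomputed inputs (no FastText or CCNET call is made here), the bounds are treated as inclusive, and the function names are hypothetical.

```python
def keep_chunk(lang, confidence, perplexity):
    """Apply the v1.1 thresholds to one chunk of text.

    Assumption: "between 10 and 1000" is read as an inclusive range.
    """
    is_french = lang == "fr" and confidence >= 0.65
    plausible_perplexity = 10 <= perplexity <= 1000
    return is_french and plausible_perplexity


def keep_by_ocr_score(ocr_score):
    """Apply the v1.2 rule: drop documents with an OCR score below 90."""
    return ocr_score >= 90
```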

Gutenberg

- Source: Corpus compiled by OpenLLM partners.

- Extracted from:

- aleph.gutenberg.org via Project Gutenberg. License: Open.

- pgcorpus. License: CC BY-4.0.

- Description: A collection of free eBooks, manually prepared by human annotators.

- Pre-processing:

- Filtering: The dataset was filtered based on the author date of death, so that only texts from authors who died more than 70 years ago are included (80 years for French authors). See code details here. This filtering was done to ensure that the texts are in the public domain.

- Text cleaning: Headers and footers containing information about Project Gutenberg were removed (see code details).
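The public-domain rule used for Gutenberg can be sketched as a single predicate. The function name, the `is_french_author` flag and the handling of unknown death dates are assumptions made for illustration; the card only states the 70-year and 80-year thresholds.

```python
def is_public_domain(death_year, is_french_author, reference_year):
    """True if the author died more than 70 years ago (80 for French authors)."""
    if death_year is None:
        # Assumption: texts with an unknown date of death are rejected.
        return False
    required_gap = 80 if is_french_author else 70
    return reference_year - death_year > required_gap
```

With a reference year of 2024, an author who died in 1950 passes the 70-year rule but not the 80-year rule applied to French authors.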

HAL

- Source: bigscience-data/roots_fr_hal_archives_ouvertes. License: Roots dataset.

- Extracted from: HAL (Open access).

- Description: A collection of scientific papers and manuscripts distributed through the open science platform HAL. Dataset containing text retrieved through OCR.

- Pre-processing:

- Filtering: To filter out documents with excessive OCR errors, the dataset was refined by discarding texts with a perplexity higher than 930, measured using a CCNET model in French (see code details). The code to compute CCNET perplexity, parallelizing on parquet files, is available here.

- Citation: Hugo Laurençon, Lucile Saulnier, Thomas Wang, Christopher Akiki, Albert Villanova del Moral, Teven Le Scao, Leandro Von Werra, Chenghao Mou, Eduardo González Ponferrada, Huu Nguyen, Jörg Frohberg, Mario Šaško, Quentin Lhoest, Angelina McMillan-Major, Gerard Dupont, Stella Biderman, Anna Rogers, Loubna Ben allal, Francesco De Toni, Giada Pistilli, Olivier Nguyen, Somaieh Nikpoor, Maraim Masoud, Pierre Colombo, Javier de la Rosa, Paulo Villegas, Tristan Thrush, Shayne Longpre, Sebastian Nagel, Leon Weber, Manuel Muñoz, Jian Zhu, Daniel Van Strien, Zaid Alyafeai, Khalid Almubarak, Minh Chien Vu, Itziar Gonzalez-Dios, Aitor Soroa, Kyle Lo, Manan Dey, Pedro Ortiz Suarez, Aaron Gokaslan, Shamik Bose, David Adelani, Long Phan, Hieu Tran, Ian Yu, Suhas Pai, Jenny Chim, Violette Lepercq, Suzana Ilic, Margaret Mitchell, Sasha Alexandra Luccioni, Yacine Jernite (2022). "The BigScience ROOTS Corpus: A 1.6TB Composite Multilingual Dataset," Advances in Neural Information Processing Systems (NeurIPS), 35, 31809-31826.

InterventionsParlement

- Source: Corpus contributed by OpenLLM partners.

- Extracted from: Regards citoyens. License: CC BY-SA.

- Description: Transcripts of remarks made during French parliamentary debates. Each text contains a continuous remark by a single speaker.

LEGI

- Source: Corpus contributed by OpenLLM partners. A version is also published here: Nicolas-BZRD/DILA_OPENDATA_FR_2023.

- Extracted from: OpenData (Data collection date: October, 2023).

- Description: "The French Government Open Data (DILA) Dataset is a collection of text data extracted from various sources provided by the French government, specifically the Direction de l'information légale et administrative (DILA). This dataset contains a wide range of legal, administrative, and legislative documents. The data has been organized into several categories for easy access and analysis" (from the dataset card).

MathPile (Commercial)

- Source: GAIR/MathPile_Commercial. License: CC BY-SA 4.0.

- Extracted from: MathPile. License: CC BY-SA-NC 4.0.

- Description: A preprocessed collection of documents focused on math, including Textbooks, arXiv, Wikipedia, ProofWiki, StackExchange, and web pages from Common Crawl. The content targets a range of levels, from kindergarten through postgraduate level. MathPile_Commercial was obtained by removing documents from MathPile that do not allow commercial use.

- Pre-processing:

- Formatting: Converted the content of StackExchange questions and answers to match the {"text": value} format, using the following formula:

text = sample["question"]["Body"] + "\n\n".join([answer["Body"] for answer in sample["answers"]])

- Citation: Zengzhi Wang, Rui Xia and Pengfei Liu (2023). "Generative AI for Math: Part I -- MathPile: A Billion-Token-Scale Pretraining Corpus for Math," arXiv:2312.17120.
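The StackExchange formatting formula given for MathPile can be tried on a toy sample. The wrapper `format_stackexchange` and the sample are hypothetical; only the concatenation itself comes from the formula.

```python
def format_stackexchange(sample):
    """Concatenate the question body with the answer bodies into {"text": value}."""
    answers = "\n\n".join(answer["Body"] for answer in sample["answers"])
    return {"text": sample["question"]["Body"] + answers}


# Made-up sample, for illustration only.
sample = {
    "question": {"Body": "What is 2 + 2?"},
    "answers": [{"Body": "It is 4."}, {"Body": "Four."}],
}
formatted = format_stackexchange(sample)
```

As written, the formula inserts the blank-line separator only between answers, so the first answer directly follows the question body.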

OpenData

- Source: Nicolas-BZRD/DILA_OPENDATA_FR_2023 (balo, dole, inca, kali, and sarde subsets). License: ODC-BY.

- Extracted from: OpenData (Data collection date: October, 2023).

- Description: "The French Government Open Data (DILA) Dataset is a collection of text data extracted from various sources provided by the French government, specifically the Direction de l'information légale et administrative (DILA). This dataset contains a wide range of legal, administrative, and legislative documents. The data has been organized into several categories for easy access and analysis" (from the dataset card).

OpenEdition

- Source: Corpus contributed by OpenLLM partners.

- Extracted from: Open Edition. License: Open Edition Books.

- Description: A collection of scientific books, journal articles, blog entries and event descriptions.

PeS2o (v2)

- Source: allenai/peS2o version v2. License: ODC BY-v1.0.

- Extracted from: S2ORC (see aclanthology). License: ODC BY-v1.0.

- Description: A preprocessed collection of academic papers designed for pre-training of language models. PeS2o is composed of two subsets: one containing full papers and one containing only paper titles and abstracts. Dataset containing (some) text retrieved through OCR. Knowledge cutoff: 2023-01-03.

- Citation: Luca Soldaini and Kyle Lo (2023). "peS2o (Pretraining Efficiently on S2ORC) Dataset," Allen Institute for AI. GitHub.

Pile (Uncopyrighted)

- Source: monology/pile-uncopyrighted. License: Other.

- Extracted from: FreeLaw, StackExchange, USPTO Backgrounds, DM Mathematics, Ubuntu IRC, PhilPapers, NIH ExPorter from The Pile. License: MIT.

- Description (from the Datasheet):

- FreeLaw: "The Free Law Project is US registered non-profit that provide access to millions of legal opinions and analytical tools for academic studies in the legal realm."

- StackExchange: "The StackExchange dataset is a dump of anonymized user-contributed content on the Stack Exchange network, a popular collection of websites centered around user-contributed questions and answers."

- USPTO Backgrounds: "The USPTO Backgrounds dataset is a set of background sections from patents granted by the United States Patent and Trademark Office, derived from its published bulk archives."

- DM Mathematics: "The DeepMind Mathematics dataset consists of a collection of mathematical problems such as algebra, arithmetic, calculus, number theory, and probability, formatted as natural language prompts Saxton et al., 2019."

- Ubuntu IRC: "The Ubuntu IRC dataset is derived from the publicly available chatlogs of all Ubuntu-related channels on the Freenode IRC chat server."

- PhilPapers: a dataset of open access philosophy publications from an international database maintained by the Center for Digital Philosophy at the University of Western Ontario.

- NIH ExPORTER: "The NIH Grant abstracts provides a bulk-data repository for awarded applications through the ExPORTER service covering the fiscal years 1985-present."

- Pre-processing (v1.2 only):

- Filtering of PhilPapers: Papers were removed if their language, detected using Stanza, was not classified as English, French, German, Spanish or Italian.

- Filtering and text cleaning of Ubuntu IRC: Texts from some channels were excluded to avoid data from languages other than English, French, German, Spanish or Italian and certain encoding errors were fixed (see code details here).

- Citations:

- Leo Gao, Stella Biderman, Sid Black, Laurence Golding, Travis Hoppe, Charles Foster, Jason Phang, Horace He, Anish Thite, Noa Nabeshima, Shawn Presser, Connor Leahy (2020). "The Pile: An 800GB Dataset of Diverse Text for Language Modeling," arXiv:2101.00027.

- Stella Biderman, Kieran Bicheno, Leo Gao (2022). "Datasheet for the Pile," arXiv:2201.07311.

QuestionsEcritesParlement

- Source: Corpus contributed by OpenLLM partners.

- Extracted from: Regards citoyens. License: CC BY-SA.

- Description: Collection of long written questions, read during a session at the French National Assembly. Questions are asked by a member of the French parliament and addressed to a minister (who is given two months to respond).

RedPajama (v2)

- Source: togethercomputer/RedPajama-Data-V2. License: Apache 2.0 (data preparation code), Not specified (data) but see Common Crawl terms of use.

- Extracted from: Common Crawl.

- Description: "RedPajama-V2 is an open dataset for training large language models. The dataset includes over 100B text documents coming from 84 CommonCrawl snapshots and processed using the CCNet pipeline. Out of these, there are 30B documents in the corpus that additionally come with quality signals, and 20B documents that are deduplicated" (from GitHub). Most recent crawl for French data in the Lucie Training Dataset v1.1: 2023-14. (For more details on the time periods covered by crawls in this dataset see the composition details for French, German, Italian and Spanish.)

- Pre-processing and deduplication:

- Url filtering:

- Removing duplicate urls: urls were removed if their base domain overlapped with a dataset already in the Lucie Training Dataset (e.g., "theses.fr") in order to increase diversity of content (see code details).

- Filtering certain toxic content: urls from a list of blacklisted content were removed (see code details).

- Filtering by robots.txt files: we collected robots.txt files and removed all documents for which CCBot is disallowed, or for which we failed to collect information as of July 2024, in an effort to select data free from opt-out evidence according to Article 4 of the European copyright directive (2019).

- Filtering: A series of filters were applied using quality signals already available in the dataset. This includes (see code details):

- CCnet perplexity below 10 or above 1000

- C4 filtering (including removal of documents that contain toxic words)

- Gopher filtering and repetition removal

- Redpajama document deduplication

- Removal of personally identifying information (PII): email addresses and IP addresses were replaced with random addresses (see code details).

- MinHash deduplication was performed on each snapshot and language independently, as proposed in FineWeb. For the MinHash configuration, see code details.

The Datatrove library was used to perform both filtering and deduplication stages.


- Citation: Together Computer (2023). "RedPajama-Data-v2: an Open Dataset with 30 Trillion Tokens for Training Large Language Models," GitHub.
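The PII replacement and the perplexity filter described for RedPajama can be sketched as follows. This is not the Datatrove implementation used by the authors: the regular expressions are simplified, only IPv4 addresses are handled, the replacement domain "example.com" is an arbitrary choice, and the perplexity bounds are assumed to be inclusive.

```python
import random
import re

# Simplified patterns, for illustration only.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IPV4_PATTERN = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def random_email(rng):
    """Build a random address on a placeholder domain."""
    user = "".join(rng.choice("abcdefghijklmnopqrstuvwxyz") for _ in range(8))
    return f"{user}@example.com"


def random_ipv4(rng):
    """Build a random dotted-quad address."""
    return ".".join(str(rng.randint(0, 255)) for _ in range(4))


def anonymize(text, rng):
    """Replace email addresses and IPv4 addresses with random ones."""
    text = EMAIL_PATTERN.sub(lambda _: random_email(rng), text)
    return IPV4_PATTERN.sub(lambda _: random_ipv4(rng), text)


def perplexity_in_range(perplexity):
    """False for documents with a perplexity below 10 or above 1000."""
    return 10 <= perplexity <= 1000


rng = random.Random(0)  # seeded so the sketch is reproducible
anonymized = anonymize("Contact jane.doe@mail.org from 192.168.0.1.", rng)
```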

STAC

- Source: STAC. License: CC BY-SA-NC 4.0.

- Description: A collection of multiparty chats from an online version of the game Settlers of Catan. The full STAC corpus contains annotations for discourse structure. We use only the text of the chats.

- Citation: Nicholas Asher, Julie Hunter, Mathieu Morey, Farah Benamara and Stergos Afantenos (2016). "Discourse structure and dialogue acts in multiparty dialogue: the STAC corpus," The Tenth International Conference on Language Resources and Evaluation (LREC 2016). European Language Resources Association, pp. 2721-2727.

TheStack (v1.2)

- Source: bigcode/the-stack-dedup. License: Other (mixture of copyleft licenses).

- Extracted from: GitHub via GHarchive. Mixed licenses for source.

- Description: "The Stack contains over 6TB of permissively-licensed source code files covering 358 programming languages. The dataset was created as part of the BigCode Project, an open scientific collaboration working on the responsible development of Large Language Models for Code (Code LLMs). The Stack serves as a pre-training dataset for Code LLMs, i.e., code-generating AI systems which enable the synthesis of programs from natural language descriptions as well as other from code snippets. This is the near-deduplicated version with 3TB data" (from the dataset card).

- Citation: Denis Kocetkov, Raymond Li, Loubna Ben Allal, Jia Li, Chenghao Mou, Carlos Muñoz Ferrandis, Yacine Jernite, Margaret Mitchell, Sean Hughes, Thomas Wolf, Dzmitry Bahdanau, Leandro von Werra and Harm de Vries (2022). "The Stack: 3 TB of permissively licensed source code," arXiv:2211.15533.

Theses

- Source: Corpus contributed by OpenLLM partners.

- Extracted from: theses.fr (License: Licence Ouverte / Open Licence version 2.0) and HAL (Open access).

- Description: A collection of doctoral theses published in France. Dataset containing text retrieved through OCR.

- Pre-processing:

- Text cleaning:

- Title pages about HAL, pages containing a significant fraction of control characters, and duplicate lines were removed (see code details).

- Because the results of OCR on tables and graphics can give rise to garbage text, the text was cleaned by removing the most suspicious chunks. In particular, a chunk was removed if it was not detected as being written in French, English, Spanish, German or Italian, or if the perplexity of a CCNet Language Model on the chunk was higher than 2000 (see code details). The code to compute CCNET perplexity, parallelizing on parquet files, is available here.

- Filtering: Texts with fewer than 1000 words or 10000 characters were removed (see code details).
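Two of the Theses steps above, duplicate-line removal and the length filter, can be sketched in a few lines. The sketch assumes that a "duplicate line" is any non-empty line that repeats an earlier one anywhere in the text (the card does not specify whether only consecutive repeats count), and the function names are hypothetical.

```python
def remove_duplicate_lines(text):
    """Drop non-empty lines that repeat an earlier line, keeping the first copy."""
    seen = set()
    kept = []
    for line in text.splitlines():
        key = line.strip()
        if key and key in seen:
            continue
        seen.add(key)
        kept.append(line)
    return "\n".join(kept)


def is_long_enough(text, min_words=1000, min_chars=10000):
    """False for texts with fewer than 1000 words or 10000 characters."""
    return len(text.split()) >= min_words and len(text) >= min_chars
```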


Wikipedia, Wikisource, Wiktionary

- Source: Corpus contributed by LINAGORA Labs (OpenLLM-France). Also published here:

- Extracted from: Wikimedia dumps. License: GFDL/CC BY-SA.

YouTube

- Source: Corpus contributed by LINAGORA Labs (OpenLLM-France) and LeVoiceLab.

- Extracted from: YouTube.

- Description: French subtitles from videos published with permissive licenses on YouTube.

Example use in Python

Load the dataset

Load and iterate over the full dataset using the datasets library:

from datasets import load_dataset

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", split="train", streaming=True)

for sample in dataset:

    text = sample["text"]

    # … do something with the text
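Because the dataset is streamed, it is often convenient to look at only the first few samples instead of iterating over everything. The sketch below uses `itertools.islice` for that; `head` is a hypothetical helper and `fake_stream` is a stand-in generator so the example runs without downloading anything.

```python
from itertools import islice


def head(samples, n=3):
    """Return the first n items of any iterable without consuming the rest."""
    return list(islice(samples, n))


# Stand-in for a streaming dataset; with the real one, pass `dataset` instead.
fake_stream = ({"text": f"document {i}"} for i in range(1_000_000))
first_texts = [sample["text"] for sample in head(fake_stream, n=2)]
```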

Iterate over a subset

Several configurations are available to select a language, a source, or both, illustrated in the following examples.

The list of possible configurations can be obtained programmatically:

from datasets import load_dataset_builder

config_names = list(load_dataset_builder("OpenLLM-France/Lucie-Training-Dataset").builder_configs)

print(config_names)

['default', 'en', 'fr', 'de', 'es', 'it', 'de,fr', 'es,en', 'fr,en', 'it,en', 'natural', 'code', 'code-assembly', 'code-c', 'code-c#', 'code-c++', 'code-clojure', 'code-dart', 'code-elixir', 'code-erlang', 'code-fortran', 'code-go', 'code-haskell', 'code-java', 'code-javascript', 'code-julia', 'code-kotlin', 'code-lua', 'code-mathematica', 'code-matlab', 'code-ocaml', 'code-perl', 'code-php', 'code-python', 'code-r', 'code-racket', 'code-ruby', 'code-rust', 'code-scala', 'code-swift', 'code-tex', 'code-typescript', 'AmendementsParlement', 'AmericanStories', 'Claire', 'Claire-en', 'Claire-fr', 'CroissantAligned', 'DiscoursPublics', 'Europarl', 'Europarl-de', 'Europarl-en', 'Europarl-es', 'Europarl-fr', 'EuroparlAligned', 'EuroparlAligned-de,fr', 'EuroparlAligned-es,en', 'EuroparlAligned-fr,en', 'EuroparlAligned-it,en', 'Eurovoc', 'Eurovoc-de', 'Eurovoc-en', 'Eurovoc-es', 'Eurovoc-it', 'FineWebEdu', 'GallicaMonographies', 'GallicaPress', 'Gutenberg', 'Gutenberg-de', 'Gutenberg-en', 'Gutenberg-es', 'Gutenberg-fr', 'Gutenberg-it', 'HAL', 'InterventionsParlement', 'LEGI', 'MathPile', 'OpenData', 'OpenEdition', 'PeS2o', 'PeS2o-s2ag', 'PeS2o-s2orc', 'Pile', 'Pile-DM_Mathematics', 'Pile-FreeLaw', 'Pile-NIH_ExPorter', 'Pile-PhilPapers', 'Pile-StackExchange', 'Pile-USPTO_Backgrounds', 'Pile-Ubuntu_IRC', 'QuestionsEcritesParlement', 'RedPajama', 'RedPajama-de', 'RedPajama-es', 'RedPajama-fr', 'RedPajama-it', 'Stac', 'TheStack', 'Theses', 'Wikipedia', 'Wikipedia-de', 'Wikipedia-en', 'Wikipedia-es', 'Wikipedia-fr', 'Wikipedia-it', 'Wikisource', 'Wiktionary', 'YouTube']
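Since the list is long, it can help to narrow it down to one source. The helper below is a hypothetical sketch that relies only on the naming pattern visible in the list above (a source name, optionally followed by "-" and a language or subset).

```python
def configs_for_source(config_names, source):
    """Return the configuration for one source together with its sub-configurations."""
    prefix = source + "-"
    return [name for name in config_names if name == source or name.startswith(prefix)]


# A few names copied from the list above.
some_configs = ["Wikipedia", "Wikipedia-de", "Wikipedia-fr", "Wikisource", "Pile", "Pile-PhilPapers"]
wikipedia_configs = configs_for_source(some_configs, "Wikipedia")
```

Matching on the "-" separator keeps unrelated names with a shared prefix apart, for instance "Europarl" and "EuroparlAligned".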

Below are some examples of how to load data from different sources and in different languages.

Load data in French:

from datasets import load_dataset

kwargs = dict(split="train", streaming=True)

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", "fr", **kwargs)

Load data where French and English are aligned:

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", "fr,en", **kwargs)

Load data corresponding to files with programming languages:

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", "code", **kwargs)

Load data in Python:

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", "code-python", **kwargs)

Load data from Wikipedia (in all available languages):

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", "Wikipedia", **kwargs)

Load data from French pages of Wikipedia (fr.wikipedia.org):

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", "Wikipedia-fr", **kwargs)

Load the Pile dataset:

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", "Pile", **kwargs)

Load the subset "PhilPapers" from the Pile dataset:

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", "Pile-PhilPapers", **kwargs)

Load a specific version

You can load a specific version with the datasets Python package using the revision parameter of load_dataset(…):

from datasets import load_dataset

kwargs = dict(split="train", streaming=True)

name = None # or a configuration (e.g. "fr", "code-python", "Wikipedia-fr", "Pile-PhilPapers")

dataset = load_dataset("OpenLLM-France/Lucie-Training-Dataset", name, revision="v1.2", **kwargs)

Citation

When using the Lucie Training Dataset, please cite the following paper:

✍ Olivier Gouvert, Julie Hunter, Jérôme Louradour, Evan Dufraisse, Yaya Sy, Pierre-Carl Langlais, Anastasia Stasenko, Laura Rivière, Christophe Cerisara, Jean-Pierre Lorré (2025) Lucie-7B LLM and its training dataset

@misc{openllm2023claire,

title={The Lucie-7B LLM and the Lucie Training Dataset:

open resources for multilingual language generation},

author={Olivier Gouvert and Julie Hunter and Jérôme Louradour and Evan Dufraisse and Yaya Sy and Pierre-Carl Langlais and Anastasia Stasenko and Laura Rivière and Christophe Cerisara and Jean-Pierre Lorré},

year={2025},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

Acknowledgements

The Lucie Training Dataset was created by members of LINAGORA and the OpenLLM-France community, including in alphabetical order: Evan Dufraisse (CEA), Olivier Gouvert (LINAGORA), Julie Hunter (LINAGORA), Pierre-Carl Langlais (OpSci/Pleias), Jean-Pierre Lorré (LINAGORA), Jérôme Louradour (LINAGORA), Michel-Marie Maudet (LINAGORA), Laura Rivière (LINAGORA), and Anastasia Stasenko (OpSci/Pleias).

We thank Rachel Bawden (INRIA), Clément Bénesse (Opsci), Christophe Cérisara (LORIA), Olivier Ferret (CEA), Joël Gombin (Opsci), Ismaïl Harrando (LINAGORA), Jordan Ricker (Opsci), Guokan Shang (MBZUAI), and Yaya Sy (LORIA) for their helpful input.

Data storage and significant parts of the data processing were made possible through the HPC resources from GENCI–IDRIS (Grant 2024-GC011015444).

Contact

[email protected]