Control-LLM-Llama3.1-8B-OpenCoder16

This model is a fine-tuned version of Llama-3.1-8B-Instruct, trained for coding tasks on the OpenCoder SFT dataset.
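A minimal inference sketch follows. It assumes the checkpoint loads through the standard `transformers` Auto classes and ships a Llama-style chat template, neither of which this card confirms; it also assumes `torch`, `transformers`, and `accelerate` (for `device_map="auto"`) are installed. The repository id is the one shown on this page; `build_messages` and `generate` are hypothetical helper names.

```python
# Repository id as listed on this model card.
MODEL_ID = "ControlLLM/Llama-3.1-8B-OpenCoder16-Instruct"


def build_messages(task: str) -> list:
    """Wrap a coding request in the chat-message format used by instruct models."""
    return [{"role": "user", "content": task}]


def generate(task: str, max_new_tokens: int = 256) -> str:
    """Generate a greedy completion for a single coding request.

    ASSUMPTION: the checkpoint is loadable with AutoModelForCausalLM and has a
    chat template; the model card does not state this explicitly.
    """
    # Imported lazily so build_messages stays usable without heavy dependencies.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_ID, torch_dtype=torch.bfloat16, device_map="auto"
    )

    # Render the chat template and tokenize in one step.
    inputs = tokenizer.apply_chat_template(
        build_messages(task),
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    ).to(model.device)

    # Greedy decoding, in line with the "greedy" task names in the evaluation list.
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)

    # Drop the prompt tokens and decode only the newly generated part.
    new_tokens = output_ids[0, inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate("Write a Python function that reverses a linked list.")` downloads the weights on first use, so expect a multi-gigabyte transfer and a GPU with enough memory for an 8B model in bfloat16.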

Linked Paper

This model is associated with the paper: Control-LLM.

Linked Open Source code - training, eval and benchmark

This model is associated with the GitHub repository: Control-LLM.

Evaluation Results

Here is an overview of the evaluation results and findings:

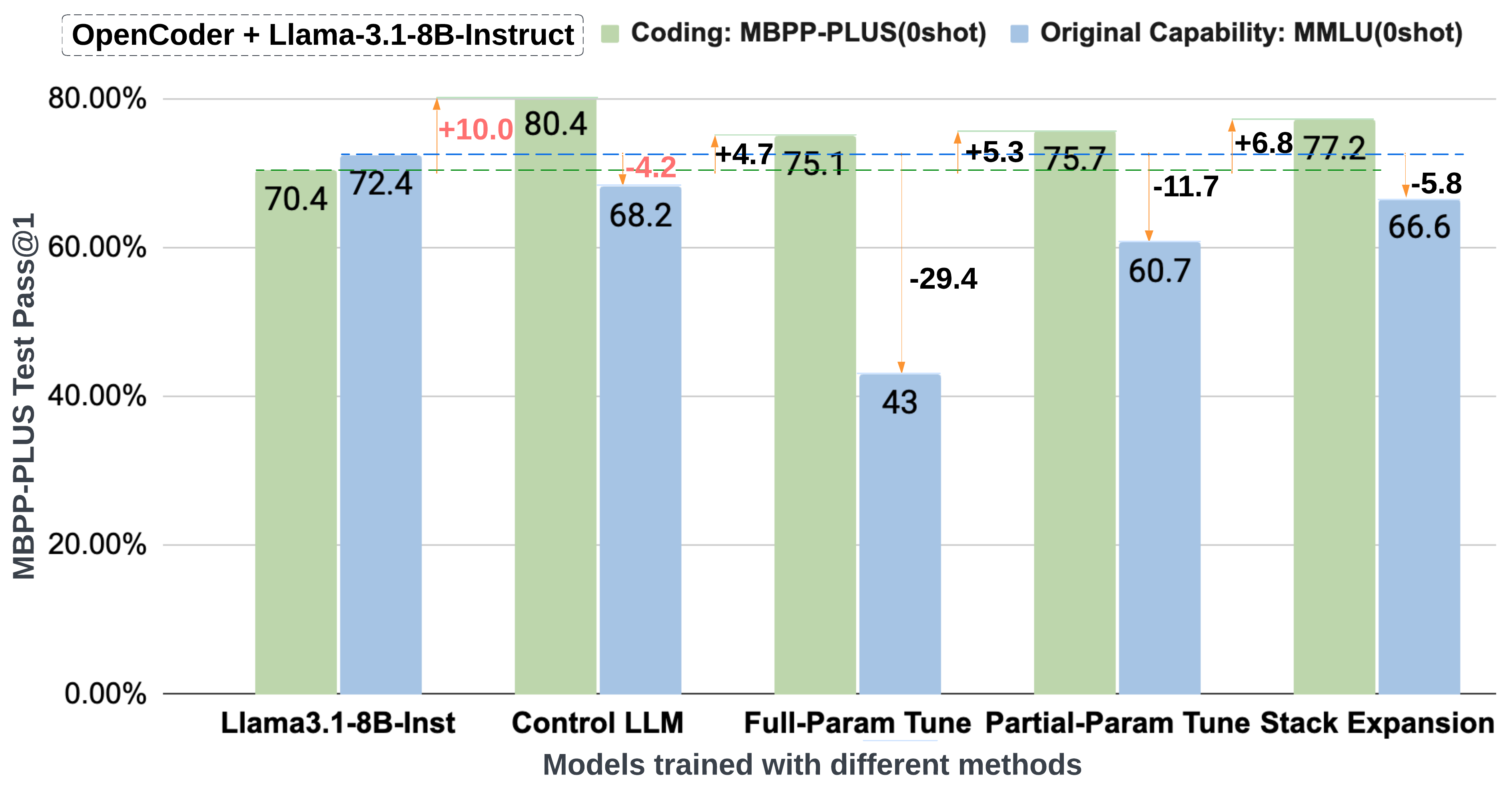

Benchmark Results and Catastrophic Forgetting on OpenCoder

The following plot illustrates benchmark results and catastrophic forgetting mitigation on the OpenCoder SFT dataset.

Benchmark Results Table

The table below summarizes evaluation results across coding tasks and original capabilities.

| Model | MB+ | MS | HE+ | HE | C-Avg | ARC | GP | MLU | MLUP | O-Avg | Overall |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Llama3.1-8B-Ins | 70.4 | 67.7 | 66.5 | 70.7 | 69.1 | 83.4 | 29.9 | 72.4 | 46.7 | 60.5 | 64.8 |
| OpenCoder-8B-Ins | 81.2 | 76.3 | 78.0 | 82.3 | 79.5 | 8.2 | 25.4 | 37.4 | 11.3 | 24.6 | 52.1 |
| Full Param Tune | 75.1 | 69.6 | 71.3 | 76.8 | 73.3 | 24.4 | 21.9 | 43.0 | 19.2 | 31.5 | 52.4 |
| Partial Param Tune | 75.7 | 71.6 | 74.4 | 79.3 | 75.0 | 70.2 | 28.1 | 60.7 | 32.4 | 48.3 | 61.7 |
| Stack Expansion | 77.2 | 72.8 | 73.2 | 78.7 | 75.6 | 80.0 | 26.3 | 66.6 | 38.2 | 54.2 | 64.9 |
| Hybrid Expansion* | 77.5 | 73.5 | 76.2 | 82.3 | 77.1 | 80.9 | 32.6 | 68.1 | 40.3 | 56.0 | 66.6 |
| Control LLM* | 80.4 | 75.9 | 74.4 | 81.1 | 78.3 | 82.5 | 29.7 | 68.2 | 40.9 | 56.3 | 67.3 |
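One way to read the table for catastrophic forgetting is to compare each row with the Llama3.1-8B-Ins row: the change in C-Avg shows the coding gain, and the change in O-Avg shows how much original capability was lost. The sketch below does only that arithmetic on values copied from the table; the function name `deltas_vs_baseline` is illustrative and not part of the Control-LLM code.

```python
# (C-Avg, O-Avg) pairs copied from the benchmark table.
RESULTS = {
    "Llama3.1-8B-Ins": (69.1, 60.5),
    "OpenCoder-8B-Ins": (79.5, 24.6),
    "Full Param Tune": (73.3, 31.5),
    "Partial Param Tune": (75.0, 48.3),
    "Stack Expansion": (75.6, 54.2),
    "Hybrid Expansion*": (77.1, 56.0),
    "Control LLM*": (78.3, 56.3),
}
BASELINE = "Llama3.1-8B-Ins"


def deltas_vs_baseline(results, baseline):
    """Return {model: (coding delta, original-capability delta)} in points."""
    base_coding, base_original = results[baseline]
    return {
        name: (round(coding - base_coding, 1), round(original - base_original, 1))
        for name, (coding, original) in results.items()
        if name != baseline
    }


for name, (d_coding, d_original) in deltas_vs_baseline(RESULTS, BASELINE).items():
    print(f"{name:<20} coding {d_coding:+5.1f}   original {d_original:+6.1f}")
```

On these numbers, Full Param Tune gains 4.2 points on coding while losing 29.0 points of original capability, whereas the Control LLM row gains 9.2 and loses 4.2.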

Explanation:

- MB+: MBPP Plus

- MS: MBPP Sanitized

- HE+: HumanEval Plus

- HE: HumanEval

- C-Avg: Coding average, weighted by benchmark size, across MB+, MS, HE+, and HE

- ARC: ARC benchmark

- GP: GPQA benchmark

- MLU: MMLU (Massive Multitask Language Understanding)

- MLUP: MMLU Pro

- O-Avg: Original-capability average, weighted by benchmark size, across ARC, GPQA, MMLU, and MMLU Pro

- Overall: Combined average across all tasks
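The size-weighted averages can be sketched as follows. The problem counts are assumptions, not values given in this card (378 for MBPP Plus, 257 for the MBPP Sanitized test split, 164 each for HumanEval and HumanEval Plus); with those counts the computation matches the tabulated C-Avg for the two rows shown. O-Avg would follow the same formula with the sizes of the four original-capability benchmarks, which are not guessed here. The Overall column is consistent, within rounding, with the plain mean of C-Avg and O-Avg in every row of the table.

```python
def size_weighted_average(scores, sizes):
    """Average benchmark scores, weighting each by its number of problems."""
    total = sum(sizes[name] for name in scores)
    return sum(scores[name] * sizes[name] for name in scores) / total


# ASSUMED problem counts per benchmark; the model card does not list them.
CODING_SIZES = {"MB+": 378, "MS": 257, "HE+": 164, "HE": 164}

# Per-benchmark scores copied from the table.
llama_base = {"MB+": 70.4, "MS": 67.7, "HE+": 66.5, "HE": 70.7}
control_llm = {"MB+": 80.4, "MS": 75.9, "HE+": 74.4, "HE": 81.1}

print(round(size_weighted_average(llama_base, CODING_SIZES), 1))   # 69.1
print(round(size_weighted_average(control_llm, CODING_SIZES), 1))  # 78.3

# Overall as the mean of the two group averages (Control LLM row).
print(round((78.3 + 56.3) / 2, 1))  # 67.3
```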


Model tree for ControlLLM/Llama-3.1-8B-OpenCoder16-Instruct

- Base model: meta-llama/Llama-3.1-8B

- Fine-tuned from: meta-llama/Llama-3.1-8B-Instruct


Evaluation results

- pass_at_1,n=1 (code_instruct) on Code Evaluation Dataset (self-reported): 0.784

- pass_at_1,n=1 (humaneval_greedy_instruct) on Code Evaluation Dataset (self-reported): 0.817

- pass_at_1,n=1 (humaneval_plus_greedy_instruct) on Code Evaluation Dataset (self-reported): 0.744

- pass_at_1,n=1 (mbpp_plus_0shot_instruct) on Code Evaluation Dataset (self-reported): 0.804

- pass_at_1,n=1 (mbpp_sanitized_0shot_instruct) on Code Evaluation Dataset (self-reported): 0.759

- exact_match,strict-match (original_capability_instruct) on Llama-3.1-8B-Instruct-evals Dataset (self-reported): 0.563

- exact_match,strict-match (meta_arc_0shot_instruct) on Llama-3.1-8B-Instruct-evals Dataset (self-reported): 0.825

- exact_match,strict-match (meta_gpqa_0shot_cot_instruct) on Llama-3.1-8B-Instruct-evals Dataset (self-reported): 0.297

- exact_match,strict-match (meta_mmlu_0shot_instruct) on Llama-3.1-8B-Instruct-evals Dataset (self-reported): 0.682

- exact_match,strict-match (meta_mmlu_pro_5shot_instruct) on Llama-3.1-8B-Instruct-evals Dataset (self-reported): 0.409
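For readers unfamiliar with the `pass_at_1,n=1` metric name, the sketch below shows the widely used unbiased pass@k estimator and how it reduces to a plain pass rate when one sample is drawn per problem. This is an illustration of the general metric, not the evaluation harness used for this model, whose implementation the card does not describe; `pass_at_k` and `benchmark_pass_at_1` are hypothetical names.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples drawn, c of them correct."""
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Every size-k subset of samples contains at least one correct sample.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_1(passed: list) -> float:
    """With n=1 and k=1, pass@1 is the fraction of problems whose single sample passed."""
    return sum(pass_at_k(1, int(ok), 1) for ok in passed) / len(passed)


# Toy data: four problems, three solved by the single greedy sample.
print(benchmark_pass_at_1([True, False, True, True]))  # 0.75
```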