metadata

base_model:

- mistralai/Mixtral-8x7B-Instruct-v0.1

library_name: transformers

tags:

- mergekit

- merge

license: apache-2.0

language:

- fr

- it

- de

- es

- en

Mixtral-8x7B-Instruct-v0.1-upscaled

This is a frankenmerge of mistralai/Mixtral-8x7B-Instruct-v0.1, created with mergekit by interleaving overlapping slices of the model's own layers.

Benchmark

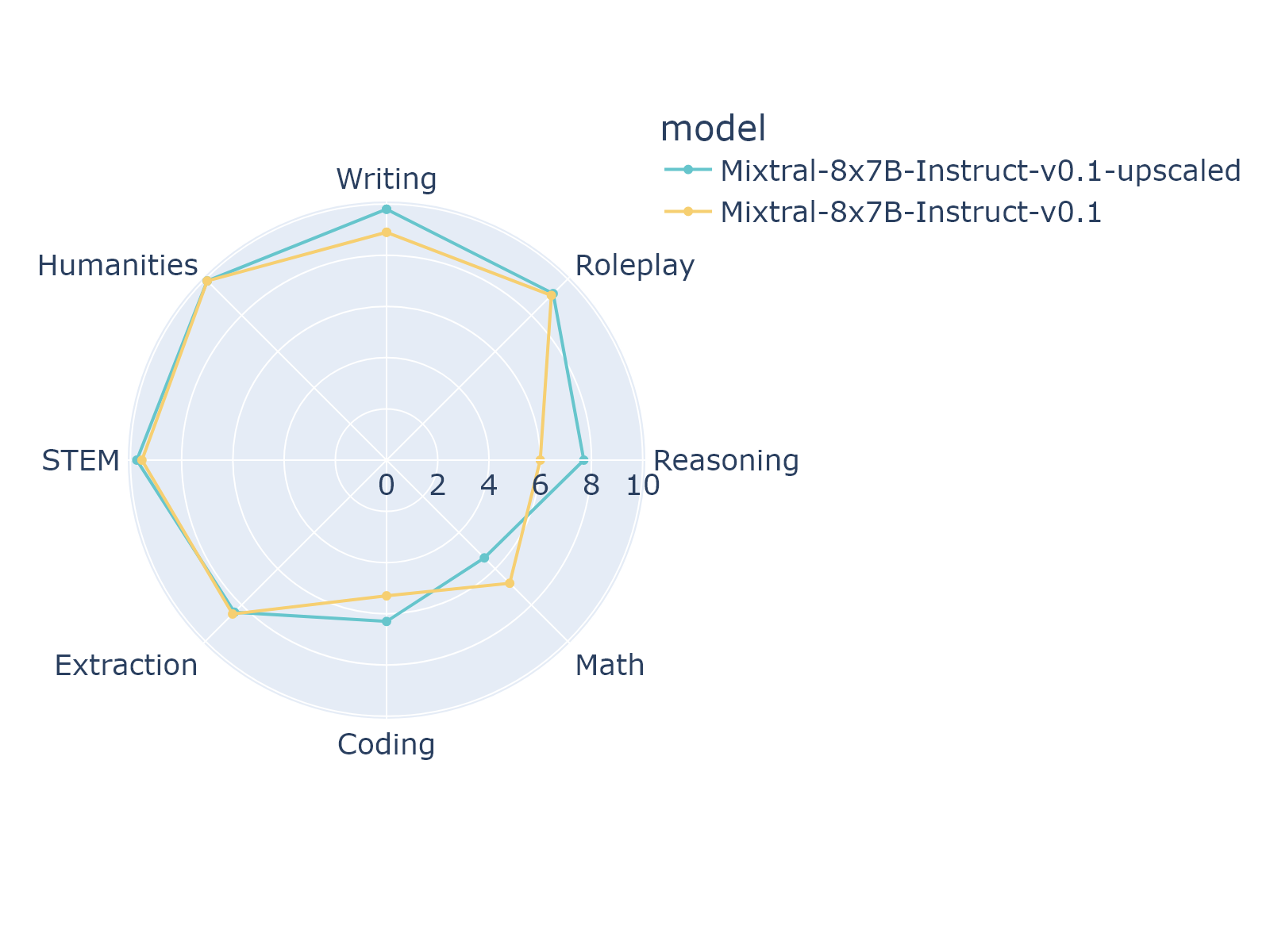

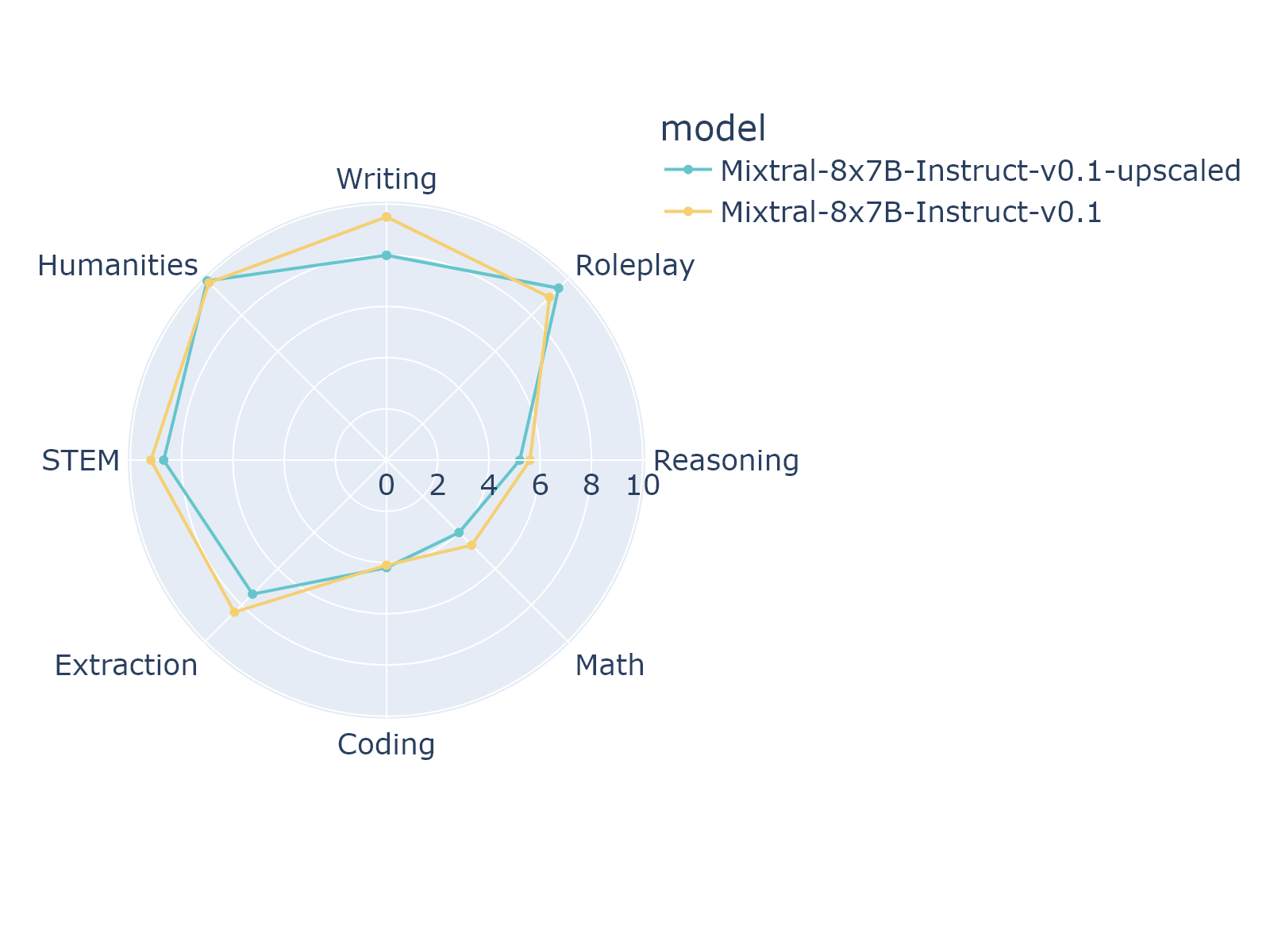

The MT-Bench scores for this model and the original model are as follows:

1-turn

2-turn

Merge Details

Merge Method

This model was merged using the passthrough merge method.
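To make the idea concrete, here is a minimal sketch of what a passthrough-style merge does to a stack of layers. It assumes the method simply copies the selected layers unchanged and concatenates them in order. The function name `passthrough_stack` and the toy layer labels are hypothetical, and this is not mergekit's actual code.

```python
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def passthrough_stack(layers: Sequence[T], ranges: Sequence[Tuple[int, int]]) -> List[T]:
    """Concatenate half-open [start, end) slices of `layers` without modifying them."""
    stacked: List[T] = []
    for start, end in ranges:
        # Layers are copied as-is; no averaging or interpolation of weights happens.
        stacked.extend(layers[start:end])
    return stacked


# Toy example: a 6-layer "model" and two overlapping slices.
toy_layers = ["L0", "L1", "L2", "L3", "L4", "L5"]
toy_ranges = [(0, 4), (2, 6)]
print(passthrough_stack(toy_layers, toy_ranges))
# ['L0', 'L1', 'L2', 'L3', 'L2', 'L3', 'L4', 'L5']
```

Layers that fall inside the overlap (`L2` and `L3` here) appear twice in the output, which is how the merged model ends up deeper than the original.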

Models Merged

The following models were included in the merge:

- mistralai/Mixtral-8x7B-Instruct-v0.1

Configuration

The following YAML configuration was used to produce this model:

merge_method: passthrough
slices:
  - sources:
      - model: mistralai/Mixtral-8x7B-Instruct-v0.1
        layer_range: [0, 8]
  - sources:
      - model: mistralai/Mixtral-8x7B-Instruct-v0.1
        layer_range: [4, 12]
  - sources:
      - model: mistralai/Mixtral-8x7B-Instruct-v0.1
        layer_range: [8, 16]
  - sources:
      - model: mistralai/Mixtral-8x7B-Instruct-v0.1
        layer_range: [12, 20]
  - sources:
      - model: mistralai/Mixtral-8x7B-Instruct-v0.1
        layer_range: [16, 24]
  - sources:
      - model: mistralai/Mixtral-8x7B-Instruct-v0.1
        layer_range: [20, 28]
  - sources:
      - model: mistralai/Mixtral-8x7B-Instruct-v0.1
        layer_range: [24, 32]
dtype: bfloat16
tokenizer_source: base
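The slice pattern in the configuration above is a sliding window of 8 layers moved in steps of 4. The sketch below regenerates those ranges and counts the resulting depth. It assumes that each `layer_range` is half-open (`[start, end)`) and that the base model has 32 decoder layers, which matches the end of the last range. The names `sliding_ranges`, `WINDOW`, and `STRIDE` are illustrative and not part of mergekit.

```python
from collections import Counter

NUM_LAYERS = 32  # assumed decoder depth of the base model (end of the last range)
WINDOW = 8       # layers per slice
STRIDE = 4       # offset between consecutive slices


def sliding_ranges(num_layers: int, window: int, stride: int) -> list:
    """Return half-open (start, end) ranges covering the model with overlap."""
    return [(s, s + window) for s in range(0, num_layers - window + 1, stride)]


ranges = sliding_ranges(NUM_LAYERS, WINDOW, STRIDE)

# Count how often each original layer index appears in the merged stack.
usage = Counter(i for start, end in ranges for i in range(start, end))
total_layers = sum(usage.values())
once = sorted(i for i, n in usage.items() if n == 1)

print(ranges)        # seven ranges, from (0, 8) to (24, 32)
print(total_layers)  # 56
print(once)          # [0, 1, 2, 3, 28, 29, 30, 31]
```

Under these assumptions the merged model has 56 decoder layers instead of 32: the first four and last four layers appear once, and every layer in between appears twice. The parameter count grows by less than the 56/32 ratio, since the embeddings and output head are not duplicated.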