Spaces:

Running

Running

upload

Browse files- __pycache__/backend.cpython-37.pyc +0 -0

- app.py +48 -48

- download/20230719163556/20230719163556.zip +3 -0

- download/20230719163556/input/1512.03385.tar.gz +3 -0

- download/20230719163556/input/1706.03762.tar.gz +3 -0

- download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-19.png +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-20.png +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-21.png +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-22.png +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-23.png +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-32.png +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/background.tex +58 -0

- download/20230719163556/output/Attention-Is-All-You-Need/introduction.tex +18 -0

- download/20230719163556/output/Attention-Is-All-You-Need/model_architecture.tex +155 -0

- download/20230719163556/output/Attention-Is-All-You-Need/ms.tex +413 -0

- download/20230719163556/output/Attention-Is-All-You-Need/nips_2017.sty +338 -0

- download/20230719163556/output/Attention-Is-All-You-Need/parameter_attention.tex +45 -0

- download/20230719163556/output/Attention-Is-All-You-Need/results.tex +166 -0

- download/20230719163556/output/Attention-Is-All-You-Need/sqrt_d_trick.tex +28 -0

- download/20230719163556/output/Attention-Is-All-You-Need/training.tex +42 -0

- download/20230719163556/output/Attention-Is-All-You-Need/vis/anaphora_resolution2_new.pdf +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/vis/anaphora_resolution_new.pdf +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/vis/attending_to_head2_new.pdf +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/vis/attending_to_head_new.pdf +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/vis/making_more_difficult5_new.pdf +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/vis/making_more_difficult_new.pdf +0 -0

- download/20230719163556/output/Attention-Is-All-You-Need/visualizations.tex +18 -0

- download/20230719163556/output/Attention-Is-All-You-Need/why_self_attention.tex +98 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/cvpr.sty +249 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/cvpr_eso.sty +109 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/eps/arch.pdf +0 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/eps/block.pdf +0 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/eps/block_deeper.pdf +0 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/eps/cifar.pdf +0 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/eps/imagenet.pdf +0 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/eps/std.pdf +0 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/eps/teaser.pdf +0 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/eso-pic.sty +267 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/ieee.bst +1129 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/residual_v1_arxiv_release.bbl +273 -0

- download/20230719163556/output/Deep-Residual-Learning-for-Image-Recognition/residual_v1_arxiv_release.tex +909 -0

__pycache__/backend.cpython-37.pyc

ADDED

|

Binary file (2.61 kB). View file

|

|

|

app.py

CHANGED

|

@@ -1,4 +1,4 @@

|

|

| 1 |

-

import imp

|

| 2 |

import streamlit as st

|

| 3 |

import pandas as pd

|

| 4 |

import numpy as np

|

|

@@ -41,51 +41,51 @@ if crawling_or_not:

|

|

| 41 |

# cleaning the pdf lists

|

| 42 |

pdf_lists = [i.strip() for i in pdf_lists if len(i) > 0]

|

| 43 |

# TODO: limit the number of paper up to 10 since I am not sure that whether base64 support large file download

|

| 44 |

-

try:

|

| 45 |

-

|

| 46 |

-

|

| 47 |

-

|

| 48 |

-

|

| 49 |

-

|

| 50 |

-

|

| 51 |

-

|

| 52 |

-

|

| 53 |

-

|

| 54 |

-

|

| 55 |

-

|

| 56 |

-

|

| 57 |

-

|

| 58 |

-

|

| 59 |

-

|

| 60 |

-

|

| 61 |

-

|

| 62 |

-

|

| 63 |

-

|

| 64 |

-

|

| 65 |

-

|

| 66 |

-

|

| 67 |

-

|

| 68 |

-

|

| 69 |

-

|

| 70 |

-

|

| 71 |

-

|

| 72 |

-

|

| 73 |

-

|

| 74 |

-

|

| 75 |

-

# finish one paper

|

| 76 |

-

bar.progress((i+1)/N)

|

| 77 |

-

download_status.text(f"Iteration [{i+1}/{N}]: Finish Downloading of "+title)

|

| 78 |

-

|

| 79 |

-

with st.spinner("Archiving as Zip Files..."):

|

| 80 |

-

# save it as zip file

|

| 81 |

-

filepath = archive_dir(out,os.path.join(base,project_name))

|

| 82 |

-

|

| 83 |

-

# download

|

| 84 |

-

b64 = ToBase64(filepath).decode()

|

| 85 |

-

href = f"<a href='data:file/csv;base64,{b64}' download='arxiv2latex-output-{datetime.datetime.now()}.zip' color='red'>Click here to Download the Output Latex Zip Files</a>"

|

| 86 |

-

st.markdown(href, unsafe_allow_html=True)

|

| 87 |

|

| 88 |

-

|

| 89 |

-

|

| 90 |

-

|

| 91 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# import imp

|

| 2 |

import streamlit as st

|

| 3 |

import pandas as pd

|

| 4 |

import numpy as np

|

|

|

|

| 41 |

# cleaning the pdf lists

|

| 42 |

pdf_lists = [i.strip() for i in pdf_lists if len(i) > 0]

|

| 43 |

# TODO: limit the number of paper up to 10 since I am not sure that whether base64 support large file download

|

| 44 |

+

# try:

|

| 45 |

+

if len(pdf_lists) > predefined_limits:

|

| 46 |

+

st.warning(f"Currently only support up to {predefined_limits} papers. Please input less than {predefined_limits} papers.")

|

| 47 |

+

else:

|

| 48 |

+

# parsing

|

| 49 |

+

base='./download/'

|

| 50 |

+

project_name = get_timestamp().replace(" ","-")

|

| 51 |

+

base = os.path.join(base,project_name)

|

| 52 |

+

make_dir_if_not_exist(base)

|

| 53 |

+

|

| 54 |

+

# st.write(download_status)

|

| 55 |

+

with st.spinner("Downloading papers..."):

|

| 56 |

+

# progress bar

|

| 57 |

+

bar = st.progress(0)

|

| 58 |

+

download_status = st.empty()

|

| 59 |

+

N = len(pdf_lists)

|

| 60 |

+

for i, pdf_link in tqdm(enumerate(pdf_lists)):

|

| 61 |

+

title = get_name_from_arvix(pdf_link)

|

| 62 |

+

file_stamp = pdf_link.split("/")[-1]

|

| 63 |

+

source_link = "https://arxiv.org/e-print/"+file_stamp

|

| 64 |

+

inp = os.path.join(base,'input')

|

| 65 |

+

make_dir_if_not_exist(inp)

|

| 66 |

+

out = os.path.join(base,'output')

|

| 67 |

+

make_dir_if_not_exist(out)

|

| 68 |

+

response = requests.get(source_link)

|

| 69 |

+

filename = file_stamp+".tar.gz"

|

| 70 |

+

filepath = os.path.join(inp,filename)

|

| 71 |

+

open(filepath, "wb").write(response.content)

|

| 72 |

+

outpath = os.path.join(out,title)

|

| 73 |

+

untar(filepath,outpath)

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 74 |

|

| 75 |

+

# finish one paper

|

| 76 |

+

bar.progress((i+1)/N)

|

| 77 |

+

download_status.text(f"Iteration [{i+1}/{N}]: Finish Downloading of "+title)

|

| 78 |

+

|

| 79 |

+

with st.spinner("Archiving as Zip Files..."):

|

| 80 |

+

# save it as zip file

|

| 81 |

+

filepath = archive_dir(out,os.path.join(base,project_name))

|

| 82 |

+

|

| 83 |

+

# download

|

| 84 |

+

b64 = ToBase64(filepath).decode()

|

| 85 |

+

href = f"<a href='data:file/csv;base64,{b64}' download='arxiv2latex-output-{datetime.datetime.now()}.zip' color='red'>Click here to Download the Output Latex Zip Files</a>"

|

| 86 |

+

st.markdown(href, unsafe_allow_html=True)

|

| 87 |

+

|

| 88 |

+

# 状态

|

| 89 |

+

st.success("Finished")

|

| 90 |

+

# except Exception as e:

|

| 91 |

+

# st.error("Something goes wrong. Please check the input or concat me to fix this bug. Error message: \n"+str(e))

|

download/20230719163556/20230719163556.zip

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b8c08fed6381f25599036729607317c42b5c1a23ffc648cd0d43e3087819c4ef

|

| 3 |

+

size 1719685

|

download/20230719163556/input/1512.03385.tar.gz

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a975e8b798fb4c447551bffd4e74a3abf169b151e4435390ab6d16bf88cef880

|

| 3 |

+

size 506177

|

download/20230719163556/input/1706.03762.tar.gz

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2500348e730741b9a8eafcac0301f7e3f0b1c670f9e08ce62e6c45a36a64cf0a

|

| 3 |

+

size 1150961

|

download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-19.png

ADDED

|

download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-20.png

ADDED

|

download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-21.png

ADDED

|

download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-22.png

ADDED

|

download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-23.png

ADDED

|

download/20230719163556/output/Attention-Is-All-You-Need/Figures/ModalNet-32.png

ADDED

|

download/20230719163556/output/Attention-Is-All-You-Need/background.tex

ADDED

|

@@ -0,0 +1,58 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

The goal of reducing sequential computation also forms the foundation of the Extended Neural GPU \citep{extendedngpu}, ByteNet \citep{NalBytenet2017} and ConvS2S \citep{JonasFaceNet2017}, all of which use convolutional neural networks as basic building block, computing hidden representations in parallel for all input and output positions. In these models, the number of operations required to relate signals from two arbitrary input or output positions grows in the distance between positions, linearly for ConvS2S and logarithmically for ByteNet. This makes it more difficult to learn dependencies between distant positions \citep{hochreiter2001gradient}. In the Transformer this is reduced to a constant number of operations, albeit at the cost of reduced effective resolution due to averaging attention-weighted positions, an effect we counteract with Multi-Head Attention as described in section~\ref{sec:attention}.

|

| 2 |

+

|

| 3 |

+

Self-attention, sometimes called intra-attention is an attention mechanism relating different positions of a single sequence in order to compute a representation of the sequence. Self-attention has been used successfully in a variety of tasks including reading comprehension, abstractive summarization, textual entailment and learning task-independent sentence representations \citep{cheng2016long, decomposableAttnModel, paulus2017deep, lin2017structured}.

|

| 4 |

+

|

| 5 |

+

End-to-end memory networks are based on a recurrent attention mechanism instead of sequence-aligned recurrence and have been shown to perform well on simple-language question answering and language modeling tasks \citep{sukhbaatar2015}.

|

| 6 |

+

|

| 7 |

+

To the best of our knowledge, however, the Transformer is the first transduction model relying entirely on self-attention to compute representations of its input and output without using sequence-aligned RNNs or convolution.

|

| 8 |

+

In the following sections, we will describe the Transformer, motivate self-attention and discuss its advantages over models such as \citep{neural_gpu, NalBytenet2017} and \citep{JonasFaceNet2017}.

|

| 9 |

+

|

| 10 |

+

|

| 11 |

+

%\citep{JonasFaceNet2017} report new SOTA on machine translation for English-to-German (EnDe), Enlish-to-French (EnFr) and English-to-Romanian language pairs.

|

| 12 |

+

|

| 13 |

+

%For example,! in MT, we must draw information from both input and previous output words to translate an output word accurately. An attention layer \citep{bahdanau2014neural} can connect a very large number of positions at low computation cost, making it an essential ingredient in competitive recurrent models for machine translation.

|

| 14 |

+

|

| 15 |

+

%A natural question to ask then is, "Could we replace recurrence with attention?". \marginpar{Don't know if it's the most natural question to ask given the previous statements. Also, need to say that the complexity table summarizes these statements} Such a model would be blessed with the computational efficiency of attention and the power of cross-positional communication. In this work, show that pure attention models work remarkably well for MT, achieving new SOTA results on EnDe and EnFr, and can be trained in under $2$ days on xyz architecture.

|

| 16 |

+

|

| 17 |

+

%After the seminal models introduced in \citep{sutskever14, bahdanau2014neural, cho2014learning}, recurrent models have become the dominant solution for both sequence modeling and sequence-to-sequence transduction. Many efforts such as \citep{wu2016google,luong2015effective,jozefowicz2016exploring} have pushed the boundaries of machine translation (MT) and language modeling with recurrent endoder-decoder and recurrent language models. Recent effort \citep{shazeer2017outrageously} has successfully combined the power of conditional computation with sequence models to train very large models for MT, pushing SOTA at lower computational cost.

|

| 18 |

+

|

| 19 |

+

%Recurrent models compute a vector of hidden states $h_t$, for each time step $t$ of computation. $h_t$ is a function of both the input at time $t$ and the previous hidden state $h_t$. This dependence on the previous hidden state precludes processing all timesteps at once, instead requiring long sequences of sequential operations. In practice, this results in greatly reduced computational efficiency, as on modern computing hardware, a single operation on a large batch is much faster than a large number of operations on small batches. The problem gets worse at longer sequence lengths. Although sequential computation is not a severe bottleneck at inference time, as autoregressively generating each output requires all previous outputs, the inability to compute scores at all output positions at once hinders us from rapidly training our models over large datasets. Although impressive work such as \citep{Kuchaiev2017Factorization} is able to significantly accelerate the training of LSTMs with factorization tricks, we are still bound by the linear dependence on sequence length.

|

| 20 |

+

|

| 21 |

+

%If the model could compute hidden states at each time step using only the inputs and outputs, it would be liberated from the dependence on results from previous time steps during training. This line of thought is the foundation of recent efforts such as the Markovian neural GPU \citep{neural_gpu}, ByteNet \citep{NalBytenet2017} and ConvS2S \citep{JonasFaceNet2017}, all of which use convolutional neural networks as a building block to compute hidden representations simultaneously for all timesteps, resulting in $O(1)$ sequential time complexity. \citep{JonasFaceNet2017} report new SOTA on machine translation for English-to-German (EnDe), Enlish-to-French (EnFr) and English-to-Romanian language pairs.

|

| 22 |

+

|

| 23 |

+

%A crucial component for accurate sequence prediction is modeling cross-positional communication. For example, in MT, we must draw information from both input and previous output words to translate an output word accurately. An attention layer \citep{bahdanau2014neural} can connect a very large number of positions at a low computation cost, also $O(1)$ sequential time complexity, making it an essential ingredient in recurrent encoder-decoder architectures for MT. A natural question to ask then is, "Could we replace recurrence with attention?". \marginpar{Don't know if it's the most natural question to ask given the previous statements. Also, need to say that the complexity table summarizes these statements} Such a model would be blessed with the computational efficiency of attention and the power of cross-positional communication. In this work, show that pure attention models work remarkably well for MT, achieving new SOTA results on EnDe and EnFr, and can be trained in under $2$ days on xyz architecture.

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

%Note: Facebook model is no better than RNNs in this regard, since it requires a number of layers proportional to the distance you want to communicate. Bytenet is more promising, since it requires a logarithmnic number of layers (does bytenet have SOTA results)?

|

| 28 |

+

|

| 29 |

+

%Note: An attention layer can connect a very large number of positions at a low computation cost in O(1) sequential operations. This is why encoder-decoder attention has been so successful in seq-to-seq models so far. It is only natural, then, to also use attention to connect the timesteps of the same sequence.

|

| 30 |

+

|

| 31 |

+

%Note: I wouldn't say that long sequences are not a problem during inference. It would be great if we could infer with no long sequences. We could just say later on that, while our training graph is constant-depth, our model still requires sequential operations in the decoder part during inference due to the autoregressive nature of the model.

|

| 32 |

+

|

| 33 |

+

%\begin{table}[h!]

|

| 34 |

+

%\caption{Attention models are quite efficient for cross-positional communications when sequence length is smaller than channel depth. $n$ represents the sequence length and $d$ represents the channel depth.}

|

| 35 |

+

%\label{tab:op_complexities}

|

| 36 |

+

%\begin{center}

|

| 37 |

+

%\vspace{-5pt}

|

| 38 |

+

%\scalebox{0.75}{

|

| 39 |

+

|

| 40 |

+

%\begin{tabular}{l|c|c|c}

|

| 41 |

+

%\hline \hline

|

| 42 |

+

%Layer Type & Receptive & Complexity & Sequential \\

|

| 43 |

+

% & Field & & Operations \\

|

| 44 |

+

%\hline

|

| 45 |

+

%Pointwise Feed-Forward & $1$ & $O(n \cdot d^2)$ & $O(1)$ \\

|

| 46 |

+

%\hline

|

| 47 |

+

%Recurrent & $n$ & $O(n \cdot d^2)$ & $O(n)$ \\

|

| 48 |

+

%\hline

|

| 49 |

+

%Convolutional & $r$ & $O(r \cdot n \cdot d^2)$ & $O(1)$ \\

|

| 50 |

+

%\hline

|

| 51 |

+

%Convolutional (separable) & $r$ & $O(r \cdot n \cdot d + n %\cdot d^2)$ & $O(1)$ \\

|

| 52 |

+

%\hline

|

| 53 |

+

%Attention & $r$ & $O(r \cdot n \cdot d)$ & $O(1)$ \\

|

| 54 |

+

%\hline \hline

|

| 55 |

+

%\end{tabular}

|

| 56 |

+

%}

|

| 57 |

+

%\end{center}

|

| 58 |

+

%\end{table}

|

download/20230719163556/output/Attention-Is-All-You-Need/introduction.tex

ADDED

|

@@ -0,0 +1,18 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Recurrent neural networks, long short-term memory \citep{hochreiter1997} and gated recurrent \citep{gruEval14} neural networks in particular, have been firmly established as state of the art approaches in sequence modeling and transduction problems such as language modeling and machine translation \citep{sutskever14, bahdanau2014neural, cho2014learning}. Numerous efforts have since continued to push the boundaries of recurrent language models and encoder-decoder architectures \citep{wu2016google,luong2015effective,jozefowicz2016exploring}.

|

| 2 |

+

|

| 3 |

+

Recurrent models typically factor computation along the symbol positions of the input and output sequences. Aligning the positions to steps in computation time, they generate a sequence of hidden states $h_t$, as a function of the previous hidden state $h_{t-1}$ and the input for position $t$. This inherently sequential nature precludes parallelization within training examples, which becomes critical at longer sequence lengths, as memory constraints limit batching across examples.

|

| 4 |

+

%\marginpar{not sure if the memory constraints are understandable here}

|

| 5 |

+

Recent work has achieved significant improvements in computational efficiency through factorization tricks \citep{Kuchaiev2017Factorization} and conditional computation \citep{shazeer2017outrageously}, while also improving model performance in case of the latter. The fundamental constraint of sequential computation, however, remains.

|

| 6 |

+

|

| 7 |

+

%\marginpar{@all: there is work on analyzing what attention really does in seq2seq models, couldn't find it right away}

|

| 8 |

+

|

| 9 |

+

Attention mechanisms have become an integral part of compelling sequence modeling and transduction models in various tasks, allowing modeling of dependencies without regard to their distance in the input or output sequences \citep{bahdanau2014neural, structuredAttentionNetworks}. In all but a few cases \citep{decomposableAttnModel}, however, such attention mechanisms are used in conjunction with a recurrent network.

|

| 10 |

+

|

| 11 |

+

%\marginpar{not sure if "cross-positional communication" is understandable without explanation}

|

| 12 |

+

%\marginpar{insert exact training times and stats for the model that reaches sota earliest, maybe even a single GPU model?}

|

| 13 |

+

|

| 14 |

+

In this work we propose the Transformer, a model architecture eschewing recurrence and instead relying entirely on an attention mechanism to draw global dependencies between input and output. The Transformer allows for significantly more parallelization and can reach a new state of the art in translation quality after being trained for as little as twelve hours on eight P100 GPUs.

|

| 15 |

+

%\marginpar{you removed the constant number of repetitions part. I wrote it because I wanted to make it clear that the model does not only perform attention once, while it's also not recurrent. I thought that might be important to get across early.}

|

| 16 |

+

|

| 17 |

+

% Just a standard paragraph with citations, rewrite.

|

| 18 |

+

%After the seminal papers of \citep{sutskever14}, \citep{bahdanau2014neural}, and \citep{cho2014learning}, recurrent models have become the dominant solution for both sequence modeling and sequence-to-sequence transduction. Many efforts such as \citep{wu2016google,luong2015effective,jozefowicz2016exploring} have pushed the boundaries of machine translation and language modeling with recurrent sequence models. Recent effort \citep{shazeer2017outrageously} has combined the power of conditional computation with sequence models to train very large models for machine translation, pushing SOTA at lower computational cost. Recurrent models compute a vector of hidden states $h_t$, for each time step $t$ of computation. $h_t$ is a function of both the input at time $t$ and the previous hidden state $h_t$. This dependence on the previous hidden state encumbers recurrnet models to process multiple inputs at once, and their time complexity is a linear function of the length of the input and output, both during training and inference. [What I want to say here is that although this is fine during decoding, at training time, we are given both input and output and this linear nature does not allow the RNN to process all inputs and outputs simultaneously and haven't been used on datasets that are the of the scale of the web. What's the largest dataset we have ? . Talk about Nividia and possibly other's effors to speed up things, and possibly other efforts that alleviate this, but are still limited by it's comptuational nature]. Rest of the intro: What if you could construct the state based on the actual inputs and outputs, then you could construct them all at once. This has been the foundation of many promising recent efforts, bytenet,facenet (Also talk about quasi rnn here). Now we talk about attention!! Along with cell architectures such as long short-term meory (LSTM) \citep{hochreiter1997}, and gated recurrent units (GRUs) \citep{cho2014learning}, attention has emerged as an essential ingredient in successful sequence models, in particular for machine translation. In recent years, many, if not all, state-of-the-art (SOTA) results in machine translation have been achieved with attention-based sequence models \citep{wu2016google,luong2015effective,jozefowicz2016exploring}. Talk about the neon work on how it played with attention to do self attention! Then talk about what we do.

|

download/20230719163556/output/Attention-Is-All-You-Need/model_architecture.tex

ADDED

|

@@ -0,0 +1,155 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

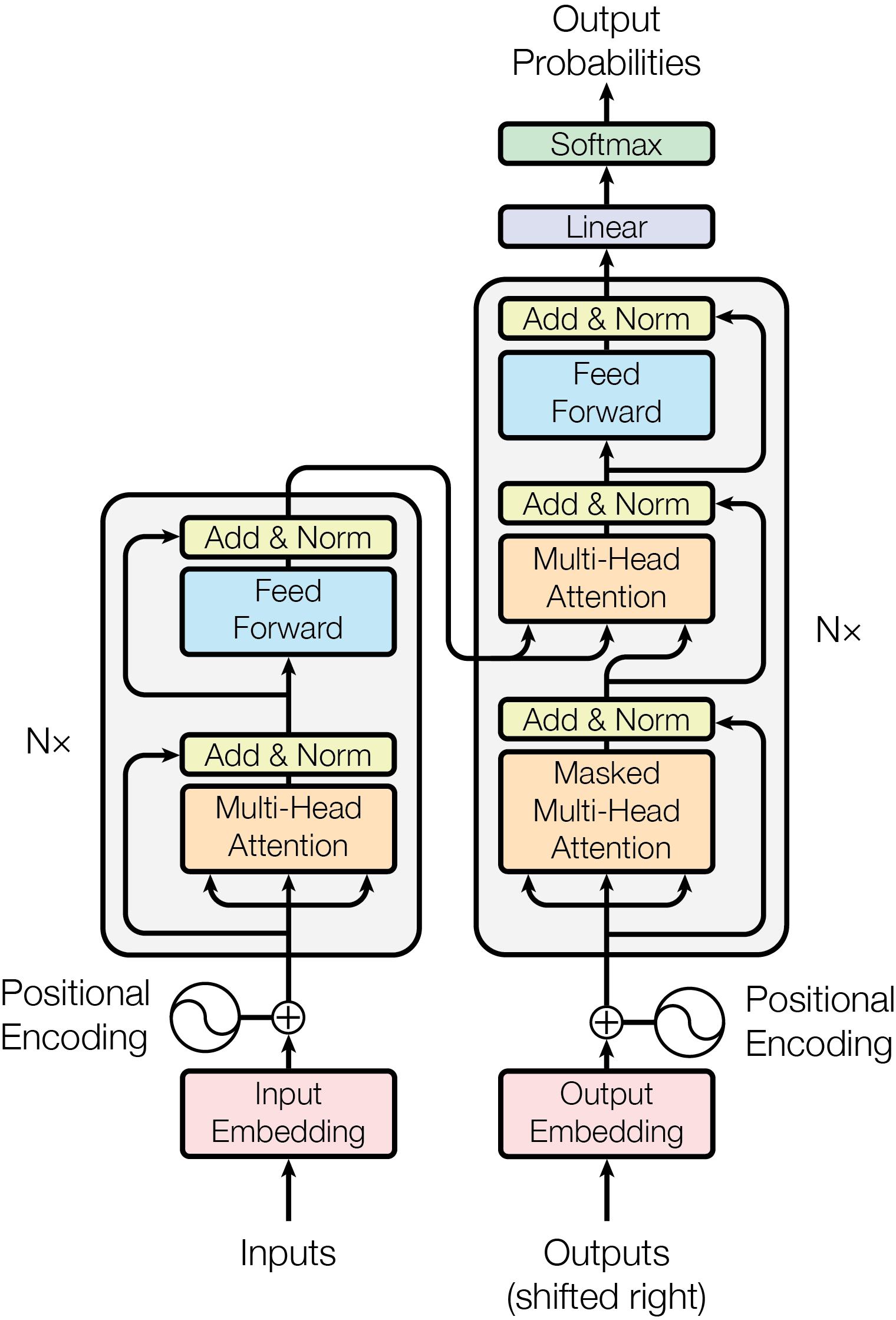

\begin{figure}

|

| 3 |

+

\centering

|

| 4 |

+

\includegraphics[scale=0.6]{Figures/ModalNet-21}

|

| 5 |

+

\caption{The Transformer - model architecture.}

|

| 6 |

+

\label{fig:model-arch}

|

| 7 |

+

\end{figure}

|

| 8 |

+

|

| 9 |

+

% Although the primary workhorse of our model is attention,

|

| 10 |

+

%Our model maintains the encoder-decoder structure that is common to many so-called sequence-to-sequence models \citep{bahdanau2014neural,sutskever14}. As in all such architectures, the encoder computes a representation of the input sequence, and the decoder consumes these representations along with the output tokens to autoregressively produce the output sequence. Where, traditionally, the encoder and decoder contain stacks of recurrent or convolutional layers, our encoder and decoder stacks are composed of attention layers and position-wise feed-forward layers (Figure~\ref{fig:model-arch}). The following sections describe the gross architecture and these particular components in detail.

|

| 11 |

+

|

| 12 |

+

Most competitive neural sequence transduction models have an encoder-decoder structure \citep{cho2014learning,bahdanau2014neural,sutskever14}. Here, the encoder maps an input sequence of symbol representations $(x_1, ..., x_n)$ to a sequence of continuous representations $\mathbf{z} = (z_1, ..., z_n)$. Given $\mathbf{z}$, the decoder then generates an output sequence $(y_1,...,y_m)$ of symbols one element at a time. At each step the model is auto-regressive \citep{graves2013generating}, consuming the previously generated symbols as additional input when generating the next.

|

| 13 |

+

|

| 14 |

+

The Transformer follows this overall architecture using stacked self-attention and point-wise, fully connected layers for both the encoder and decoder, shown in the left and right halves of Figure~\ref{fig:model-arch}, respectively.

|

| 15 |

+

|

| 16 |

+

\subsection{Encoder and Decoder Stacks}

|

| 17 |

+

|

| 18 |

+

\paragraph{Encoder:}The encoder is composed of a stack of $N=6$ identical layers. Each layer has two sub-layers. The first is a multi-head self-attention mechanism, and the second is a simple, position-wise fully connected feed-forward network. We employ a residual connection \citep{he2016deep} around each of the two sub-layers, followed by layer normalization \cite{layernorm2016}. That is, the output of each sub-layer is $\mathrm{LayerNorm}(x + \mathrm{Sublayer}(x))$, where $\mathrm{Sublayer}(x)$ is the function implemented by the sub-layer itself. To facilitate these residual connections, all sub-layers in the model, as well as the embedding layers, produce outputs of dimension $\dmodel=512$.

|

| 19 |

+

|

| 20 |

+

\paragraph{Decoder:}The decoder is also composed of a stack of $N=6$ identical layers. In addition to the two sub-layers in each encoder layer, the decoder inserts a third sub-layer, which performs multi-head attention over the output of the encoder stack. Similar to the encoder, we employ residual connections around each of the sub-layers, followed by layer normalization. We also modify the self-attention sub-layer in the decoder stack to prevent positions from attending to subsequent positions. This masking, combined with fact that the output embeddings are offset by one position, ensures that the predictions for position $i$ can depend only on the known outputs at positions less than $i$.

|

| 21 |

+

|

| 22 |

+

% In our model (Figure~\ref{fig:model-arch}), the encoder and decoder are composed of stacks of alternating self-attention layers (for cross-positional communication) and position-wise feed-forward layers (for in-place computation). In addition, the decoder stack contains encoder-decoder attention layers. Since attention is agnostic to the distances between words, our model requires a "positional encoding" to be added to the encoder and decoder input. The following sections describe all of these components in detail.

|

| 23 |

+

|

| 24 |

+

\subsection{Attention} \label{sec:attention}

|

| 25 |

+

An attention function can be described as mapping a query and a set of key-value pairs to an output, where the query, keys, values, and output are all vectors. The output is computed as a weighted sum of the values, where the weight assigned to each value is computed by a compatibility function of the query with the corresponding key.

|

| 26 |

+

|

| 27 |

+

\subsubsection{Scaled Dot-Product Attention} \label{sec:scaled-dot-prod}

|

| 28 |

+

|

| 29 |

+

% \begin{figure}

|

| 30 |

+

% \centering

|

| 31 |

+

% \includegraphics[scale=0.6]{Figures/ModalNet-19}

|

| 32 |

+

% \caption{Scaled Dot-Product Attention.}

|

| 33 |

+

% \label{fig:multi-head-att}

|

| 34 |

+

% \end{figure}

|

| 35 |

+

|

| 36 |

+

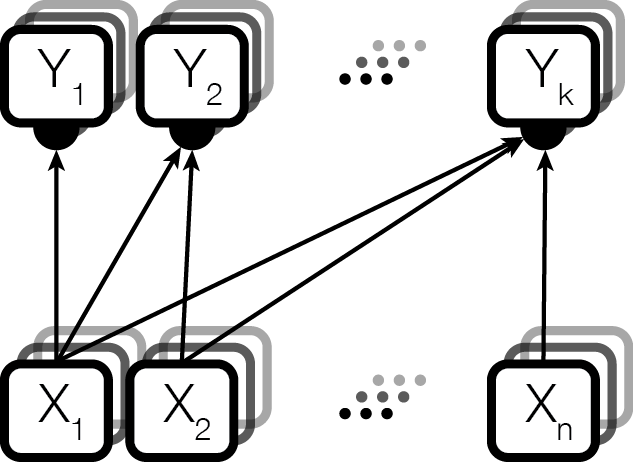

We call our particular attention "Scaled Dot-Product Attention" (Figure~\ref{fig:multi-head-att}). The input consists of queries and keys of dimension $d_k$, and values of dimension $d_v$. We compute the dot products of the query with all keys, divide each by $\sqrt{d_k}$, and apply a softmax function to obtain the weights on the values.

|

| 37 |

+

|

| 38 |

+

In practice, we compute the attention function on a set of queries simultaneously, packed together into a matrix $Q$. The keys and values are also packed together into matrices $K$ and $V$. We compute the matrix of outputs as:

|

| 39 |

+

|

| 40 |

+

\begin{equation}

|

| 41 |

+

\mathrm{Attention}(Q, K, V) = \mathrm{softmax}(\frac{QK^T}{\sqrt{d_k}})V

|

| 42 |

+

\end{equation}

|

| 43 |

+

|

| 44 |

+

The two most commonly used attention functions are additive attention \citep{bahdanau2014neural}, and dot-product (multiplicative) attention. Dot-product attention is identical to our algorithm, except for the scaling factor of $\frac{1}{\sqrt{d_k}}$. Additive attention computes the compatibility function using a feed-forward network with a single hidden layer. While the two are similar in theoretical complexity, dot-product attention is much faster and more space-efficient in practice, since it can be implemented using highly optimized matrix multiplication code.

|

| 45 |

+

|

| 46 |

+

%We scale the dot products by $1/\sqrt{d_k}$ to limit the magnitude of the dot products, which works well in practice. Otherwise, we found applying the softmax to often result in weights very close to 0 or 1, and hence minuscule gradients.

|

| 47 |

+

|

| 48 |

+

% Already described in the subsequent section

|

| 49 |

+

%When used as part of decoder self-attention, an optional mask function is applied just before the softmax to prevent positions from attending to subsequent positions. This mask simply sets the logits corresponding to all illegal connections (those outside of the lower triangle) to $-\infty$.

|

| 50 |

+

|

| 51 |

+

%\paragraph{Comparison to Additive Attention: } We choose dot product attention over additive attention \citep{bahdanau2014neural} since it can be computed using highly optimized matrix multiplication code. This optimization is particularly important to us, as we employ many attention layers in our model.

|

| 52 |

+

|

| 53 |

+

While for small values of $d_k$ the two mechanisms perform similarly, additive attention outperforms dot product attention without scaling for larger values of $d_k$ \citep{DBLP:journals/corr/BritzGLL17}. We suspect that for large values of $d_k$, the dot products grow large in magnitude, pushing the softmax function into regions where it has extremely small gradients \footnote{To illustrate why the dot products get large, assume that the components of $q$ and $k$ are independent random variables with mean $0$ and variance $1$. Then their dot product, $q \cdot k = \sum_{i=1}^{d_k} q_ik_i$, has mean $0$ and variance $d_k$.}. To counteract this effect, we scale the dot products by $\frac{1}{\sqrt{d_k}}$.

|

| 54 |

+

|

| 55 |

+

|

| 56 |

+

%We suspect this to be caused by the dot products growing too large in magnitude to result in useful gradients after applying the softmax function. To counteract this, we scale the dot product by $1/\sqrt{d_k}$.

|

| 57 |

+

|

| 58 |

+

|

| 59 |

+

\subsubsection{Multi-Head Attention} \label{sec:multihead}

|

| 60 |

+

|

| 61 |

+

\begin{figure}

|

| 62 |

+

\begin{minipage}[t]{0.5\textwidth}

|

| 63 |

+

\centering

|

| 64 |

+

Scaled Dot-Product Attention \\

|

| 65 |

+

\vspace{0.5cm}

|

| 66 |

+

\includegraphics[scale=0.6]{Figures/ModalNet-19}

|

| 67 |

+

\end{minipage}

|

| 68 |

+

\begin{minipage}[t]{0.5\textwidth}

|

| 69 |

+

\centering

|

| 70 |

+

Multi-Head Attention \\

|

| 71 |

+

\vspace{0.1cm}

|

| 72 |

+

\includegraphics[scale=0.6]{Figures/ModalNet-20}

|

| 73 |

+

\end{minipage}

|

| 74 |

+

|

| 75 |

+

|

| 76 |

+

% \centering

|

| 77 |

+

|

| 78 |

+

\caption{(left) Scaled Dot-Product Attention. (right) Multi-Head Attention consists of several attention layers running in parallel.}

|

| 79 |

+

\label{fig:multi-head-att}

|

| 80 |

+

\end{figure}

|

| 81 |

+

|

| 82 |

+

Instead of performing a single attention function with $\dmodel$-dimensional keys, values and queries, we found it beneficial to linearly project the queries, keys and values $h$ times with different, learned linear projections to $d_k$, $d_k$ and $d_v$ dimensions, respectively.

|

| 83 |

+

On each of these projected versions of queries, keys and values we then perform the attention function in parallel, yielding $d_v$-dimensional output values. These are concatenated and once again projected, resulting in the final values, as depicted in Figure~\ref{fig:multi-head-att}.

|

| 84 |

+

|

| 85 |

+

Multi-head attention allows the model to jointly attend to information from different representation subspaces at different positions. With a single attention head, averaging inhibits this.

|

| 86 |

+

|

| 87 |

+

\begin{align*}

|

| 88 |

+

\mathrm{MultiHead}(Q, K, V) &= \mathrm{Concat}(\mathrm{head_1}, ..., \mathrm{head_h})W^O\\

|

| 89 |

+

% \mathrm{where} \mathrm{head_i} &= \mathrm{Attention}(QW_Q_i^{\dmodel \times d_q}, KW_K_i^{\dmodel \times d_k}, VW^V_i^{\dmodel \times d_v})\\

|

| 90 |

+

\text{where}~\mathrm{head_i} &= \mathrm{Attention}(QW^Q_i, KW^K_i, VW^V_i)\\

|

| 91 |

+

\end{align*}

|

| 92 |

+

|

| 93 |

+

Where the projections are parameter matrices $W^Q_i \in \mathbb{R}^{\dmodel \times d_k}$, $W^K_i \in \mathbb{R}^{\dmodel \times d_k}$, $W^V_i \in \mathbb{R}^{\dmodel \times d_v}$ and $W^O \in \mathbb{R}^{hd_v \times \dmodel}$.

|

| 94 |

+

|

| 95 |

+

|

| 96 |

+

%find it better (and no more expensive) to have multiple parallel attention layers (each over the full set of positions) with proportionally lower-dimensional keys, values and queries. We call this "Multi-Head Attention" (Figure~\ref{fig:multi-head-att}). The keys, values, and queries for each of these parallel attention layers are computed by learned linear transformations of the inputs to the multi-head attention. We use different linear transformations across different parallel attention layers. The output of the parallel attention layers are concatenated, and then passed through a final learned linear transformation.

|

| 97 |

+

|

| 98 |

+

In this work we employ $h=8$ parallel attention layers, or heads. For each of these we use $d_k=d_v=\dmodel/h=64$.

|

| 99 |

+

Due to the reduced dimension of each head, the total computational cost is similar to that of single-head attention with full dimensionality.

|

| 100 |

+

|

| 101 |

+

\subsubsection{Applications of Attention in our Model}

|

| 102 |

+

|

| 103 |

+

The Transformer uses multi-head attention in three different ways:

|

| 104 |

+

\begin{itemize}

|

| 105 |

+

\item In "encoder-decoder attention" layers, the queries come from the previous decoder layer, and the memory keys and values come from the output of the encoder. This allows every position in the decoder to attend over all positions in the input sequence. This mimics the typical encoder-decoder attention mechanisms in sequence-to-sequence models such as \citep{wu2016google, bahdanau2014neural,JonasFaceNet2017}.

|

| 106 |

+

|

| 107 |

+

\item The encoder contains self-attention layers. In a self-attention layer all of the keys, values and queries come from the same place, in this case, the output of the previous layer in the encoder. Each position in the encoder can attend to all positions in the previous layer of the encoder.

|

| 108 |

+

|

| 109 |

+

\item Similarly, self-attention layers in the decoder allow each position in the decoder to attend to all positions in the decoder up to and including that position. We need to prevent leftward information flow in the decoder to preserve the auto-regressive property. We implement this inside of scaled dot-product attention by masking out (setting to $-\infty$) all values in the input of the softmax which correspond to illegal connections. See Figure~\ref{fig:multi-head-att}.

|

| 110 |

+

|

| 111 |

+

\end{itemize}

|

| 112 |

+

|

| 113 |

+

\subsection{Position-wise Feed-Forward Networks}\label{sec:ffn}

|

| 114 |

+

|

| 115 |

+

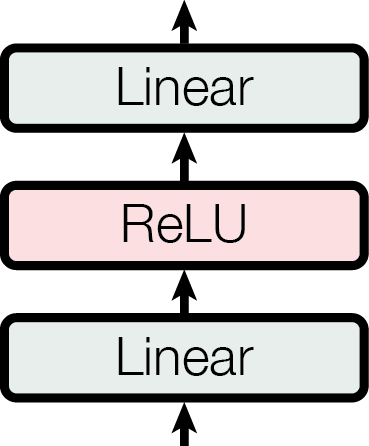

In addition to attention sub-layers, each of the layers in our encoder and decoder contains a fully connected feed-forward network, which is applied to each position separately and identically. This consists of two linear transformations with a ReLU activation in between.

|

| 116 |

+

|

| 117 |

+

\begin{equation}

|

| 118 |

+

\mathrm{FFN}(x)=\max(0, xW_1 + b_1) W_2 + b_2

|

| 119 |

+

\end{equation}

|

| 120 |

+

|

| 121 |

+

While the linear transformations are the same across different positions, they use different parameters from layer to layer. Another way of describing this is as two convolutions with kernel size 1. The dimensionality of input and output is $\dmodel=512$, and the inner-layer has dimensionality $d_{ff}=2048$.

|

| 122 |

+

|

| 123 |

+

|

| 124 |

+

|

| 125 |

+

%In the appendix, we describe how the position-wise feed-forward network can also be seen as a form of attention.

|

| 126 |

+

|

| 127 |

+

%from Jakob: The number of operations required for the model to relate signals from two arbitrary input or output positions grows in the distance between positions in input or output, linearly for ConvS2S and logarithmically for ByteNet, making it harder to learn dependencies between these positions \citep{hochreiter2001gradient}. In the transformer this is reduced to a constant number of operations, albeit at the cost of effective resolution caused by averaging attention-weighted positions, an effect we aim to counteract with multi-headed attention.

|

| 128 |

+

|

| 129 |

+

|

| 130 |

+

%Figure~\ref{fig:simple-att} presents a simple attention function, $A$, with a single head, that forms the basis of our multi-head attention. $A$ takes a query key vector $\kq$, matrices of memory keys $\km$ and memory values $\vm$ ,and produces a query value vector $\vq$ as

|

| 131 |

+

%\begin{equation*} \label{eq:attention}

|

| 132 |

+

% A(\kq, \km, \vm) = {\vm}^T (Softmax(\km \kq).

|

| 133 |

+

%\end{equation*}

|

| 134 |

+

%We linearly transform $\kq,\,\km$, and $\vm$ with learned matrices ${\Wkq \text{,} \, \Wkm}$, and ${\Wvm}$ before calling the attention function, and transform the output query with $\Wvq$ before handing it to the feed forward layer. Each attention layer has it's own set of transformation matrices, which are shared across all query positions. $A$ is applied in parallel for each query position, and is implemented very efficiently as a batch of matrix multiplies. The self-attention and encoder-decoder attention layers use $A$, but with different arguments. For example, in encdoder self-attention, queries in encoder layer $i$ attention to memories in encoder layer $i-1$. To ensure that decoder self-attention layers do not look at future words, we add $- \inf$ to the softmax logits in positions $j+1$ to query length for query position $l$.

|

| 135 |

+

|

| 136 |

+

%In simple attention, the query value is a weighted combination of the memory values where the attention weights sum to one. Although this function performs well in practice, the constraint on attention weights can restrict the amount of information that flows from memories to queries because the query cannot focus on multiple memory positions at once, which might be desirable when translating long sequences. \marginpar{@usz, could you think of an example of this ?} We remedy this by maintaining multiple attention heads at each query position that attend to all memory positions in parallel, with a different set of parameters per attention head $h$.

|

| 137 |

+

%\marginpar{}

|

| 138 |

+

|

| 139 |

+

\subsection{Embeddings and Softmax}

|

| 140 |

+

Similarly to other sequence transduction models, we use learned embeddings to convert the input tokens and output tokens to vectors of dimension $\dmodel$. We also use the usual learned linear transformation and softmax function to convert the decoder output to predicted next-token probabilities. In our model, we share the same weight matrix between the two embedding layers and the pre-softmax linear transformation, similar to \citep{press2016using}. In the embedding layers, we multiply those weights by $\sqrt{\dmodel}$.

|

| 141 |

+

|

| 142 |

+

|

| 143 |

+

\subsection{Positional Encoding}

|

| 144 |

+

Since our model contains no recurrence and no convolution, in order for the model to make use of the order of the sequence, we must inject some information about the relative or absolute position of the tokens in the sequence. To this end, we add "positional encodings" to the input embeddings at the bottoms of the encoder and decoder stacks. The positional encodings have the same dimension $\dmodel$ as the embeddings, so that the two can be summed. There are many choices of positional encodings, learned and fixed \citep{JonasFaceNet2017}.

|

| 145 |

+

|

| 146 |

+

In this work, we use sine and cosine functions of different frequencies:

|

| 147 |

+

|

| 148 |

+

\begin{align*}

|

| 149 |

+

PE_{(pos,2i)} = sin(pos / 10000^{2i/\dmodel}) \\

|

| 150 |

+

PE_{(pos,2i+1)} = cos(pos / 10000^{2i/\dmodel})

|

| 151 |

+

\end{align*}

|

| 152 |

+

|

| 153 |

+

where $pos$ is the position and $i$ is the dimension. That is, each dimension of the positional encoding corresponds to a sinusoid. The wavelengths form a geometric progression from $2\pi$ to $10000 \cdot 2\pi$. We chose this function because we hypothesized it would allow the model to easily learn to attend by relative positions, since for any fixed offset $k$, $PE_{pos+k}$ can be represented as a linear function of $PE_{pos}$.

|

| 154 |

+

|

| 155 |

+

We also experimented with using learned positional embeddings \citep{JonasFaceNet2017} instead, and found that the two versions produced nearly identical results (see Table~\ref{tab:variations} row (E)). We chose the sinusoidal version because it may allow the model to extrapolate to sequence lengths longer than the ones encountered during training.

|

download/20230719163556/output/Attention-Is-All-You-Need/ms.tex

ADDED

|

@@ -0,0 +1,413 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

\documentclass{article}

|

| 2 |

+

|

| 3 |

+

% if you need to pass options to natbib, use, e.g.:

|

| 4 |

+

\PassOptionsToPackage{numbers, compress}{natbib}

|

| 5 |

+

% before loading nips_2017

|

| 6 |

+

%

|

| 7 |

+

% to avoid loading the natbib package, add option nonatbib:

|

| 8 |

+

% \usepackage[nonatbib]{nips_2017}

|

| 9 |

+

|

| 10 |

+

% Keep final for now to remove annoying line numbers.

|

| 11 |

+

%\usepackage[final]{nips_2017}

|

| 12 |

+

% Keep final for now to remove annoying line numbers.

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

% to compile a camera-ready version, add the [final] option, e.g.:

|

| 16 |

+

\usepackage[final]{nips_2017}

|

| 17 |

+

|

| 18 |

+

\usepackage[utf8]{inputenc} % allow utf-8 input

|

| 19 |

+

\usepackage[T1]{fontenc} % use 8-bit T1 fonts

|

| 20 |

+

\usepackage{hyperref} % hyperlinks

|

| 21 |

+

\usepackage{url} % simple URL typesetting

|

| 22 |

+

\usepackage{booktabs} % professional-quality tables

|

| 23 |

+

\usepackage{amsfonts} % blackboard math symbols

|

| 24 |

+

\usepackage{amsmath}

|

| 25 |

+

\usepackage{nicefrac} % compact symbols for 1/2, etc.

|

| 26 |

+

\usepackage{microtype} % microtypography

|

| 27 |

+

\usepackage{subfiles}

|

| 28 |

+

\usepackage{xcolor}

|

| 29 |

+

\usepackage{multirow}

|

| 30 |

+

\usepackage{enumerate}

|

| 31 |

+

\usepackage{subfiles}

|

| 32 |

+

\usepackage{multirow}

|

| 33 |

+

\usepackage{graphicx}

|

| 34 |

+

\usepackage{subfiles}

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

% % Custom Commands:

|

| 38 |

+

% \newcommand\blfootnote[1]{%

|

| 39 |

+

% \begingroup

|

| 40 |

+

% \renewcommand\thefootnote{}\footnote{#1}%

|

| 41 |

+

% \addtocounter{footnote}{-1}%

|

| 42 |

+

% \endgroup

|

| 43 |

+

% }

|

| 44 |

+

|

| 45 |

+

\newcommand\todo[1]{\textcolor{red}{[[#1]]}}

|

| 46 |

+

\newcommand\mc[1]{\mathcal{#1}}

|

| 47 |

+

\newcommand*\samethanks[1][\value{footnote}]{\footnotemark[#1]}

|

| 48 |

+

%keys for memory and values. Can be changed if needed

|

| 49 |

+

\newcommand{\kq}{q}

|

| 50 |

+

\newcommand{\km}{k}

|

| 51 |

+

\newcommand{\vq}{o}

|

| 52 |

+

\newcommand{\vm}{m}

|

| 53 |

+

\newcommand{\Wkq}{W_q}

|

| 54 |

+

\newcommand{\Wkm}{W_k}

|

| 55 |

+

\newcommand{\Wvq}{W_o}

|

| 56 |

+

\newcommand{\Wvm}{W_m}

|

| 57 |

+

\newcommand{\dmodel}{d_{\text{model}}}

|

| 58 |

+

\newcommand{\dffn}{d_{\text{ffn}}}

|

| 59 |

+

\newcommand{\dff}{d_{\text{ff}}}

|

| 60 |

+

\newcommand{\mbf}[1]{\mathbf{#1}}

|

| 61 |

+

%\newcommand{\kq}{{q}_k}

|

| 62 |

+

%\newcommand{\km}{{m}_k}

|

| 63 |

+

%\newcommand{\vq}{{q}_v}

|

| 64 |

+

%\newcommand{\vm}{{m}_v}

|

| 65 |

+

%\newcommand{\Wkq}{{W_q}_k}

|

| 66 |

+

%\newcommand{\Wkm}{{W_m}_k}

|

| 67 |

+

%\newcommand{\Wvq}{{W_q}_v}

|

| 68 |

+

%\newcommand{\Wvm}{{W_m}_v}

|

| 69 |

+

\newcommand\concat[3]{\left[#1 \parallel_#3 #2\right]}

|

| 70 |

+

|

| 71 |

+

\title{Attention Is All You Need}

|

| 72 |

+

|

| 73 |

+

% The \author macro works with any number of authors. There are two

|

| 74 |

+

% commands used to separate the names and addresses of multiple

|

| 75 |

+

% authors: \And and \AND.

|

| 76 |

+

%

|

| 77 |

+

% Using \And between authors leaves it to LaTeX to determine where to

|

| 78 |

+

% break the lines. Using \AND forces a line break at that point. So,

|

| 79 |

+

% if LaTeX puts 3 of 4 authors names on the first line, and the last

|

| 80 |

+

% on the second line, try using \AND instead of \And before the third

|

| 81 |

+

% author name.

|

| 82 |

+

\author{

|

| 83 |

+

\AND

|

| 84 |

+

Ashish Vaswani\thanks{Equal contribution. Listing order is random. Jakob proposed replacing RNNs with self-attention and started the effort to evaluate this idea.

|

| 85 |

+

Ashish, with Illia, designed and implemented the first Transformer models and has been crucially involved in every aspect of this work. Noam proposed scaled dot-product attention, multi-head attention and the parameter-free position representation and became the other person involved in nearly every detail. Niki designed, implemented, tuned and evaluated countless model variants in our original codebase and tensor2tensor. Llion also experimented with novel model variants, was responsible for our initial codebase, and efficient inference and visualizations. Lukasz and Aidan spent countless long days designing various parts of and implementing tensor2tensor, replacing our earlier codebase, greatly improving results and massively accelerating our research.

|

| 86 |

+

}\\

|

| 87 |

+

Google Brain\\

|

| 88 |

+

\texttt{[email protected]}\\

|

| 89 |

+

\And

|

| 90 |

+

Noam Shazeer\footnotemark[1]\\

|

| 91 |

+

Google Brain\\

|

| 92 |

+

\texttt{[email protected]}\\

|

| 93 |

+

\And

|

| 94 |

+

Niki Parmar\footnotemark[1]\\

|

| 95 |

+

Google Research\\

|

| 96 |

+

\texttt{[email protected]}\\

|

| 97 |

+

\And

|

| 98 |

+

Jakob Uszkoreit\footnotemark[1]\\

|

| 99 |

+

Google Research\\

|

| 100 |

+

\texttt{[email protected]}\\

|

| 101 |

+

\And

|

| 102 |

+

Llion Jones\footnotemark[1]\\

|

| 103 |

+

Google Research\\

|

| 104 |

+

\texttt{[email protected]}\\

|

| 105 |

+

\And

|

| 106 |

+

Aidan N. Gomez\footnotemark[1] \hspace{1.7mm}\thanks{Work performed while at Google Brain.}\\

|

| 107 |

+

University of Toronto\\

|

| 108 |

+

\texttt{[email protected]}

|

| 109 |

+

\And

|

| 110 |

+

{\L}ukasz Kaiser\footnotemark[1]\\

|

| 111 |

+

Google Brain\\

|

| 112 |

+

\texttt{[email protected]}\\

|

| 113 |

+

\And

|

| 114 |

+

Illia Polosukhin\footnotemark[1]\hspace{1.7mm} \thanks{Work performed while at Google Research.}\\

|

| 115 |

+

\texttt{[email protected]}\\

|

| 116 |

+

}

|

| 117 |

+

|

| 118 |

+

\begin{document}

|

| 119 |

+

|

| 120 |

+

\maketitle

|

| 121 |

+

|

| 122 |

+

\begin{abstract}

|

| 123 |

+

The dominant sequence transduction models are based on complex recurrent or convolutional neural networks that include an encoder and a decoder. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train. Our model achieves 28.4 BLEU on the WMT 2014 English-to-German translation task, improving over the existing best results, including ensembles, by over 2 BLEU. On the WMT 2014 English-to-French translation task, our model establishes a new single-model state-of-the-art BLEU score of 41.8 after training for 3.5 days on eight GPUs, a small fraction of the training costs of the best models from the literature. We show that the Transformer generalizes well to other tasks by applying it successfully to English constituency parsing both with large and limited training data.

|

| 124 |

+

% \blfootnote{Code available at \url{https://github.com/tensorflow/tensor2tensor}}

|

| 125 |

+

|

| 126 |

+

%TODO(noam): update results for new models.

|

| 127 |

+

|

| 128 |

+