diff --git a/lagent/examples/.ipynb_checkpoints/agent_api_web_demo-checkpoint.py b/lagent/examples/.ipynb_checkpoints/agent_api_web_demo-checkpoint.py

new file mode 100644

index 0000000000000000000000000000000000000000..de909f08ce94c1167adb411079447d85ebb8a9a1

--- /dev/null

+++ b/lagent/examples/.ipynb_checkpoints/agent_api_web_demo-checkpoint.py

@@ -0,0 +1,197 @@

+import copy

+import os

+from typing import List

+import streamlit as st

+from lagent.prompts.parsers import PluginParser

+from lagent.agents.stream import INTERPRETER_CN, META_CN, PLUGIN_CN, AgentForInternLM, get_plugin_prompt

+from lagent.llms import GPTAPI

+from lagent.actions import ArxivSearch, WeatherQuery

+

+

+class SessionState:

+ """Class that manages the session state."""

+

+ def init_state(self):

+ """Initialize the session-state variables."""

+ st.session_state['assistant'] = [] # assistant message history

+ st.session_state['user'] = [] # user message history

+ # Initialize the plugin list

+ action_list = [

+ ArxivSearch(),

+ WeatherQuery(),

+ ]

+ st.session_state['plugin_map'] = {action.name: action for action in action_list}

+ st.session_state['model_map'] = {} # stores model instances

+ st.session_state['model_selected'] = None # currently selected model

+ st.session_state['plugin_actions'] = set() # currently active plugins

+ st.session_state['history'] = [] # chat history

+ st.session_state['api_base'] = None # API base URL, initialized to None

+

+ def clear_state(self):

+ """Clear the current session state."""

+ st.session_state['assistant'] = []

+ st.session_state['user'] = []

+ st.session_state['model_selected'] = None

+

+

+class StreamlitUI:

+ """Class that manages the Streamlit interface."""

+

+ def __init__(self, session_state: SessionState):

+ self.session_state = session_state

+ self.plugin_action = [] # currently selected plugins

+ # Initialize the prompts

+ self.meta_prompt = META_CN

+ self.plugin_prompt = PLUGIN_CN

+ self.init_streamlit()

+

+ def init_streamlit(self):

+ """Initialize the Streamlit UI settings."""

+ st.set_page_config(

+ layout='wide',

+ page_title='lagent-web',

+ page_icon='./docs/imgs/lagent_icon.png'

+ )

+ st.header(':robot_face: :blue[Lagent] Web Demo ', divider='rainbow')

+

+ def setup_sidebar(self):

+ """Set up the sidebar for choosing the model and plugins."""

+ # Input boxes for the model name and the API base URL

+ model_name = st.sidebar.text_input('模型名称:', value='internlm2.5-latest')

+

+ # ================================== SiliconFlow API ==================================

+ # Note: when using the SiliconFlow API, change the model name to internlm/internlm2_5-7b-chat or internlm/internlm2_5-20b-chat

+ # api_base = st.sidebar.text_input(

+ # 'API Base 地址:', value='https://api.siliconflow.cn/v1/chat/completions'

+ # )

+ # ================================== Official InternLM (Puyu) API ==================================

+ api_base = st.sidebar.text_input(

+ 'API Base 地址:', value='https://internlm-chat.intern-ai.org.cn/puyu/api/v1/chat/completions'

+ )

+ # ==================================================================================

+ # Plugin selection

+ plugin_name = st.sidebar.multiselect(

+ '插件选择',

+ options=list(st.session_state['plugin_map'].keys()),

+ default=[],

+ )

+

+ # Build the list of plugin actions from the selected plugin names

+ self.plugin_action = [st.session_state['plugin_map'][name] for name in plugin_name]

+

+ # Generate the plugin prompt dynamically

+ if self.plugin_action:

+ self.plugin_prompt = get_plugin_prompt(self.plugin_action)

+

+ # "Clear conversation" button

+ if st.sidebar.button('清空对话', key='clear'):

+ self.session_state.clear_state()

+

+ return model_name, api_base, self.plugin_action

+

+ def initialize_chatbot(self, model_name, api_base, plugin_action):

+ """Initialize a GPTAPI instance to serve as the chatbot."""

+ token = os.getenv("token")

+ if not token:

+ st.error("未检测到环境变量 `token`,请设置环境变量,例如 `export token='your_token_here'` 后重新运行 X﹏X")

+ st.stop() # stop running the app

+

+ # Build the full meta_prompt, keeping the original structure and dynamically inserting the sidebar configuration

+ meta_prompt = [

+ {"role": "system", "content": self.meta_prompt, "api_role": "system"},

+ {"role": "user", "content": "", "api_role": "user"},

+ {"role": "assistant", "content": self.plugin_prompt, "api_role": "assistant"},

+ {"role": "environment", "content": "", "api_role": "environment"}

+ ]

+

+ api_model = GPTAPI(

+ model_type=model_name,

+ api_base=api_base,

+ key=token, # authorization token read from the environment variable

+ meta_template=meta_prompt,

+ max_new_tokens=512,

+ temperature=0.8,

+ top_p=0.9

+ )

+ return api_model

+

+ def render_user(self, prompt: str):

+ """Render the user's input."""

+ with st.chat_message('user'):

+ st.markdown(prompt)

+

+ def render_assistant(self, agent_return):

+ """Render the assistant's response."""

+ with st.chat_message('assistant'):

+ content = getattr(agent_return, "content", str(agent_return))

+ st.markdown(content if isinstance(content, str) else str(content))

+

+

+def main():

+ """Main function: run the Streamlit app."""

+ if 'ui' not in st.session_state:

+ session_state = SessionState()

+ session_state.init_state()

+ st.session_state['ui'] = StreamlitUI(session_state)

+ else:

+ st.set_page_config(

+ layout='wide',

+ page_title='lagent-web',

+ page_icon='./docs/imgs/lagent_icon.png'

+ )

+ st.header(':robot_face: :blue[Lagent] Web Demo ', divider='rainbow')

+

+ # Set up the sidebar and get the model and plugin settings

+ model_name, api_base, plugin_action = st.session_state['ui'].setup_sidebar()

+ plugins = [dict(type=f"lagent.actions.{plugin.__class__.__name__}") for plugin in plugin_action]

+

+ if (

+ 'chatbot' not in st.session_state or

+ model_name != st.session_state['chatbot'].model_type or

+ 'last_plugin_action' not in st.session_state or

+ plugin_action != st.session_state['last_plugin_action'] or

+ api_base != st.session_state['api_base']

+ ):

+ # Rebuild the chatbot

+ st.session_state['chatbot'] = st.session_state['ui'].initialize_chatbot(model_name, api_base, plugin_action)

+ st.session_state['last_plugin_action'] = plugin_action # remember the plugin selection

+ st.session_state['api_base'] = api_base # remember the API base URL

+

+ # Initialize AgentForInternLM

+ st.session_state['agent'] = AgentForInternLM(

+ llm=st.session_state['chatbot'],

+ plugins=plugins,

+ output_format=dict(

+ type=PluginParser,

+ template=PLUGIN_CN,

+ prompt=get_plugin_prompt(plugin_action)

+ )

+ )

+ # Clear the conversation history

+ st.session_state['session_history'] = []

+

+ if 'agent' not in st.session_state:

+ st.session_state['agent'] = None

+

+ agent = st.session_state['agent']

+ for prompt, agent_return in zip(st.session_state['user'], st.session_state['assistant']):

+ st.session_state['ui'].render_user(prompt)

+ st.session_state['ui'].render_assistant(agent_return)

+

+ # Handle user input

+ if user_input := st.chat_input(''):

+ st.session_state['ui'].render_user(user_input)

+

+ # Make sure the system prompt and plugin prompt from the sidebar take effect when the model is called

+ res = agent(user_input, session_id=0)

+ st.session_state['ui'].render_assistant(res)

+

+ # Update the session state

+ st.session_state['user'].append(user_input)

+ st.session_state['assistant'].append(copy.deepcopy(res))

+

+ st.session_state['last_status'] = None

+

+

+if __name__ == '__main__':

+ main()

diff --git a/lagent/examples/.ipynb_checkpoints/multi_agents_api_web_demo-checkpoint.py b/lagent/examples/.ipynb_checkpoints/multi_agents_api_web_demo-checkpoint.py

new file mode 100644

index 0000000000000000000000000000000000000000..c30f0b4280c5179778a89fd3ac3b46441028fdcd

--- /dev/null

+++ b/lagent/examples/.ipynb_checkpoints/multi_agents_api_web_demo-checkpoint.py

@@ -0,0 +1,198 @@

+import os

+import asyncio

+import json

+import re

+import requests

+import streamlit as st

+

+from lagent.agents import Agent

+from lagent.prompts.parsers import PluginParser

+from lagent.agents.stream import PLUGIN_CN, get_plugin_prompt

+from lagent.schema import AgentMessage

+from lagent.actions import ArxivSearch

+from lagent.hooks import Hook

+from lagent.llms import GPTAPI

+

+YOUR_TOKEN_HERE = os.getenv("token")

+if not YOUR_TOKEN_HERE:

+ raise EnvironmentError("未找到环境变量 'token',请设置后再运行程序。")

+

+# Hook class that adds a prefix to messages

+class PrefixedMessageHook(Hook):

+ def __init__(self, prefix, senders=None):

+ """

+ Initialize the hook.

+ :param prefix: prefix to prepend to messages

+ :param senders: list of senders whose messages get the prefix

+ """

+ self.prefix = prefix

+ self.senders = senders or []

+

+ def before_agent(self, agent, messages, session_id):

+ """

+ Modify message content before the agent processes the messages.

+ :param agent: the current agent

+ :param messages: list of messages

+ :param session_id: session ID

+ """

+ for message in messages:

+ if message.sender in self.senders:

+ message.content = self.prefix + message.content

+

+class AsyncBlogger:

+ """Blog generator that combines a writer and a critic."""

+

+ def __init__(self, model_type, api_base, writer_prompt, critic_prompt, critic_prefix='', max_turn=2):

+ """

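+ Initialize the blog generator.

+ :param model_type: model type

+ :param api_base: API base URL

+ :param writer_prompt: system prompt for the writer

+ :param critic_prompt: system prompt for the critic

+ :param critic_prefix: prefix added to writer messages before the critic sees them

+ :param max_turn: maximum number of turns (stored but not currently used by forward)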

+ """

+ self.model_type = model_type

+ self.api_base = api_base

+ self.llm = GPTAPI(

+ model_type=model_type,

+ api_base=api_base,

+ key=YOUR_TOKEN_HERE,

+ max_new_tokens=4096,

+ )

+ self.plugins = [dict(type='lagent.actions.ArxivSearch')]

+ self.writer = Agent(

+ self.llm,

+ writer_prompt,

+ name='写作者',

+ output_format=dict(

+ type=PluginParser,

+ template=PLUGIN_CN,

+ prompt=get_plugin_prompt(self.plugins)

+ )

+ )

+ self.critic = Agent(

+ self.llm,

+ critic_prompt,

+ name='批评者',

+ hooks=[PrefixedMessageHook(critic_prefix, ['写作者'])]

+ )

+ self.max_turn = max_turn

+

+ async def forward(self, message: AgentMessage, update_placeholder):

+ """

+ Run the multi-stage blog generation pipeline.

+ :param message: initial message

+ :param update_placeholder: Streamlit placeholder

+ :return: the final refined blog content

+ """

+ step1_placeholder = update_placeholder.container()

+ step2_placeholder = update_placeholder.container()

+ step3_placeholder = update_placeholder.container()

+

+ # Step 1: generate the initial content

+ step1_placeholder.markdown("**Step 1: 生成初始内容...**")

+ message = self.writer(message)

+ if message.content:

+ step1_placeholder.markdown(f"**生成的初始内容**:\n\n{message.content}")

+ else:

+ step1_placeholder.markdown("**生成的初始内容为空,请检查生成逻辑。**")

+

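+ feedback, arxiv_results = "未提供批评建议", "" # Step 2: the critic gives feedback; defaults keep Step 3 from raising NameError if the critic returns nothing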

+ step2_placeholder.markdown("**Step 2: 批评者正在提供反馈和文献推荐...**")

+ message = self.critic(message)

+ if message.content:

+ # Parse the critic's feedback

+ suggestions = re.search(r"1\. 批评建议:\n(.*?)2\. 推荐的关键词:", message.content, re.S)

+ keywords = re.search(r"2\. 推荐的关键词:\n- (.*)", message.content)

+ feedback = suggestions.group(1).strip() if suggestions else "未提供批评建议"

+ keywords = keywords.group(1).strip() if keywords else "未提供关键词"

+

+ # Query arXiv for related papers

+ arxiv_search = ArxivSearch()

+ arxiv_results = arxiv_search.get_arxiv_article_information(keywords)

+

+ # Display the critique and the recommended papers

+ message.content = f"**批评建议**:\n{feedback}\n\n**推荐的文献**:\n{arxiv_results}"

+ step2_placeholder.markdown(f"**批评和文献推荐**:\n\n{message.content}")

+ else:

+ step2_placeholder.markdown("**批评内容为空,请检查批评逻辑。**")

+

+ # Step 3: the writer refines the content based on the feedback

+ step3_placeholder.markdown("**Step 3: 根据反馈改进内容...**")

+ improvement_prompt = AgentMessage(

+ sender="critic",

+ content=(

+ f"根据以下批评建议和推荐文献对内容进行改进:\n\n"

+ f"批评建议:\n{feedback}\n\n"

+ f"推荐文献:\n{arxiv_results}\n\n"

+ f"请优化初始内容,使其更加清晰、丰富,并符合专业水准。"

+ ),

+ )

+ message = self.writer(improvement_prompt)

+ if message.content:

+ step3_placeholder.markdown(f"**最终优化的博客内容**:\n\n{message.content}")

+ else:

+ step3_placeholder.markdown("**最终优化的博客内容为空,请检查生成逻辑。**")

+

+ return message

+

+def setup_sidebar():

+ """Set up the sidebar for choosing the model."""

+ model_name = st.sidebar.text_input('模型名称:', value='internlm2.5-latest')

+ api_base = st.sidebar.text_input(

+ 'API Base 地址:', value='https://internlm-chat.intern-ai.org.cn/puyu/api/v1/chat/completions'

+ )

+

+ return model_name, api_base

+

+def main():

+ """

+ Main function: build the Streamlit interface and handle user interaction

+ """

+ st.set_page_config(layout='wide', page_title='Lagent Web Demo', page_icon='🤖')

+ st.title("多代理博客优化助手")

+

+ model_type, api_base = setup_sidebar()

+ topic = st.text_input('输入一个话题:', 'Self-Supervised Learning')

+ generate_button = st.button('生成博客内容')

+

+ if (

+ 'blogger' not in st.session_state or

+ st.session_state['model_type'] != model_type or

+ st.session_state['api_base'] != api_base

+ ):

+ st.session_state['blogger'] = AsyncBlogger(

+ model_type=model_type,

+ api_base=api_base,

+ writer_prompt="你是一位优秀的AI内容写作者,请撰写一篇有吸引力且信息丰富的博客内容。",

+ critic_prompt="""

+ 作为一位严谨的批评者,请给出建设性的批评和改进建议,并基于相关主题使用已有的工具推荐一些参考文献,推荐的关键词应该是英语形式,简洁且切题。

+ 请按照以下格式提供反馈:

+ 1. 批评建议:

+ - (具体建议)

+ 2. 推荐的关键词:

+ - (关键词1, 关键词2, ...)

+ """,

+ critic_prefix="请批评以下内容,并提供改进建议:\n\n"

+ )

+ st.session_state['model_type'] = model_type

+ st.session_state['api_base'] = api_base

+

+ if generate_button:

+ update_placeholder = st.container() # a multi-element container, so the three step containers do not overwrite each other

+

+ async def run_async_blogger():

+ message = AgentMessage(

+ sender='user',

+ content=f"请撰写一篇关于{topic}的博客文章,要求表达专业,生动有趣,并且易于理解。"

+ )

+ result = await st.session_state['blogger'].forward(message, update_placeholder)

+ return result

+

+ loop = asyncio.new_event_loop()

+ asyncio.set_event_loop(loop)

+ loop.run_until_complete(run_async_blogger())

+

+if __name__ == '__main__':

+ main()

\ No newline at end of file

diff --git a/lagent/lagent.egg-info/PKG-INFO b/lagent/lagent.egg-info/PKG-INFO

new file mode 100644

index 0000000000000000000000000000000000000000..0451a90147a9100b015eaa27ccb2a46640fe0a3f

--- /dev/null

+++ b/lagent/lagent.egg-info/PKG-INFO

@@ -0,0 +1,600 @@

+Metadata-Version: 2.1

+Name: lagent

+Version: 0.5.0rc1

+Summary: A lightweight framework for building LLM-based agents

+Home-page: https://github.com/InternLM/lagent

+License: Apache 2.0

+Keywords: artificial general intelligence,agent,agi,llm

+Description-Content-Type: text/markdown

+License-File: LICENSE

+Requires-Dist: aiohttp

+Requires-Dist: arxiv

+Requires-Dist: asyncache

+Requires-Dist: asyncer

+Requires-Dist: distro

+Requires-Dist: duckduckgo_search==5.3.1b1

+Requires-Dist: filelock

+Requires-Dist: func_timeout

+Requires-Dist: griffe<1.0

+Requires-Dist: json5

+Requires-Dist: jsonschema

+Requires-Dist: jupyter==1.0.0

+Requires-Dist: jupyter_client==8.6.2

+Requires-Dist: jupyter_core==5.7.2

+Requires-Dist: pydantic==2.6.4

+Requires-Dist: requests

+Requires-Dist: termcolor

+Requires-Dist: tiktoken

+Requires-Dist: timeout-decorator

+Requires-Dist: typing-extensions

+Provides-Extra: all

+Requires-Dist: google-search-results; extra == "all"

+Requires-Dist: lmdeploy>=0.2.5; extra == "all"

+Requires-Dist: pillow; extra == "all"

+Requires-Dist: python-pptx; extra == "all"

+Requires-Dist: timeout_decorator; extra == "all"

+Requires-Dist: torch; extra == "all"

+Requires-Dist: transformers<=4.40,>=4.34; extra == "all"

+Requires-Dist: vllm>=0.3.3; extra == "all"

+Requires-Dist: aiohttp; extra == "all"

+Requires-Dist: arxiv; extra == "all"

+Requires-Dist: asyncache; extra == "all"

+Requires-Dist: asyncer; extra == "all"

+Requires-Dist: distro; extra == "all"

+Requires-Dist: duckduckgo_search==5.3.1b1; extra == "all"

+Requires-Dist: filelock; extra == "all"

+Requires-Dist: func_timeout; extra == "all"

+Requires-Dist: griffe<1.0; extra == "all"

+Requires-Dist: json5; extra == "all"

+Requires-Dist: jsonschema; extra == "all"

+Requires-Dist: jupyter==1.0.0; extra == "all"

+Requires-Dist: jupyter_client==8.6.2; extra == "all"

+Requires-Dist: jupyter_core==5.7.2; extra == "all"

+Requires-Dist: pydantic==2.6.4; extra == "all"

+Requires-Dist: requests; extra == "all"

+Requires-Dist: termcolor; extra == "all"

+Requires-Dist: tiktoken; extra == "all"

+Requires-Dist: timeout-decorator; extra == "all"

+Requires-Dist: typing-extensions; extra == "all"

+Provides-Extra: optional

+Requires-Dist: google-search-results; extra == "optional"

+Requires-Dist: lmdeploy>=0.2.5; extra == "optional"

+Requires-Dist: pillow; extra == "optional"

+Requires-Dist: python-pptx; extra == "optional"

+Requires-Dist: timeout_decorator; extra == "optional"

+Requires-Dist: torch; extra == "optional"

+Requires-Dist: transformers<=4.40,>=4.34; extra == "optional"

+Requires-Dist: vllm>=0.3.3; extra == "optional"

+

+

+

+

+

+[](https://lagent.readthedocs.io/en/latest/)

+[](https://pypi.org/project/lagent)

+[](https://github.com/InternLM/lagent/tree/main/LICENSE)

+[](https://github.com/InternLM/lagent/issues)

+[](https://github.com/InternLM/lagent/issues)

+

+

+

+

+

+

+ 👋 join us on 𝕏 (Twitter), Discord and WeChat

+

+

+## Installation

+

+Install from source:

+

+```bash

+git clone https://github.com/InternLM/lagent.git

+cd lagent

+pip install -e .

+```

+

+## Usage

+

+Lagent is inspired by the design philosophy of PyTorch: agents are treated like neural network layers, which we expect makes workflows clearer and more intuitive, since users only need to create layers and define, in a Pythonic way, how messages pass between them. The following is a short tutorial to get you started with building multi-agent applications.

+

+### Models as Agents

+

+Agents use `AgentMessage` for communication.

+

+```python

+from typing import Dict, List

+from lagent.agents import Agent

+from lagent.schema import AgentMessage

+from lagent.llms import VllmModel, INTERNLM2_META

+

+llm = VllmModel(

+ path='Qwen/Qwen2-7B-Instruct',

+ meta_template=INTERNLM2_META,

+ tp=1,

+ top_k=1,

+ temperature=1.0,

+ stop_words=['<|im_end|>'],

+ max_new_tokens=1024,

+)

+# "Your answer may only be one of the three characters 典, 孝 and 急."

+system_prompt = '你的回答只能从“典”、“孝”、“急”三个字中选一个。'

+agent = Agent(llm, system_prompt)

+

+user_msg = AgentMessage(sender='user', content='今天天气情况')  # "What's the weather like today?"

+bot_msg = agent(user_msg)

+print(bot_msg)

+```

+

+```

+content='急' sender='Agent' formatted=None extra_info=None type=None receiver=None stream_state=<AgentStatusCode.END: 0>

+```

+

+### Memory as State

+

+Both the input and the output messages are added to the memory of `Agent` on every call. This happens in `__call__` rather than in `forward`, as the following pseudocode shows:

+

+```python

+ def __call__(self, *message):

+ message = pre_hooks(message)

+ add_memory(message)

+ message = self.forward(*message)

+ add_memory(message)

+ message = post_hooks(message)

+ return message

+```
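To make the ordering concrete, here is a minimal, framework-free sketch of the same `__call__`/`forward` split. `ToyAgent` and `Msg` are hypothetical stand-ins, not Lagent classes, and the hooks are plain callables. The point it illustrates is that memory is written inside `__call__`, so a subclass that only implements `forward` still has both the (pre-hooked) input and the raw output recorded.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Msg:
    sender: str
    content: str


class ToyAgent:
    """Hypothetical agent showing where memory is updated."""

    def __init__(self, name: str,
                 pre_hooks: Optional[List[Callable[[Msg], Msg]]] = None,
                 post_hooks: Optional[List[Callable[[Msg], Msg]]] = None):
        self.name = name
        self.memory: List[Msg] = []
        self.pre_hooks = pre_hooks or []
        self.post_hooks = post_hooks or []

    def __call__(self, message: Msg) -> Msg:
        for hook in self.pre_hooks:    # pre-hooks may rewrite the input
            message = hook(message)
        self.memory.append(message)    # record the hooked input
        reply = self.forward(message)  # subclasses only implement this
        self.memory.append(reply)      # record the raw output
        for hook in self.post_hooks:   # post-hooks run after memory is written
            reply = hook(reply)
        return reply

    def forward(self, message: Msg) -> Msg:
        # Placeholder "model": echo the input in upper case.
        return Msg(sender=self.name, content=message.content.upper())


agent = ToyAgent('Agent', pre_hooks=[lambda m: Msg(m.sender, m.content.strip())])
reply = agent(Msg('user', '  hello  '))
print(reply)
print([(m.sender, m.content) for m in agent.memory])
```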

+

+The memory can be inspected in two ways:

+

+```python

+memory: List[AgentMessage] = agent.memory.get_memory()

+print(memory)

+print('-' * 120)

+dumped_memory: Dict[str, List[dict]] = agent.state_dict()

+print(dumped_memory['memory'])

+```

+

+```

+[AgentMessage(content='今天天气情况', sender='user', formatted=None, extra_info=None, type=None, receiver=None, stream_state=<AgentStatusCode.END: 0>), AgentMessage(content='急', sender='Agent', formatted=None, extra_info=None, type=None, receiver=None, stream_state=<AgentStatusCode.END: 0>)]

+------------------------------------------------------------------------------------------------------------------------

+[{'content': '今天天气情况', 'sender': 'user', 'formatted': None, 'extra_info': None, 'type': None, 'receiver': None, 'stream_state': <AgentStatusCode.END: 0>}, {'content': '急', 'sender': 'Agent', 'formatted': None, 'extra_info': None, 'type': None, 'receiver': None, 'stream_state': <AgentStatusCode.END: 0>}]

+```

+

+Clear the memory of the current session (`session_id=0` by default):

+

+```python

+agent.memory.reset()

+```

+

+### Custom Message Aggregation

+

+Under the hood, `DefaultAggregator` is called to assemble the `AgentMessage`s stored in memory and convert them into the OpenAI message format:

+

+```python

+ def forward(self, *message: AgentMessage, session_id=0, **kwargs) -> Union[AgentMessage, str]:

+ formatted_messages = self.aggregator.aggregate(

+ self.memory.get(session_id),

+ self.name,

+ self.output_format,

+ self.template,

+ )

+ llm_response = self.llm.chat(formatted_messages, **kwargs)

+ ...

+```

+

+Implement a simple aggregator that accepts few-shot examples:

+

+```python

+from typing import List, Union

+from lagent.memory import Memory

+from lagent.prompts import StrParser

+from lagent.agents.aggregator import DefaultAggregator

+

+class FewshotAggregator(DefaultAggregator):

+ def __init__(self, few_shot: List[dict] = None):

+ self.few_shot = few_shot or []

+

+ def aggregate(self,

+ messages: Memory,

+ name: str,

+ parser: StrParser = None,

+ system_instruction: Union[str, dict, List[dict]] = None) -> List[dict]:

+ _message = []

+ if system_instruction:

+ _message.extend(

+ self.aggregate_system_intruction(system_instruction))

+ _message.extend(self.few_shot)

+ messages = messages.get_memory()

+ for message in messages:

+ if message.sender == name:

+ _message.append(

+ dict(role='assistant', content=str(message.content)))

+ else:

+ user_message = message.content

+ if len(_message) > 0 and _message[-1]['role'] == 'user':

+ _message[-1]['content'] += user_message

+ else:

+ _message.append(dict(role='user', content=user_message))

+ return _message

+

+agent = Agent(

+ llm,

+ aggregator=FewshotAggregator(

+ [

+ {"role": "user", "content": "今天天气"},  # "Today's weather"

+ {"role": "assistant", "content": "【晴】"},  # "[Sunny]"

+ ]

+ )

+)

+user_msg = AgentMessage(sender='user', content='昨天天气')  # "Yesterday's weather"

+bot_msg = agent(user_msg)

+print(bot_msg)

+```

+

+```

+content='【多云转晴,夜间有轻微降温】' sender='Agent' formatted=None extra_info=None type=None receiver=None stream_state=<AgentStatusCode.END: 0>

+```

+

+### Flexible Response Formatting

+

+In `AgentMessage`, `formatted` is reserved to store information parsed by `output_format` from the model output.

+

+```python

+ def forward(self, *message: AgentMessage, session_id=0, **kwargs) -> Union[AgentMessage, str]:

+ ...

+ llm_response = self.llm.chat(formatted_messages, **kwargs)

+ if self.output_format:

+ formatted_messages = self.output_format.parse_response(llm_response)

+ return AgentMessage(

+ sender=self.name,

+ content=llm_response,

+ formatted=formatted_messages,

+ )

+ ...

+```
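As a rough illustration of what "parsed by `output_format`" means, the sketch below splits a raw response at an opening code fence into a `thought` and an `action`, producing a dict shaped like the `formatted` field shown further down. `parse_tool_response` is hypothetical and is not Lagent's `ToolParser`; the status values (1 for a tool call, 0 for a plain answer) are an assumption chosen to mirror the `"status": 1` in that output.

```python
from typing import Optional, TypedDict

FENCE = '`' * 3  # built programmatically so the snippet nests cleanly in Markdown


class Formatted(TypedDict):
    tool_type: Optional[str]
    thought: str
    action: Optional[str]
    status: int


def parse_tool_response(text: str,
                        tool_type: str = 'code interpreter',
                        begin: str = FENCE + 'python\n',
                        end: str = '\n' + FENCE + '\n') -> Formatted:
    """Split a raw model response into a thought and an optional tool call.

    Hypothetical status codes: 1 = tool call found, 0 = plain answer.
    """
    start = text.find(begin)
    if start == -1:
        # No opening marker: the whole response is a final answer.
        return Formatted(tool_type=None, thought=text, action=None, status=0)
    thought = text[:start]
    action = text[start + len(begin):]
    # The closing marker is often absent because it was used as a stop word.
    stop = action.find(end)
    if stop != -1:
        action = action[:stop]
    return Formatted(tool_type=tool_type, thought=thought, action=action, status=1)


response = 'Compute it.\n' + FENCE + 'python\nprint(63 * -63)\n'
print(parse_tool_response(response))
```

Returning `tool_type=None` for a plain answer is what lets a driver loop stop with a check like `message.formatted['tool_type'] is None`.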

+

+A tool parser can be used as follows:

+

+````python

+from lagent.prompts.parsers import ToolParser

+

+system_prompt = "逐步分析并编写Python代码解决以下问题。"  # "Analyze step by step and write Python code to solve the following problem."

+parser = ToolParser(tool_type='code interpreter', begin='```python\n', end='\n```\n')

+llm.gen_params['stop_words'].append('\n```\n')

+agent = Agent(llm, system_prompt, output_format=parser)

+

+user_msg = AgentMessage(

+ sender='user',

+ content='Marie is thinking of a multiple of 63, while Jay is thinking of a '

+ 'factor of 63. They happen to be thinking of the same number. There are '

+ 'two possibilities for the number that each of them is thinking of, one '

+ 'positive and one negative. Find the product of these two numbers.')

+bot_msg = agent(user_msg)

+print(bot_msg.model_dump_json(indent=4))

+````

+

+````

+{

+ "content": "首先,我们需要找出63的所有正因数和负因数。63的正因数可以通过分解63的质因数来找出,即\\(63 = 3^2 \\times 7\\)。因此,63的正因数包括1, 3, 7, 9, 21, 和 63。对于负因数,我们只需将上述正因数乘以-1。\n\n接下来,我们需要找出与63的正因数相乘的结果为63的数,以及与63的负因数相乘的结果为63的数。这可以通过将63除以每个正因数和负因数来实现。\n\n最后,我们将找到的两个数相乘得到最终答案。\n\n下面是Python代码实现:\n\n```python\ndef find_numbers():\n # 正因数\n positive_factors = [1, 3, 7, 9, 21, 63]\n # 负因数\n negative_factors = [-1, -3, -7, -9, -21, -63]\n \n # 找到与正因数相乘的结果为63的数\n positive_numbers = [63 / factor for factor in positive_factors]\n # 找到与负因数相乘的结果为63的数\n negative_numbers = [-63 / factor for factor in negative_factors]\n \n # 计算两个数的乘积\n product = positive_numbers[0] * negative_numbers[0]\n \n return product\n\nresult = find_numbers()\nprint(result)",

+ "sender": "Agent",

+ "formatted": {

+ "tool_type": "code interpreter",

+ "thought": "首先,我们需要找出63的所有正因数和负因数。63的正因数可以通过分解63的质因数来找出,即\\(63 = 3^2 \\times 7\\)。因此,63的正因数包括1, 3, 7, 9, 21, 和 63。对于负因数,我们只需将上述正因数乘以-1。\n\n接下来,我们需要找出与63的正因数相乘的结果为63的数,以及与63的负因数相乘的结果为63的数。这可以通过将63除以每个正因数和负因数来实现。\n\n最后,我们将找到的两个数相乘得到最终答案。\n\n下面是Python代码实现:\n\n",

+ "action": "def find_numbers():\n # 正因数\n positive_factors = [1, 3, 7, 9, 21, 63]\n # 负因数\n negative_factors = [-1, -3, -7, -9, -21, -63]\n \n # 找到与正因数相乘的结果为63的数\n positive_numbers = [63 / factor for factor in positive_factors]\n # 找到与负因数相乘的结果为63的数\n negative_numbers = [-63 / factor for factor in negative_factors]\n \n # 计算两个数的乘积\n product = positive_numbers[0] * negative_numbers[0]\n \n return product\n\nresult = find_numbers()\nprint(result)",

+ "status": 1

+ },

+ "extra_info": null,

+ "type": null,

+ "receiver": null,

+ "stream_state": 0

+}

+````

+

+### Consistency of Tool Calling

+

+`ActionExecutor` uses the same communication data structure as `Agent`, but requires the `content` of the input `AgentMessage` to be a dict containing:

+

+- `name`: tool name, e.g. `'IPythonInterpreter'`, `'WebBrowser.search'`.

+- `parameters`: keyword arguments of the tool API, e.g. `{'command': 'import math;math.sqrt(2)'}`, `{'query': ['recent progress in AI']}`.
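The `name`/`parameters` contract can be pictured as a lookup followed by a keyword-argument call. The sketch below is a hypothetical `ToyExecutor`, not Lagent's `ActionExecutor`; it simply treats names such as `'Tool.api'` as flat registry keys, and the `Math.sqrt` and fake search tools are illustrative stubs.

```python
import math
from typing import Any, Callable, Dict


class ToyExecutor:
    """Hypothetical executor that routes {'name', 'parameters'} dicts to callables."""

    def __init__(self) -> None:
        # Keys are tool names such as 'Tool' or 'Tool.api'.
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def __call__(self, content: Dict[str, Any]) -> Any:
        name = content['name']
        if name not in self._tools:
            raise KeyError(f'unknown tool: {name}')
        # `parameters` are forwarded unchanged as keyword arguments.
        return self._tools[name](**content.get('parameters', {}))


def fake_search(query):
    """Stand-in for a web search API: one fake hit per query string."""
    return [f'result for {q}' for q in query]


executor = ToyExecutor()
executor.register('Math.sqrt', lambda x: math.sqrt(x))  # math.sqrt rejects keyword args, so wrap it
executor.register('WebBrowser.search', fake_search)

print(executor({'name': 'Math.sqrt', 'parameters': {'x': 2}}))
print(executor({'name': 'WebBrowser.search',
                'parameters': {'query': ['recent progress in AI']}}))
```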

+

+You can register custom hooks to convert messages to and from this format.

+

+```python

+from lagent.hooks import Hook

+from lagent.schema import ActionReturn, ActionStatusCode, AgentMessage

+from lagent.actions import ActionExecutor, IPythonInteractive

+

+class CodeProcessor(Hook):

+ def before_action(self, executor, message, session_id):

+ message = message.model_copy(deep=True)  # pydantic v2 name for copy(deep=True)

+ message.content = dict(

+ name='IPythonInteractive', parameters={'command': message.formatted['action']}

+ )

+ return message

+

+ def after_action(self, executor, message, session_id):

+ action_return = message.content

+ if isinstance(action_return, ActionReturn):

+ if action_return.state == ActionStatusCode.SUCCESS:

+ response = action_return.format_result()

+ else:

+ response = action_return.errmsg

+ else:

+ response = action_return

+ message.content = response

+ return message

+

+executor = ActionExecutor(actions=[IPythonInteractive()], hooks=[CodeProcessor()])

+bot_msg = AgentMessage(

+ sender='Agent',

+ content='首先,我们需要...',

+ formatted={

+ 'tool_type': 'code interpreter',

+ 'thought': '首先,我们需要...',

+ 'action': 'def find_numbers():\n # 正因数\n positive_factors = [1, 3, 7, 9, 21, 63]\n # 负因数\n negative_factors = [-1, -3, -7, -9, -21, -63]\n \n # 找到与正因数相乘的结果为63的数\n positive_numbers = [63 / factor for factor in positive_factors]\n # 找到与负因数相乘的结果为63的数\n negative_numbers = [-63 / factor for factor in negative_factors]\n \n # 计算两个数的乘积\n product = positive_numbers[0] * negative_numbers[0]\n \n return product\n\nresult = find_numbers()\nprint(result)',

+ 'status': 1

+ })

+executor_msg = executor(bot_msg)

+print(executor_msg)

+```

+

+```

+content='3969.0' sender='ActionExecutor' formatted=None extra_info=None type=None receiver=None stream_state=<AgentStatusCode.END: 0>

+```

+

+**For convenience, Lagent provides `InternLMActionProcessor` which is adapted to messages formatted by `ToolParser` as mentioned above.**

+

+### Dual Interfaces

+

+Lagent adopts a dual-interface design: almost every component (LLMs, actions, action executors, ...) has a corresponding asynchronous variant whose identifier is prefixed with `Async`. Synchronous agents are recommended for debugging and asynchronous ones for large-scale inference, to make the most of idle CPU and GPU resources.

+

+However, make sure each agent is internally consistent: an asynchronous agent should be equipped with an asynchronous LLM and an asynchronous action executor that drives asynchronous tools.

+

+```python

+from lagent.llms import VllmModel, AsyncVllmModel, LMDeployPipeline, AsyncLMDeployPipeline

+from lagent.actions import ActionExecutor, AsyncActionExecutor, WebBrowser, AsyncWebBrowser

+from lagent.agents import Agent, AsyncAgent, AgentForInternLM, AsyncAgentForInternLM

+```
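The benefit of the `Async` variants comes from awaiting many I/O-bound requests at once. The sketch below uses hypothetical `EchoAgent`/`AsyncEchoAgent` classes (not Lagent's `Agent`/`AsyncAgent`) with `asyncio.sleep` standing in for an LLM request. It shows a synchronous twin for debugging and an asynchronous twin driven by `asyncio.gather`, with one `session_id` per request so concurrent conversations keep separate memory.

```python
import asyncio
from collections import defaultdict
from typing import Dict, List


class EchoAgent:
    """Hypothetical synchronous agent with memory kept per session_id."""

    def __init__(self) -> None:
        self.memory: Dict[int, List[str]] = defaultdict(list)

    def __call__(self, text: str, session_id: int = 0) -> str:
        self.memory[session_id].append(text)
        reply = f'echo:{text}'
        self.memory[session_id].append(reply)
        return reply


class AsyncEchoAgent(EchoAgent):
    """'Async' twin: same interface, but awaitable."""

    async def __call__(self, text: str, session_id: int = 0) -> str:
        self.memory[session_id].append(text)
        await asyncio.sleep(0.01)  # stands in for a network-bound LLM request
        reply = f'echo:{text}'
        self.memory[session_id].append(reply)
        return reply


async def run_batch(agent: AsyncEchoAgent, prompts: List[str]) -> List[str]:
    # One session per prompt, so concurrent requests never share memory.
    return list(await asyncio.gather(
        *(agent(p, session_id=i) for i, p in enumerate(prompts))))


sync_agent = EchoAgent()
print(sync_agent('debug me'))  # synchronous: easy to step through

async_agent = AsyncEchoAgent()
replies = asyncio.run(run_batch(async_agent, ['AI', 'Biotechnology', 'New Energy']))
print(replies)
```

Mixing the two, for example awaiting a synchronous LLM inside an async agent, would block the event loop, which is why the components should be kept consistent.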

+

+______________________________________________________________________

+

+## Practice

+

+- **In subclasses, implement `forward` rather than overriding `__call__` unless it is necessary.**

+- **Always pass the `session_id` argument explicitly. It is designed to isolate memory, LLM requests and tool invocations (e.g. to maintain multiple independent IPython environments) under concurrency.**

+

+### Single Agent

+

+A math agent that solves problems by writing and running Python code:

+

+````python

+from lagent.agents.aggregator import InternLMToolAggregator

+

+class Coder(Agent):

+ def __init__(self, model_path, system_prompt, max_turn=3):

+ super().__init__()

+ llm = VllmModel(

+ path=model_path,

+ meta_template=INTERNLM2_META,

+ tp=1,

+ top_k=1,

+ temperature=1.0,

+ stop_words=['\n```\n', '<|im_end|>'],

+ max_new_tokens=1024,

+ )

+ self.agent = Agent(

+ llm,

+ system_prompt,

+ output_format=ToolParser(

+ tool_type='code interpreter', begin='```python\n', end='\n```\n'

+ ),

+ # `InternLMToolAggregator` is adapted to `ToolParser` for aggregating

+ # messages with tool invocations and execution results

+ aggregator=InternLMToolAggregator(),

+ )

+ self.executor = ActionExecutor([IPythonInteractive()], hooks=[CodeProcessor()])

+ self.max_turn = max_turn

+

+ def forward(self, message: AgentMessage, session_id=0) -> AgentMessage:

+ for _ in range(self.max_turn):

+ message = self.agent(message, session_id=session_id)

+ if message.formatted['tool_type'] is None:

+ return message

+ message = self.executor(message, session_id=session_id)

+ return message

+

+coder = Coder('Qwen/Qwen2-7B-Instruct', 'Solve the problem step by step with assistance of Python code')

+query = AgentMessage(

+ sender='user',

+ content='Find the projection of $\\mathbf{a}$ onto $\\mathbf{b} = '

+ '\\begin{pmatrix} 1 \\\\ -3 \\end{pmatrix}$ if $\\mathbf{a} \\cdot \\mathbf{b} = 2.$'

+)

+answer = coder(query)

+print(answer.content)

+print('-' * 120)

+for msg in coder.state_dict()['agent.memory']:

+ print('*' * 80)

+ print(f'{msg["sender"]}:\n\n{msg["content"]}')

+````
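The stop-on-no-tool-call loop in `Coder.forward` can be exercised without a GPU by swapping in stubs. This is a hypothetical sketch: `scripted_llm` replaces the vLLM model and `run_code` replaces `IPythonInteractive` with a bare `exec` that is not sandboxed, so it must never be used on untrusted model output. The scripted model works the projection example above, where the scale factor is a·b / |b|² = 2 / 10.

```python
import contextlib
import io
from typing import Callable, List, Optional

FENCE = '`' * 3
BEGIN = FENCE + 'python\n'


def run_code(code: str) -> str:
    """Toy code interpreter: exec the snippet and capture stdout. NOT sandboxed."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(code, {})
    return buffer.getvalue().strip()


def extract_code(reply: str) -> Optional[str]:
    """Return the code after the opening fence, or None if there is no tool call."""
    return reply.split(BEGIN, 1)[1] if BEGIN in reply else None


def solve(llm: Callable[[List[str]], str], question: str, max_turn: int = 3) -> str:
    """Alternate model turns and code execution, in the shape of Coder.forward."""
    history = [question]
    for _ in range(max_turn):
        reply = llm(history)
        history.append(reply)
        code = extract_code(reply)
        if code is None:  # no tool call: treat the reply as the final answer
            return reply
        history.append(run_code(code))  # feed the execution result back
    return history[-1]  # out of turns: return the last execution result


def scripted_llm(history: List[str]) -> str:
    """Hypothetical stand-in for the model: asks for code once, then answers."""
    if len(history) == 1:
        return 'Compute a.b / |b|^2.\n' + BEGIN + 'print(2 / (1**2 + (-3)**2))\n'
    return f'The scale factor is {history[-1]}, so the projection is {history[-1]} * b.'


print(solve(scripted_llm, 'Project a onto b = (1, -3) given a.b = 2.'))
```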

+

+### Multiple Agents

+

+Asynchronous blogging agents that improve writing quality by self-refinement ([original AutoGen example](https://microsoft.github.io/autogen/0.2/docs/topics/prompting-and-reasoning/reflection/))

+

+```python

+import asyncio

+import os

+from lagent.llms import AsyncGPTAPI

+from lagent.agents import AsyncAgent

+os.environ['OPENAI_API_KEY'] = 'YOUR_API_KEY'

+

+class PrefixedMessageHook(Hook):

+ def __init__(self, prefix: str, senders: list = None):

+ self.prefix = prefix

+ self.senders = senders or []

+

+ def before_agent(self, agent, messages, session_id):

+ for message in messages:

+ if message.sender in self.senders:

+ message.content = self.prefix + message.content

+

+class AsyncBlogger(AsyncAgent):

+ def __init__(self, model_path, writer_prompt, critic_prompt, critic_prefix='', max_turn=3):

+ super().__init__()

+ llm = AsyncGPTAPI(model_type=model_path, retry=5, max_new_tokens=2048)

+ self.writer = AsyncAgent(llm, writer_prompt, name='writer')

+ self.critic = AsyncAgent(

+ llm, critic_prompt, name='critic', hooks=[PrefixedMessageHook(critic_prefix, ['writer'])]

+ )

+ self.max_turn = max_turn

+

+ async def forward(self, message: AgentMessage, session_id=0) -> AgentMessage:

+ for _ in range(self.max_turn):

+ message = await self.writer(message, session_id=session_id)

+ message = await self.critic(message, session_id=session_id)

+ return await self.writer(message, session_id=session_id)

+

+blogger = AsyncBlogger(

+ 'gpt-4o-2024-05-13',

+ writer_prompt="You are a writing assistant tasked with writing engaging blog posts. You try to generate the best blog post possible for the user's request. "

+ "If the user provides critique, then respond with a revised version of your previous attempts",

+ critic_prompt="Generate critique and recommendations on the writing. Provide detailed recommendations, including requests for length, depth, style, etc.",

+ critic_prefix='Reflect and provide critique on the following writing. \n\n',

+)

+user_prompt = (

+ "Write an engaging blogpost on the recent updates in {topic}. "

+ "The blogpost should be engaging and understandable for general audience. "

+ "Should have more than 3 paragraphs but no longer than 1000 words.")

+bot_msgs = asyncio.get_event_loop().run_until_complete(

+ asyncio.gather(

+ *[

+ blogger(AgentMessage(sender='user', content=user_prompt.format(topic=topic)), session_id=i)

+ for i, topic in enumerate(['AI', 'Biotechnology', 'New Energy', 'Video Games', 'Pop Music'])

+ ]

+ )

+)

+print(bot_msgs[0].content)

+print('-' * 120)

+for msg in blogger.state_dict(session_id=0)['writer.memory']:

+ print('*' * 80)

+ print(f'{msg["sender"]}:\n\n{msg["content"]}')

+print('-' * 120)

+for msg in blogger.state_dict(session_id=0)['critic.memory']:

+ print('*' * 80)

+ print(f'{msg["sender"]}:\n\n{msg["content"]}')

+```
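Note that with `max_turn=3`, `AsyncBlogger.forward` calls the writer four times and the critic three times, because of the extra `writer` call after the loop. The synchronous, framework-free sketch below makes that count explicit and shows where the critic prefix is applied. `reflect`, `stub_writer` and `stub_critic` are hypothetical stand-ins for the two agents and for `PrefixedMessageHook`.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (sender, content)


def reflect(writer: Callable[[str], str], critic: Callable[[str], str], request: str,
            critic_prefix: str = '', max_turn: int = 3) -> Tuple[str, List[Message]]:
    """Writer/critic self-refinement in the shape of AsyncBlogger.forward."""
    transcript: List[Message] = [('user', request)]
    message = request
    for _ in range(max_turn):
        message = writer(message)
        transcript.append(('writer', message))
        # Like PrefixedMessageHook: the prefix is added only on the critic's side.
        message = critic(critic_prefix + message)
        transcript.append(('critic', message))
    final = writer(message)  # one extra revision after the last critique
    transcript.append(('writer', final))
    return final, transcript


drafts = iter(['draft v1', 'draft v2', 'draft v3', 'final draft'])


def stub_writer(message: str) -> str:
    return next(drafts)


def stub_critic(message: str) -> str:
    return f'critique of <{message}>'


final, transcript = reflect(stub_writer, stub_critic, 'Write about AI',
                            critic_prefix='Reflect: ', max_turn=3)
print(final)
print([sender for sender, _ in transcript])
```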

+

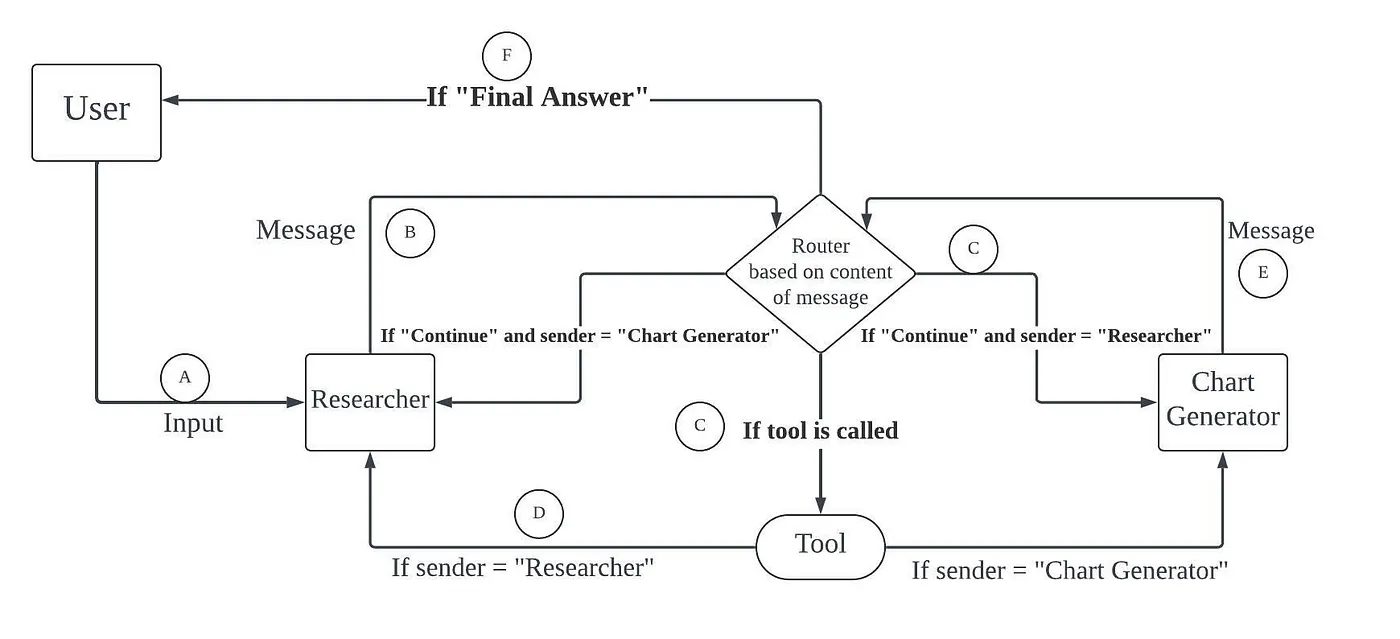

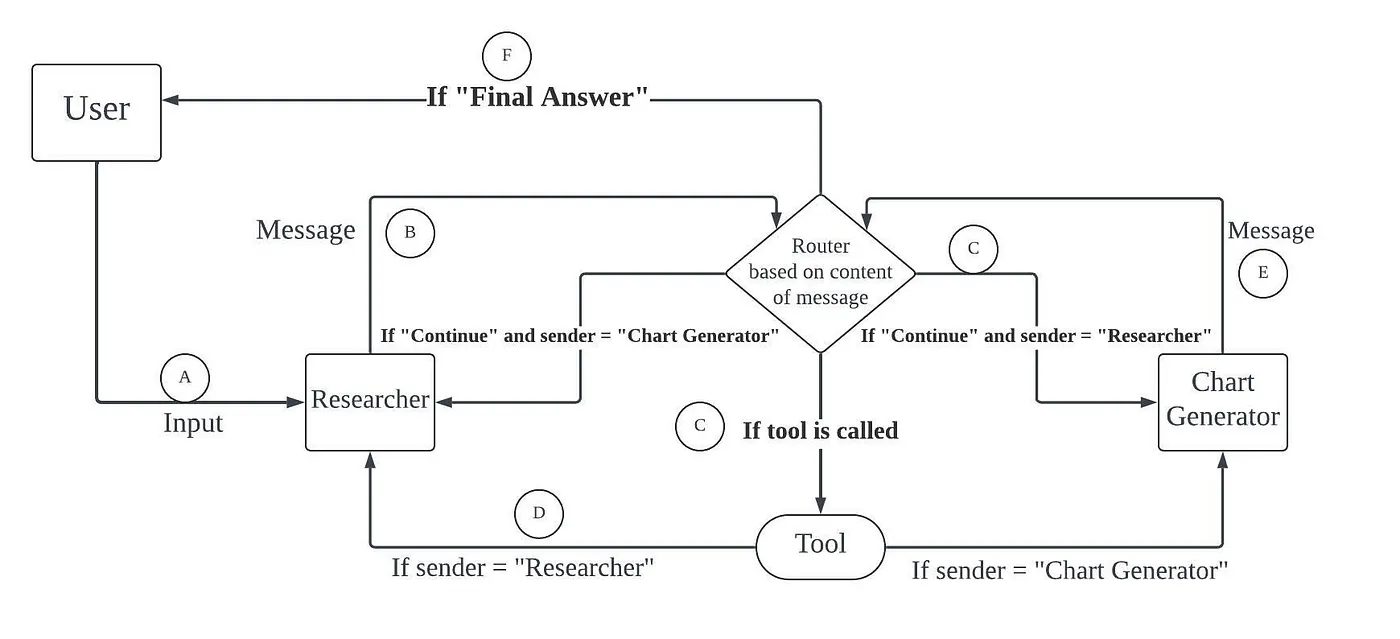

+A multi-agent workflow that performs information retrieval, data collection and chart plotting ([original LangGraph example](https://vijaykumarkartha.medium.com/multiple-ai-agents-creating-multi-agent-workflows-using-langgraph-and-langchain-0587406ec4e6))

+

+

+

+

diff --git a/lagent/lagent.egg-info/SOURCES.txt b/lagent/lagent.egg-info/SOURCES.txt

new file mode 100644

index 0000000000000000000000000000000000000000..7c2d07daed3cc5f3d3c344a618941479237907eb

--- /dev/null

+++ b/lagent/lagent.egg-info/SOURCES.txt

@@ -0,0 +1,71 @@

+LICENSE

+MANIFEST.in

+README.md

+setup.cfg

+setup.py

+lagent/__init__.py

+lagent/schema.py

+lagent/version.py

+lagent.egg-info/PKG-INFO

+lagent.egg-info/SOURCES.txt

+lagent.egg-info/dependency_links.txt

+lagent.egg-info/requires.txt

+lagent.egg-info/top_level.txt

+lagent/actions/__init__.py

+lagent/actions/action_executor.py

+lagent/actions/arxiv_search.py

+lagent/actions/base_action.py

+lagent/actions/bing_map.py

+lagent/actions/builtin_actions.py

+lagent/actions/google_scholar_search.py

+lagent/actions/google_search.py

+lagent/actions/ipython_interactive.py

+lagent/actions/ipython_interpreter.py

+lagent/actions/ipython_manager.py

+lagent/actions/parser.py

+lagent/actions/ppt.py

+lagent/actions/python_interpreter.py

+lagent/actions/web_browser.py

+lagent/agents/__init__.py

+lagent/agents/agent.py

+lagent/agents/react.py

+lagent/agents/stream.py

+lagent/agents/aggregator/__init__.py

+lagent/agents/aggregator/default_aggregator.py

+lagent/agents/aggregator/tool_aggregator.py

+lagent/distributed/__init__.py

+lagent/distributed/http_serve/__init__.py

+lagent/distributed/http_serve/api_server.py

+lagent/distributed/http_serve/app.py

+lagent/distributed/ray_serve/__init__.py

+lagent/distributed/ray_serve/ray_warpper.py

+lagent/hooks/__init__.py

+lagent/hooks/action_preprocessor.py

+lagent/hooks/hook.py

+lagent/hooks/logger.py

+lagent/llms/__init__.py

+lagent/llms/base_api.py

+lagent/llms/base_llm.py

+lagent/llms/huggingface.py

+lagent/llms/lmdeploy_wrapper.py

+lagent/llms/meta_template.py

+lagent/llms/openai.py

+lagent/llms/sensenova.py

+lagent/llms/vllm_wrapper.py

+lagent/memory/__init__.py

+lagent/memory/base_memory.py

+lagent/memory/manager.py

+lagent/prompts/__init__.py

+lagent/prompts/prompt_template.py

+lagent/prompts/parsers/__init__.py

+lagent/prompts/parsers/custom_parser.py

+lagent/prompts/parsers/json_parser.py

+lagent/prompts/parsers/str_parser.py

+lagent/prompts/parsers/tool_parser.py

+lagent/utils/__init__.py

+lagent/utils/gen_key.py

+lagent/utils/package.py

+lagent/utils/util.py

+requirements/docs.txt

+requirements/optional.txt

+requirements/runtime.txt

\ No newline at end of file

diff --git a/lagent/lagent.egg-info/dependency_links.txt b/lagent/lagent.egg-info/dependency_links.txt

new file mode 100644

index 0000000000000000000000000000000000000000..8b137891791fe96927ad78e64b0aad7bded08bdc

--- /dev/null

+++ b/lagent/lagent.egg-info/dependency_links.txt

@@ -0,0 +1 @@

+

diff --git a/lagent/lagent.egg-info/requires.txt b/lagent/lagent.egg-info/requires.txt

new file mode 100644

index 0000000000000000000000000000000000000000..cfd987460eac9660713576d83741d17b3022d711

--- /dev/null

+++ b/lagent/lagent.egg-info/requires.txt

@@ -0,0 +1,59 @@

+aiohttp

+arxiv

+asyncache

+asyncer

+distro

+duckduckgo_search==5.3.1b1

+filelock

+func_timeout

+griffe<1.0

+json5

+jsonschema

+jupyter==1.0.0

+jupyter_client==8.6.2

+jupyter_core==5.7.2

+pydantic==2.6.4

+requests

+termcolor

+tiktoken

+timeout-decorator

+typing-extensions

+

+[all]

+google-search-results

+lmdeploy>=0.2.5

+pillow

+python-pptx

+timeout_decorator

+torch

+transformers<=4.40,>=4.34

+vllm>=0.3.3

+aiohttp

+arxiv

+asyncache

+asyncer

+distro

+duckduckgo_search==5.3.1b1

+filelock

+func_timeout

+griffe<1.0

+json5

+jsonschema

+jupyter==1.0.0

+jupyter_client==8.6.2

+jupyter_core==5.7.2

+pydantic==2.6.4

+requests

+termcolor

+tiktoken

+typing-extensions

+

+[optional]

+google-search-results

+lmdeploy>=0.2.5

+pillow

+python-pptx

+timeout_decorator

+torch

+transformers<=4.40,>=4.34

+vllm>=0.3.3

diff --git a/lagent/lagent.egg-info/top_level.txt b/lagent/lagent.egg-info/top_level.txt

new file mode 100644

index 0000000000000000000000000000000000000000..9273dc63a1927785084010f14533cbb6197c40d7

--- /dev/null

+++ b/lagent/lagent.egg-info/top_level.txt

@@ -0,0 +1 @@

+lagent

diff --git a/lagent/lagent/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..a2a7083c03ec746f4e118ee386346ad9b4e53813

Binary files /dev/null and b/lagent/lagent/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/__pycache__/schema.cpython-310.pyc b/lagent/lagent/__pycache__/schema.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..d9f16c159f74491ef6a21517cef5b740808fccd0

Binary files /dev/null and b/lagent/lagent/__pycache__/schema.cpython-310.pyc differ

diff --git a/lagent/lagent/__pycache__/version.cpython-310.pyc b/lagent/lagent/__pycache__/version.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..0b4ee364772fff2f87d2bb492e9a5c54c508df48

Binary files /dev/null and b/lagent/lagent/__pycache__/version.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/.ipynb_checkpoints/__init__-checkpoint.py b/lagent/lagent/actions/.ipynb_checkpoints/__init__-checkpoint.py

new file mode 100644

index 0000000000000000000000000000000000000000..6f777710682ce566d813dfcff56a1f93e37e9845

--- /dev/null

+++ b/lagent/lagent/actions/.ipynb_checkpoints/__init__-checkpoint.py

@@ -0,0 +1,26 @@

+from .action_executor import ActionExecutor, AsyncActionExecutor

+from .arxiv_search import ArxivSearch, AsyncArxivSearch

+from .base_action import BaseAction, tool_api

+from .bing_map import AsyncBINGMap, BINGMap

+from .builtin_actions import FinishAction, InvalidAction, NoAction

+from .google_scholar_search import AsyncGoogleScholar, GoogleScholar

+from .google_search import AsyncGoogleSearch, GoogleSearch

+from .ipython_interactive import AsyncIPythonInteractive, IPythonInteractive

+from .ipython_interpreter import AsyncIPythonInterpreter, IPythonInterpreter

+from .ipython_manager import IPythonInteractiveManager

+from .parser import BaseParser, JsonParser, TupleParser

+from .ppt import PPT, AsyncPPT

+from .python_interpreter import AsyncPythonInterpreter, PythonInterpreter

+from .web_browser import AsyncWebBrowser, WebBrowser

+from .weather_query import WeatherQuery

+

+__all__ = [

+ 'BaseAction', 'ActionExecutor', 'AsyncActionExecutor', 'InvalidAction',

+ 'FinishAction', 'NoAction', 'BINGMap', 'AsyncBINGMap', 'ArxivSearch',

+ 'AsyncArxivSearch', 'GoogleSearch', 'AsyncGoogleSearch', 'GoogleScholar',

+ 'AsyncGoogleScholar', 'IPythonInterpreter', 'AsyncIPythonInterpreter',

+ 'IPythonInteractive', 'AsyncIPythonInteractive',

+ 'IPythonInteractiveManager', 'PythonInterpreter', 'AsyncPythonInterpreter',

+ 'PPT', 'AsyncPPT', 'WebBrowser', 'AsyncWebBrowser', 'BaseParser',

+ 'JsonParser', 'TupleParser', 'tool_api', 'WeatherQuery'

+]

diff --git a/lagent/lagent/actions/.ipynb_checkpoints/weather_query-checkpoint.py b/lagent/lagent/actions/.ipynb_checkpoints/weather_query-checkpoint.py

new file mode 100644

index 0000000000000000000000000000000000000000..dbe3e991dbca34e0a6d373d62d457c7237317741

--- /dev/null

+++ b/lagent/lagent/actions/.ipynb_checkpoints/weather_query-checkpoint.py

@@ -0,0 +1,71 @@

+import os

+import requests

+from lagent.actions.base_action import BaseAction, tool_api

+from lagent.schema import ActionReturn, ActionStatusCode

+

+class WeatherQuery(BaseAction):

+ def __init__(self):

+ super().__init__()

+ self.api_key = os.getenv("weather_token")

+ if not self.api_key:

+ raise EnvironmentError("Environment variable 'weather_token' not found. Set your QWeather API key in it, e.g. export weather_token='xxx'")

+

+ @tool_api

+ def run(self, location: str) -> dict:

+ """

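+ Query real-time weather information.

+

+ Args:

+ location (str): The place name, LocationID, or longitude,latitude coordinates to query (e.g. "101010100" or "116.41,39.92").

+

+ Returns:

+ dict: A dictionary containing the weather information

+ * location: place name

+ * weather: weather conditions

+ * temperature: current temperature

+ * wind_direction: wind direction

+ * wind_speed: wind speed (km/h)

+ * humidity: relative humidity (%)

+ * report_time: time the data was reported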

+ """

+ try:

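+ # If location is not a coordinate pair (e.g. "116.41,39.92"), resolve it to a LocationID via the GeoAPI

+ location_name = location

+ if not ("," in location and location.replace(",", "").replace(".", "").isdigit()):

+ # Look up the LocationID with the GeoAPI; the timeout keeps a stalled request from hanging the agent

+ geo_url = "https://geoapi.qweather.com/v2/city/lookup"

+ geo_response = requests.get(geo_url, params={"location": location, "key": self.api_key}, timeout=10)

+ geo_data = geo_response.json()

+

+ if geo_data.get("code") != "200" or not geo_data.get("location"):

+ raise Exception(f"GeoAPI returned error code {geo_data.get('code')} or found no matching location")

+

+ # Keep the human-readable name for the result; query the weather API by ID

+ location_name = geo_data["location"][0]["name"]

+ location = geo_data["location"][0]["id"]

+

+ # Build the real-time weather API request

+ weather_url = "https://devapi.qweather.com/v7/weather/now"

+ response = requests.get(weather_url, params={"location": location, "key": self.api_key}, timeout=10)

+ data = response.json()

+

+ # Check the API response code

+ if data.get("code") != "200":

+ raise Exception(f"Weather API returned error code {data.get('code')}")

+

+ # Parse and organize the weather information

+ weather_info = {

+ "location": location_name,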

+ "weather": data["now"]["text"],

+ "temperature": data["now"]["temp"] + "°C",

+ "wind_direction": data["now"]["windDir"],

+ "wind_speed": data["now"]["windSpeed"] + " km/h",

+ "humidity": data["now"]["humidity"] + "%",

+ "report_time": data["updateTime"]

+ }

+

+ return {"result": weather_info}

+

+ except Exception as exc:

+ return ActionReturn(

+ errmsg=f"WeatherQuery error: {exc}",

+ state=ActionStatusCode.HTTP_ERROR

+ )

\ No newline at end of file
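The coordinate test in `run` is a string heuristic. It accepts any input that contains a comma and consists only of digits once commas and dots are removed. The Python sketch below uses the standard library only. It reproduces that check as `legacy_is_coordinates` and contrasts it with `parse_coordinates`, a hypothetical stricter parser that is not part of lagent or the QWeather API. The parser assumes the longitude,latitude order shown in the docstring example ("116.41,39.92").

```python
from typing import Optional, Tuple


def legacy_is_coordinates(location: str) -> bool:
    """Mirrors the string check used in WeatherQuery.run."""
    return "," in location and location.replace(",", "").replace(".", "").isdigit()


def parse_coordinates(location: str) -> Optional[Tuple[float, float]]:
    """Hypothetical stricter check: exactly two numbers, read as (longitude, latitude)."""
    parts = location.split(",")
    if len(parts) != 2:
        return None
    try:
        lon, lat = float(parts[0]), float(parts[1])
    except ValueError:
        return None
    # Reject values outside the valid longitude/latitude ranges (NaN fails both comparisons)
    if -180.0 <= lon <= 180.0 and -90.0 <= lat <= 90.0:
        return lon, lat
    return None


for sample in ["116.41,39.92", "-74.00,40.71", "1,2,3", "101010100"]:
    print(sample, legacy_is_coordinates(sample), parse_coordinates(sample))
```

Under the heuristic, `"-74.00,40.71"` (a negative longitude) is sent to the GeoAPI as a place name, while `"1,2,3"` goes straight to the weather endpoint. The stricter parser treats the first as coordinates and rejects the second.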

diff --git a/lagent/lagent/actions/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/actions/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..8433e9502db9f81e119c018362eb490d1a5f8bc1

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/action_executor.cpython-310.pyc b/lagent/lagent/actions/__pycache__/action_executor.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..31fba326e2d57a21dbf98682f21708d5a3772a5b

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/action_executor.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/arxiv_search.cpython-310.pyc b/lagent/lagent/actions/__pycache__/arxiv_search.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..64dd5199aabe7aac2e73f9245b5746a8974d4ee7

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/arxiv_search.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/base_action.cpython-310.pyc b/lagent/lagent/actions/__pycache__/base_action.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..87d76639d9207e6db6e25bec2085555665661ad1

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/base_action.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/bing_map.cpython-310.pyc b/lagent/lagent/actions/__pycache__/bing_map.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..4c612bbc58db6e9e1277ff5aab005ae05e1e9fea

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/bing_map.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/builtin_actions.cpython-310.pyc b/lagent/lagent/actions/__pycache__/builtin_actions.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..434eb0b732e3dcdca70204ebe04fbf40f994f493

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/builtin_actions.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/google_scholar_search.cpython-310.pyc b/lagent/lagent/actions/__pycache__/google_scholar_search.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..2c7ca9a06b10db4759cc3f36eb1b6ad364052faf

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/google_scholar_search.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/google_search.cpython-310.pyc b/lagent/lagent/actions/__pycache__/google_search.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..907e338713f4b1113c8120b2c2e51520fb10dd54

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/google_search.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/ipython_interactive.cpython-310.pyc b/lagent/lagent/actions/__pycache__/ipython_interactive.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..2e0abb402643ed64e278323b74d40f452ec8dcd1

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/ipython_interactive.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/ipython_interpreter.cpython-310.pyc b/lagent/lagent/actions/__pycache__/ipython_interpreter.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..c2df4a78379ffefd19da96bfd6150d32dd36f0c9

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/ipython_interpreter.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/ipython_manager.cpython-310.pyc b/lagent/lagent/actions/__pycache__/ipython_manager.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..aa3577d6ae9a06bfe603f38f9d8eb081dfd48067

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/ipython_manager.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/parser.cpython-310.pyc b/lagent/lagent/actions/__pycache__/parser.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..a34a6f782b0ed71dd27849270129f333cd752623

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/parser.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/ppt.cpython-310.pyc b/lagent/lagent/actions/__pycache__/ppt.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..9b4090e7259e601418e7a3ec13a9c6b507bed0df

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/ppt.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/python_interpreter.cpython-310.pyc b/lagent/lagent/actions/__pycache__/python_interpreter.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..63071b9e3a9c9e4a25413bbc598e1e6c53405f48

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/python_interpreter.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/weather_query.cpython-310.pyc b/lagent/lagent/actions/__pycache__/weather_query.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..9f41cd9cd395e158c2838436688d2555f4518258

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/weather_query.cpython-310.pyc differ

diff --git a/lagent/lagent/actions/__pycache__/web_browser.cpython-310.pyc b/lagent/lagent/actions/__pycache__/web_browser.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..d6e289a1e727145bdb83e979b2f70f9498ae8f86

Binary files /dev/null and b/lagent/lagent/actions/__pycache__/web_browser.cpython-310.pyc differ

diff --git a/lagent/lagent/agents/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/agents/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..2fc49b1165a8a9f98446244f2447110ae93972d9

Binary files /dev/null and b/lagent/lagent/agents/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/agents/__pycache__/agent.cpython-310.pyc b/lagent/lagent/agents/__pycache__/agent.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..19b9a948bb233a6c08545da07529d84a9943eaff

Binary files /dev/null and b/lagent/lagent/agents/__pycache__/agent.cpython-310.pyc differ

diff --git a/lagent/lagent/agents/__pycache__/react.cpython-310.pyc b/lagent/lagent/agents/__pycache__/react.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..9a93e697c148425b2a0ce2d3f92757e5d207cc1d

Binary files /dev/null and b/lagent/lagent/agents/__pycache__/react.cpython-310.pyc differ

diff --git a/lagent/lagent/agents/__pycache__/stream.cpython-310.pyc b/lagent/lagent/agents/__pycache__/stream.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..cc2fb75b6cc717d175037a27b4151ad60050bb56

Binary files /dev/null and b/lagent/lagent/agents/__pycache__/stream.cpython-310.pyc differ

diff --git a/lagent/lagent/agents/aggregator/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/agents/aggregator/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..af9aa21ebfcc4829e4483513750967bbb211ceb4

Binary files /dev/null and b/lagent/lagent/agents/aggregator/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/agents/aggregator/__pycache__/default_aggregator.cpython-310.pyc b/lagent/lagent/agents/aggregator/__pycache__/default_aggregator.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..aa9d10599b6ba9f9b9558630b5e1a0172c30e5a1

Binary files /dev/null and b/lagent/lagent/agents/aggregator/__pycache__/default_aggregator.cpython-310.pyc differ

diff --git a/lagent/lagent/agents/aggregator/__pycache__/tool_aggregator.cpython-310.pyc b/lagent/lagent/agents/aggregator/__pycache__/tool_aggregator.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..62c7a3146cd7f466ae15254695f16eaf427bea04

Binary files /dev/null and b/lagent/lagent/agents/aggregator/__pycache__/tool_aggregator.cpython-310.pyc differ

diff --git a/lagent/lagent/hooks/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/hooks/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..b21b36e7d9ab1967593907a70d2e90de8c3c828d

Binary files /dev/null and b/lagent/lagent/hooks/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/hooks/__pycache__/action_preprocessor.cpython-310.pyc b/lagent/lagent/hooks/__pycache__/action_preprocessor.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..b0ca6d078355f35e5dcb403f60d74944e302e8b4

Binary files /dev/null and b/lagent/lagent/hooks/__pycache__/action_preprocessor.cpython-310.pyc differ

diff --git a/lagent/lagent/hooks/__pycache__/hook.cpython-310.pyc b/lagent/lagent/hooks/__pycache__/hook.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..add51fe063aca584d41140bd9e34a284ca433983

Binary files /dev/null and b/lagent/lagent/hooks/__pycache__/hook.cpython-310.pyc differ

diff --git a/lagent/lagent/hooks/__pycache__/logger.cpython-310.pyc b/lagent/lagent/hooks/__pycache__/logger.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..076817a0fb043f376de84455dd7011a1db93eb09

Binary files /dev/null and b/lagent/lagent/hooks/__pycache__/logger.cpython-310.pyc differ

diff --git a/lagent/lagent/llms/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/llms/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..5f21329054d70c95d223acbcebd1e591368a96cf

Binary files /dev/null and b/lagent/lagent/llms/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/llms/__pycache__/base_api.cpython-310.pyc b/lagent/lagent/llms/__pycache__/base_api.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..aad0699605cebec582d5fb191cfef8372dd0846e

Binary files /dev/null and b/lagent/lagent/llms/__pycache__/base_api.cpython-310.pyc differ

diff --git a/lagent/lagent/llms/__pycache__/base_llm.cpython-310.pyc b/lagent/lagent/llms/__pycache__/base_llm.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..cc91416427736546a954f0a1c72a49341a0212a9

Binary files /dev/null and b/lagent/lagent/llms/__pycache__/base_llm.cpython-310.pyc differ

diff --git a/lagent/lagent/llms/__pycache__/huggingface.cpython-310.pyc b/lagent/lagent/llms/__pycache__/huggingface.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..ab54da8d9cc004d84188ca58011c29f8554da92b

Binary files /dev/null and b/lagent/lagent/llms/__pycache__/huggingface.cpython-310.pyc differ

diff --git a/lagent/lagent/llms/__pycache__/lmdeploy_wrapper.cpython-310.pyc b/lagent/lagent/llms/__pycache__/lmdeploy_wrapper.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..02ae76198cc6ed1bb9806c230b690f2edd323b22

Binary files /dev/null and b/lagent/lagent/llms/__pycache__/lmdeploy_wrapper.cpython-310.pyc differ

diff --git a/lagent/lagent/llms/__pycache__/meta_template.cpython-310.pyc b/lagent/lagent/llms/__pycache__/meta_template.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..ac1fb5a9760305aad93aa938e5ccccbacddd1386

Binary files /dev/null and b/lagent/lagent/llms/__pycache__/meta_template.cpython-310.pyc differ

diff --git a/lagent/lagent/llms/__pycache__/openai.cpython-310.pyc b/lagent/lagent/llms/__pycache__/openai.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..587590becfb28afe0be3c95141a2a60c2ddaa3dd

Binary files /dev/null and b/lagent/lagent/llms/__pycache__/openai.cpython-310.pyc differ

diff --git a/lagent/lagent/llms/__pycache__/sensenova.cpython-310.pyc b/lagent/lagent/llms/__pycache__/sensenova.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..3babdc9700fc1e92b149fb54d7b61efeca0eff32

Binary files /dev/null and b/lagent/lagent/llms/__pycache__/sensenova.cpython-310.pyc differ

diff --git a/lagent/lagent/llms/__pycache__/vllm_wrapper.cpython-310.pyc b/lagent/lagent/llms/__pycache__/vllm_wrapper.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..ffdff226d0e4d8e073ea232a22238e3ab391a54e

Binary files /dev/null and b/lagent/lagent/llms/__pycache__/vllm_wrapper.cpython-310.pyc differ

diff --git a/lagent/lagent/memory/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/memory/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..d7b32d2587ff4c4c64f06f1fafb4f37da05f4400

Binary files /dev/null and b/lagent/lagent/memory/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/memory/__pycache__/base_memory.cpython-310.pyc b/lagent/lagent/memory/__pycache__/base_memory.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..74331ffa7ab3611142a2373a300df04ca06f16ba

Binary files /dev/null and b/lagent/lagent/memory/__pycache__/base_memory.cpython-310.pyc differ

diff --git a/lagent/lagent/memory/__pycache__/manager.cpython-310.pyc b/lagent/lagent/memory/__pycache__/manager.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..baf30b1b6108d44ca1464691f2ee65eae11fef8d

Binary files /dev/null and b/lagent/lagent/memory/__pycache__/manager.cpython-310.pyc differ

diff --git a/lagent/lagent/prompts/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/prompts/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..2ba5df37cf4dc6fe57a34370391b2f2e10560241

Binary files /dev/null and b/lagent/lagent/prompts/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/prompts/__pycache__/prompt_template.cpython-310.pyc b/lagent/lagent/prompts/__pycache__/prompt_template.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..0202f1a8e6a2b9d44d6e6cc9922d85ff41afc6ea

Binary files /dev/null and b/lagent/lagent/prompts/__pycache__/prompt_template.cpython-310.pyc differ

diff --git a/lagent/lagent/prompts/parsers/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/prompts/parsers/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..36acdfbdece6a8d096cc81bd4c982a37a1b7f69e

Binary files /dev/null and b/lagent/lagent/prompts/parsers/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/prompts/parsers/__pycache__/custom_parser.cpython-310.pyc b/lagent/lagent/prompts/parsers/__pycache__/custom_parser.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..43989b1b75c13d2b4f8fae5f9695e804dddd1438

Binary files /dev/null and b/lagent/lagent/prompts/parsers/__pycache__/custom_parser.cpython-310.pyc differ

diff --git a/lagent/lagent/prompts/parsers/__pycache__/json_parser.cpython-310.pyc b/lagent/lagent/prompts/parsers/__pycache__/json_parser.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..0506149bff3d6c9377a929b38ec960498c4fab1c

Binary files /dev/null and b/lagent/lagent/prompts/parsers/__pycache__/json_parser.cpython-310.pyc differ

diff --git a/lagent/lagent/prompts/parsers/__pycache__/str_parser.cpython-310.pyc b/lagent/lagent/prompts/parsers/__pycache__/str_parser.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..f4c650dbbb2e75efdeaa6d8d63efc74e4bc7dfa2

Binary files /dev/null and b/lagent/lagent/prompts/parsers/__pycache__/str_parser.cpython-310.pyc differ

diff --git a/lagent/lagent/prompts/parsers/__pycache__/tool_parser.cpython-310.pyc b/lagent/lagent/prompts/parsers/__pycache__/tool_parser.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..acc37708285b63eeac1fad724e7baf2f80efc661

Binary files /dev/null and b/lagent/lagent/prompts/parsers/__pycache__/tool_parser.cpython-310.pyc differ

diff --git a/lagent/lagent/utils/__pycache__/__init__.cpython-310.pyc b/lagent/lagent/utils/__pycache__/__init__.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..08d886129bba004000bdf7ff8c4bee329022a2b4

Binary files /dev/null and b/lagent/lagent/utils/__pycache__/__init__.cpython-310.pyc differ

diff --git a/lagent/lagent/utils/__pycache__/package.cpython-310.pyc b/lagent/lagent/utils/__pycache__/package.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..3eabc80378bf842e54d7ab63a8040bfd6108c296

Binary files /dev/null and b/lagent/lagent/utils/__pycache__/package.cpython-310.pyc differ

diff --git a/lagent/lagent/utils/__pycache__/util.cpython-310.pyc b/lagent/lagent/utils/__pycache__/util.cpython-310.pyc

new file mode 100644

index 0000000000000000000000000000000000000000..e480145be12162eb9be800047a11bd2643c59bff

Binary files /dev/null and b/lagent/lagent/utils/__pycache__/util.cpython-310.pyc differ

+

+[](https://lagent.readthedocs.io/en/latest/)

+[](https://pypi.org/project/lagent)

+[](https://github.com/InternLM/lagent/tree/main/LICENSE)

+[](https://github.com/InternLM/lagent/issues)

+[](https://github.com/InternLM/lagent/issues)

+

+

+

+

+

+

+

+

+

+

+

+

+ +

+