Sync local Space with Hub

This view is limited to 50 files because it contains too many changes.

- .gitattributes +1 -0

- index.html +336 -369

- static/images/confusion_matrixes.png +0 -0

- static/images/correlation_table.png +0 -0

- static/images/favicon.svg +0 -37

- static/images/grounded_qa_cases.png +0 -0

- static/images/interpolate_end.jpg +0 -0

- static/images/interpolate_start.jpg +0 -0

- static/images/matrices.png +0 -0

- static/images/meme.jpeg +0 -0

- static/images/{steve.webm → one_sample.png} +2 -2

- static/images/result_table.png +0 -0

- static/images/schema_unit_test.png +0 -0

- static/images/schema_unit_test_blog.png +0 -0

- static/interpolation/stacked/000000.jpg +0 -0

- static/interpolation/stacked/000001.jpg +0 -0

- static/interpolation/stacked/000002.jpg +0 -0

- static/interpolation/stacked/000003.jpg +0 -0

- static/interpolation/stacked/000004.jpg +0 -0

- static/interpolation/stacked/000005.jpg +0 -0

- static/interpolation/stacked/000006.jpg +0 -0

- static/interpolation/stacked/000007.jpg +0 -0

- static/interpolation/stacked/000008.jpg +0 -0

- static/interpolation/stacked/000009.jpg +0 -0

- static/interpolation/stacked/000010.jpg +0 -0

- static/interpolation/stacked/000011.jpg +0 -0

- static/interpolation/stacked/000012.jpg +0 -0

- static/interpolation/stacked/000013.jpg +0 -0

- static/interpolation/stacked/000014.jpg +0 -0

- static/interpolation/stacked/000015.jpg +0 -0

- static/interpolation/stacked/000016.jpg +0 -0

- static/interpolation/stacked/000017.jpg +0 -0

- static/interpolation/stacked/000018.jpg +0 -0

- static/interpolation/stacked/000019.jpg +0 -0

- static/interpolation/stacked/000020.jpg +0 -0

- static/interpolation/stacked/000021.jpg +0 -0

- static/interpolation/stacked/000022.jpg +0 -0

- static/interpolation/stacked/000023.jpg +0 -0

- static/interpolation/stacked/000024.jpg +0 -0

- static/interpolation/stacked/000025.jpg +0 -0

- static/interpolation/stacked/000026.jpg +0 -0

- static/interpolation/stacked/000027.jpg +0 -0

- static/interpolation/stacked/000028.jpg +0 -0

- static/interpolation/stacked/000029.jpg +0 -0

- static/interpolation/stacked/000030.jpg +0 -0

- static/interpolation/stacked/000031.jpg +0 -0

- static/interpolation/stacked/000032.jpg +0 -0

- static/interpolation/stacked/000033.jpg +0 -0

- static/interpolation/stacked/000034.jpg +0 -0

- static/interpolation/stacked/000035.jpg +0 -0

.gitattributes

CHANGED

|

@@ -46,3 +46,4 @@ static/videos/shiba.mp4 filter=lfs diff=lfs merge=lfs -text

|

|

| 46 |

static/videos/steve.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 47 |

static/videos/teaser.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 48 |

static/videos/toby.mp4 filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

| 46 |

static/videos/steve.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 47 |

static/videos/teaser.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 48 |

static/videos/toby.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 49 |

+

static/images/one_sample.png filter=lfs diff=lfs merge=lfs -text

|

index.html

CHANGED

|

@@ -1,435 +1,402 @@

|

|

| 1 |

<!DOCTYPE html>

|

| 2 |

<html>

|

|

|

|

| 3 |

<head>

|

| 4 |

<meta charset="utf-8">

|

| 5 |

<meta name="description"

|

| 6 |

-

|

| 7 |

<meta name="keywords" content="Nerfies, D-NeRF, NeRF">

|

| 8 |

<meta name="viewport" content="width=device-width, initial-scale=1">

|

| 9 |

-

<title>

|

|

|

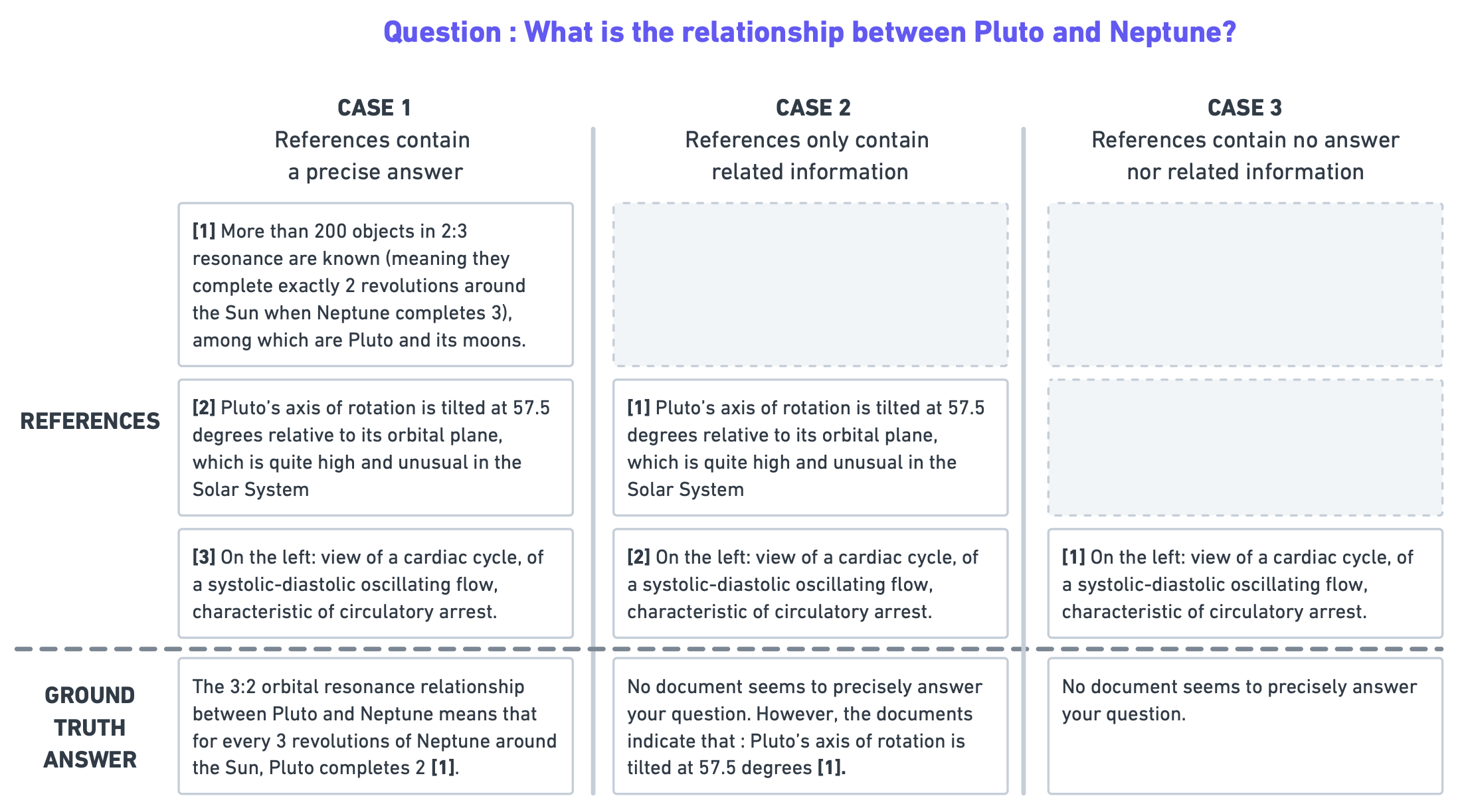

|

| 10 |

|

| 11 |

-

<link href="https://fonts.googleapis.com/css?family=Google+Sans|Noto+Sans|Castoro"

|

| 12 |

-

rel="stylesheet">

|

| 13 |

|

| 14 |

<link rel="stylesheet" href="./static/css/bulma.min.css">

|

| 15 |

-

<link rel="stylesheet" href="./static/css/bulma-carousel.min.css">

|

| 16 |

-

<link rel="stylesheet" href="./static/css/bulma-slider.min.css">

|

| 17 |

<link rel="stylesheet" href="./static/css/fontawesome.all.min.css">

|

| 18 |

-

<link rel="stylesheet"

|

| 19 |

-

href="https://cdn.jsdelivr.net/gh/jpswalsh/academicons@1/css/academicons.min.css">

|

| 20 |

<link rel="stylesheet" href="./static/css/index.css">

|

| 21 |

<link rel="icon" href="./static/images/favicon.svg">

|

| 22 |

|

|

|

|

|

|

|

| 23 |

<script src="https://ajax.googleapis.com/ajax/libs/jquery/3.5.1/jquery.min.js"></script>

|

| 24 |

<script defer src="./static/js/fontawesome.all.min.js"></script>

|

| 25 |

<script src="./static/js/bulma-carousel.min.js"></script>

|

| 26 |

<script src="./static/js/bulma-slider.min.js"></script>

|

| 27 |

<script src="./static/js/index.js"></script>

|

| 28 |

</head>

|

|

|

|

| 29 |

<body>

|

| 30 |

|

| 31 |

-

<section class="hero">

|

| 32 |

-

|

| 33 |

-

|

| 34 |

-

|

| 35 |

-

|

| 36 |

-

|

| 37 |

-

|

| 38 |

-

<

|

| 39 |

-

<

|

| 40 |

-

|

| 41 |

-

<

|

| 42 |

-

|

| 43 |

-

|

| 44 |

-

|

| 45 |

-

|

| 46 |

-

|

| 47 |

-

|

| 48 |

-

|

| 49 |

-

|

| 50 |

-

</

|

| 51 |

-

<span class="author-block">

|

| 52 |

-

<a href="https://homes.cs.washington.edu/~seitz/" target="_blank">Steven M. Seitz</a><sup>1,2</sup>,

|

| 53 |

-

</span>

|

| 54 |

-

<span class="author-block">

|

| 55 |

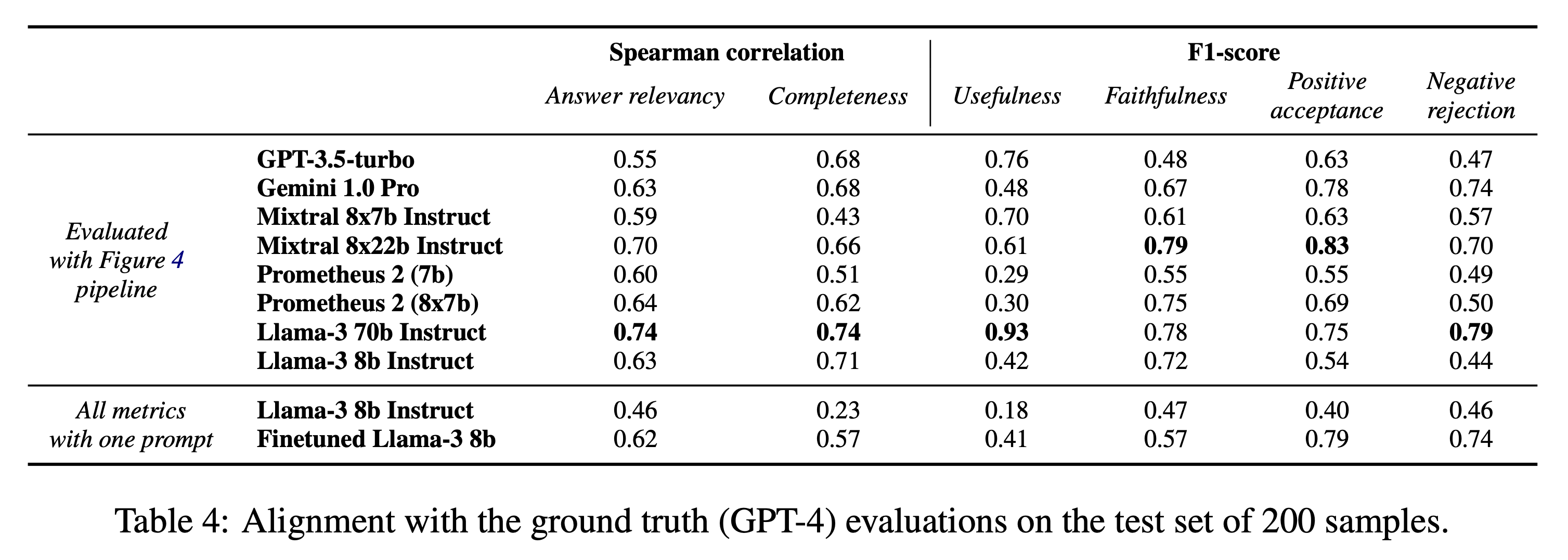

-

<a href="http://www.ricardomartinbrualla.com" target="_blank">Ricardo Martin-Brualla</a><sup>2</sup>

|

| 56 |

-

</span>

|

| 57 |

-

</div>

|

| 58 |

|

| 59 |

-

|

| 60 |

-

|

| 61 |

-

|

| 62 |

-

</div>

|

| 63 |

|

| 64 |

-

|

| 65 |

-

|

| 66 |

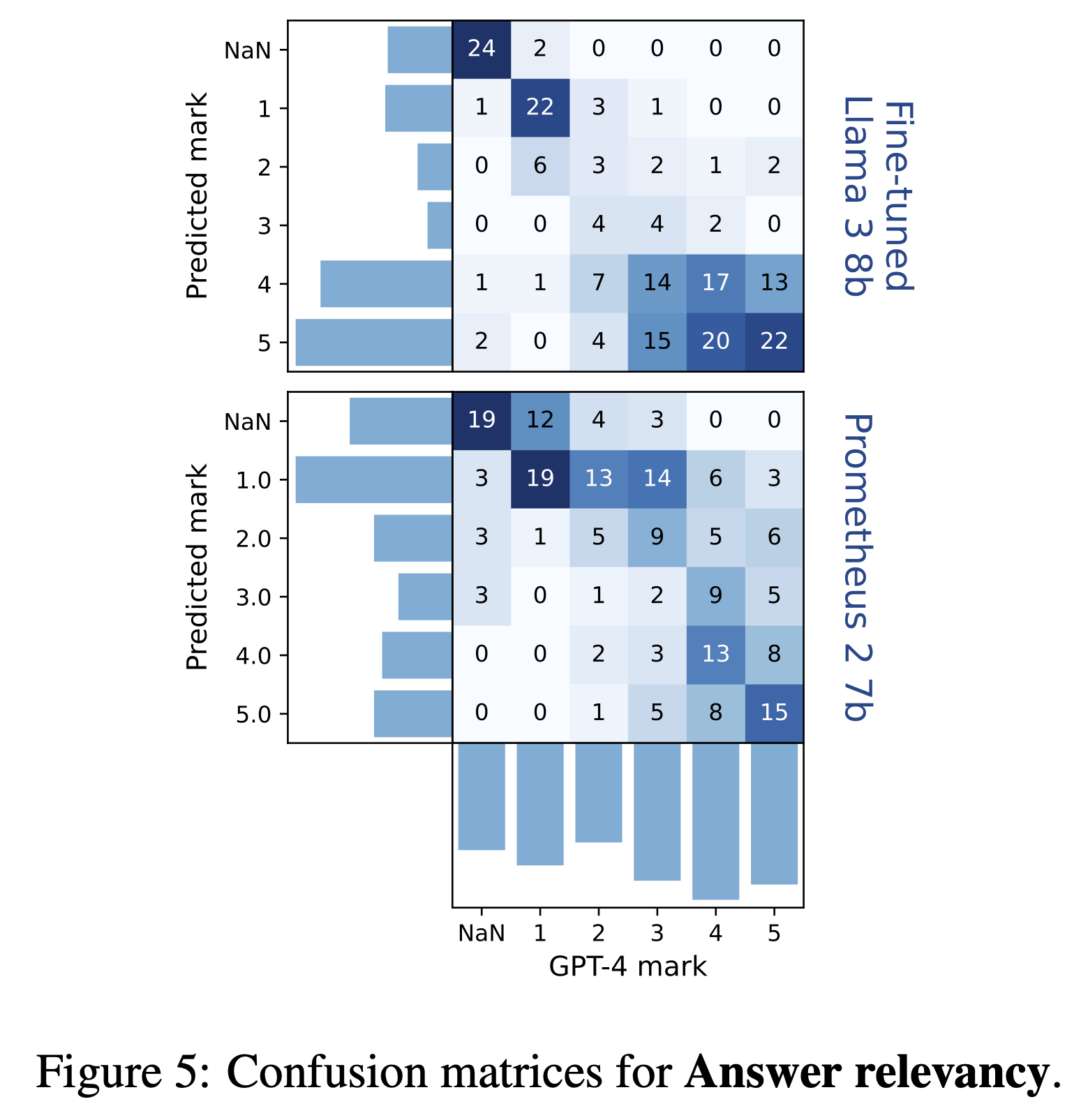

-

|

| 67 |

-

|

| 68 |

-

|

| 69 |

-

|

| 70 |

-

|

| 71 |

<i class="fas fa-file-pdf"></i>

|

| 72 |

-

|

| 73 |

-

|

| 74 |

-

|

| 75 |

-

|

| 76 |

-

|

| 77 |

-

|

| 78 |

-

|

| 79 |

-

|

| 80 |

<i class="ai ai-arxiv"></i>

|

| 81 |

-

|

| 82 |

-

|

| 83 |

-

|

| 84 |

-

|

| 85 |

-

|

| 86 |

-

|

| 87 |

-

|

| 88 |

-

|

| 89 |

-

|

| 90 |

-

<i class="fab fa-youtube"></i>

|

| 91 |

-

</span>

|

| 92 |

-

<span>Video</span>

|

| 93 |

-

</a>

|

| 94 |

-

</span>

|

| 95 |

-

<!-- Code Link. -->

|

| 96 |

-

<span class="link-block">

|

| 97 |

-

<a href="https://github.com/google/nerfies" target="_blank"

|

| 98 |

-

class="external-link button is-normal is-rounded is-dark">

|

| 99 |

-

<span class="icon">

|

| 100 |

<i class="fab fa-github"></i>

|

| 101 |

-

|

| 102 |

-

|

| 103 |

</a>

|

| 104 |

-

|

| 105 |

-

|

| 106 |

-

|

| 107 |

-

|

| 108 |

-

|

| 109 |

-

|

| 110 |

-

<i class="

|

| 111 |

-

|

| 112 |

-

|

| 113 |

</a>

|

| 114 |

-

|

| 115 |

|

|

|

|

| 116 |

</div>

|

| 117 |

</div>

|

| 118 |

</div>

|

| 119 |

</div>

|

| 120 |

-

</

|

| 121 |

-

</section>

|

| 122 |

-

|

| 123 |

-

<section class="hero teaser">

|

| 124 |

-

<div class="container is-max-desktop">

|

| 125 |

-

<div class="hero-body">

|

| 126 |

-

<video id="teaser" autoplay muted loop playsinline height="100%">

|

| 127 |

-

<source src="./static/videos/teaser.mp4"

|

| 128 |

-

type="video/mp4">

|

| 129 |

-

</video>

|

| 130 |

-

<h2 class="subtitle has-text-centered">

|

| 131 |

-

<span class="dnerf">Nerfies</span> turns selfie videos from your phone into

|

| 132 |

-

free-viewpoint

|

| 133 |

-

portraits.

|

| 134 |

-

</h2>

|

| 135 |

-

</div>

|

| 136 |

-

</div>

|

| 137 |

-

</section>

|

| 138 |

|

| 139 |

|

| 140 |

-

|

| 141 |

-

|

| 142 |

-

|

| 143 |

-

|

| 144 |

-

|

| 145 |

-

|

| 146 |

-

|

| 147 |

-

type="video/mp4">

|

| 148 |

-

</video>

|

| 149 |

-

</div>

|

| 150 |

-

<div class="item item-chair-tp">

|

| 151 |

-

<video poster="" id="chair-tp" autoplay controls muted loop playsinline height="100%">

|

| 152 |

-

<source src="./static/videos/chair-tp.mp4"

|

| 153 |

-

type="video/mp4">

|

| 154 |

-

</video>

|

| 155 |

-

</div>

|

| 156 |

-

<div class="item item-shiba">

|

| 157 |

-

<video poster="" id="shiba" autoplay controls muted loop playsinline height="100%">

|

| 158 |

-

<source src="./static/videos/shiba.mp4"

|

| 159 |

-

type="video/mp4">

|

| 160 |

-

</video>

|

| 161 |

-

</div>

|

| 162 |

-

<div class="item item-fullbody">

|

| 163 |

-

<video poster="" id="fullbody" autoplay controls muted loop playsinline height="100%">

|

| 164 |

-

<source src="./static/videos/fullbody.mp4"

|

| 165 |

-

type="video/mp4">

|

| 166 |

-

</video>

|

| 167 |

-

</div>

|

| 168 |

-

<div class="item item-blueshirt">

|

| 169 |

-

<video poster="" id="blueshirt" autoplay controls muted loop playsinline height="100%">

|

| 170 |

-

<source src="./static/videos/blueshirt.mp4"

|

| 171 |

-

type="video/mp4">

|

| 172 |

-

</video>

|

| 173 |

-

</div>

|

| 174 |

-

<div class="item item-mask">

|

| 175 |

-

<video poster="" id="mask" autoplay controls muted loop playsinline height="100%">

|

| 176 |

-

<source src="./static/videos/mask.mp4"

|

| 177 |

-

type="video/mp4">

|

| 178 |

-

</video>

|

| 179 |

-

</div>

|

| 180 |

-

<div class="item item-coffee">

|

| 181 |

-

<video poster="" id="coffee" autoplay controls muted loop playsinline height="100%">

|

| 182 |

-

<source src="./static/videos/coffee.mp4"

|

| 183 |

-

type="video/mp4">

|

| 184 |

-

</video>

|

| 185 |

-

</div>

|

| 186 |

-

<div class="item item-toby">

|

| 187 |

-

<video poster="" id="toby" autoplay controls muted loop playsinline height="100%">

|

| 188 |

-

<source src="./static/videos/toby2.mp4"

|

| 189 |

-

type="video/mp4">

|

| 190 |

-

</video>

|

| 191 |

</div>

|

| 192 |

</div>

|

| 193 |

-

|

| 194 |

-

</div>

|

| 195 |

-

</section>

|

| 196 |

-

|

| 197 |

|

| 198 |

-

<section class="section">

|

| 199 |

<div class="container is-max-desktop">

|

| 200 |

-

<!-- Abstract. -->

|

| 201 |

<div class="columns is-centered has-text-centered">

|

| 202 |

<div class="column is-four-fifths">

|

| 203 |

-

<h2 class="title is-3">Abstract</h2>

|

| 204 |

<div class="content has-text-justified">

|

|

|

|

| 205 |

<p>

|

| 206 |

-

|

| 207 |

-

|

| 208 |

</p>

|

| 209 |

-

<p>

|

| 210 |

-

|

| 211 |

-

|

| 212 |

-

|

| 213 |

-

canonical 5D NeRF.

|

| 214 |

-

We observe that these NeRF-like deformation fields are prone to local minima, and

|

| 215 |

-

propose a coarse-to-fine optimization method for coordinate-based models that allows for

|

| 216 |

-

more robust optimization.

|

| 217 |

-

By adapting principles from geometry processing and physical simulation to NeRF-like

|

| 218 |

-

models, we propose an elastic regularization of the deformation field that further

|

| 219 |

-

improves robustness.

|

| 220 |

</p>

|

| 221 |

<p>

|

| 222 |

-

|

| 223 |

-

|

| 224 |

-

|

| 225 |

-

|

| 226 |

-

|

| 227 |

-

rig with two mobile phones that take time-synchronized photos, yielding train/validation

|

| 228 |

-

images of the same pose at different viewpoints. We show that our method faithfully

|

| 229 |

-

reconstructs non-rigidly deforming scenes and reproduces unseen views with high

|

| 230 |

-

fidelity.

|

| 231 |

</p>

|

| 232 |

-

</div>

|

| 233 |

-

</div>

|

| 234 |

-

</div>

|

| 235 |

-

<!--/ Abstract. -->

|

| 236 |

-

|

| 237 |

-

<!-- Paper video. -->

|

| 238 |

-

<div class="columns is-centered has-text-centered">

|

| 239 |

-

<div class="column is-four-fifths">

|

| 240 |

-

<h2 class="title is-3">Video</h2>

|

| 241 |

-

<div class="publication-video">

|

| 242 |

-

<iframe src="https://www.youtube.com/embed/MrKrnHhk8IA?rel=0&showinfo=0"

|

| 243 |

-

frameborder="0" allow="autoplay; encrypted-media" allowfullscreen></iframe>

|

| 244 |

-

</div>

|

| 245 |

-

</div>

|

| 246 |

-

</div>

|

| 247 |

-

<!--/ Paper video. -->

|

| 248 |

-

</div>

|

| 249 |

-

</section>

|

| 250 |

-

|

| 251 |

-

|

| 252 |

-

<section class="section">

|

| 253 |

-

<div class="container is-max-desktop">

|

| 254 |

-

|

| 255 |

-

<div class="columns is-centered">

|

| 256 |

-

|

| 257 |

-

<!-- Visual Effects. -->

|

| 258 |

-

<div class="column">

|

| 259 |

-

<div class="content">

|

| 260 |

-

<h2 class="title is-3">Visual Effects</h2>

|

| 261 |

<p>

|

| 262 |

-

|

| 263 |

-

|

|

|

|

| 264 |

</p>

|

| 265 |

-

<video id="dollyzoom" autoplay controls muted loop playsinline height="100%">

|

| 266 |

-

<source src="./static/videos/dollyzoom-stacked.mp4"

|

| 267 |

-

type="video/mp4">

|

| 268 |

-

</video>

|

| 269 |

-

</div>

|

| 270 |

-

</div>

|

| 271 |

-

<!--/ Visual Effects. -->

|

| 272 |

-

|

| 273 |

-

<!-- Matting. -->

|

| 274 |

-

<div class="column">

|

| 275 |

-

<h2 class="title is-3">Matting</h2>

|

| 276 |

-

<div class="columns is-centered">

|

| 277 |

-

<div class="column content">

|

| 278 |

-

<p>

|

| 279 |

-

As a byproduct of our method, we can also solve the matting problem by ignoring

|

| 280 |

-

samples that fall outside of a bounding box during rendering.

|

| 281 |

-

</p>

|

| 282 |

-

<video id="matting-video" controls playsinline height="100%">

|

| 283 |

-

<source src="./static/videos/matting.mp4"

|

| 284 |

-

type="video/mp4">

|

| 285 |

-

</video>

|

| 286 |

-

</div>

|

| 287 |

-

|

| 288 |

</div>

|

| 289 |

</div>

|

| 290 |

</div>

|

| 291 |

-

|

| 292 |

-

|

| 293 |

-

|

| 294 |

-

|

| 295 |

-

|

| 296 |

-

|

| 297 |

-

|

| 298 |

-

|

| 299 |

-

|

| 300 |

-

|

| 301 |

-

|

| 302 |

-

|

| 303 |

-

|

| 304 |

-

|

| 305 |

-

|

| 306 |

-

|

| 307 |

-

|

| 308 |

-

|

| 309 |

-

|

| 310 |

-

|

| 311 |

-

|

| 312 |

-

|

| 313 |

-

|

| 314 |

-

|

| 315 |

-

|

| 316 |

-

|

| 317 |

</div>

|

| 318 |

-

<input class="slider is-fullwidth is-large is-info"

|

| 319 |

-

id="interpolation-slider"

|

| 320 |

-

step="1" min="0" max="100" value="0" type="range">

|

| 321 |

-

</div>

|

| 322 |

-

<div class="column is-3 has-text-centered">

|

| 323 |

-

<img src="./static/images/interpolate_end.jpg"

|

| 324 |

-

class="interpolation-image"

|

| 325 |

-

alt="Interpolation end reference image."/>

|

| 326 |

-

<p class="is-bold">End Frame</p>

|

| 327 |

</div>

|

| 328 |

</div>

|

| 329 |

-

|

| 330 |

-

|

| 331 |

-

|

| 332 |

-

|

| 333 |

-

<

|

| 334 |

-

|

| 335 |

-

|

| 336 |

-

|

| 337 |

-

|

| 338 |

-

|

| 339 |

</div>

|

| 340 |

-

|

| 341 |

-

|

| 342 |

-

|

| 343 |

-

|

| 344 |

-

|

| 345 |

-

|

| 346 |

-

|

| 347 |

-

|

| 348 |

-

|

| 349 |

-

|

| 350 |

</div>

|

| 351 |

-

|

| 352 |

-

|

| 353 |

</div>

|

| 354 |

-

</

|

| 355 |

-

<!--/ Animation. -->

|

| 356 |

|

| 357 |

-

|

| 358 |

-

|

| 359 |

-

|

| 360 |

-

|

| 361 |

-

|

| 362 |

-

|

| 363 |

-

|

| 364 |

-

|

| 365 |

-

There's a lot of excellent work that was introduced around the same time as ours.

|

| 366 |

-

</p>

|

| 367 |

-

<p>

|

| 368 |

-

<a href="https://arxiv.org/abs/2104.09125" target="_blank">Progressive Encoding for Neural Optimization</a> introduces an idea similar to our windowed position encoding for coarse-to-fine optimization.

|

| 369 |

-

</p>

|

| 370 |

-

<p>

|

| 371 |

-

<a href="https://www.albertpumarola.com/research/D-NeRF/index.html" target="_blank">D-NeRF</a> and <a href="https://gvv.mpi-inf.mpg.de/projects/nonrigid_nerf/" target="_blank">NR-NeRF</a>

|

| 372 |

-

both use deformation fields to model non-rigid scenes.

|

| 373 |

-

</p>

|

| 374 |

-

<p>

|

| 375 |

-

Some works model videos with a NeRF by directly modulating the density, such as <a href="https://video-nerf.github.io/" target="_blank">Video-NeRF</a>, <a href="https://www.cs.cornell.edu/~zl548/NSFF/" target="_blank">NSFF</a>, and <a href="https://neural-3d-video.github.io/" target="_blank">DyNeRF</a>

|

| 376 |

-

</p>

|

| 377 |

-

<p>

|

| 378 |

-

There are probably many more by the time you are reading this. Check out <a href="https://dellaert.github.io/NeRF/" target="_blank">Frank Dellaert's survey on recent NeRF papers</a>, and <a href="https://github.com/yenchenlin/awesome-NeRF" target="_blank">Yen-Chen Lin's curated list of NeRF papers</a>.

|

| 379 |

-

</p>

|

| 380 |

</div>

|

| 381 |

</div>

|

| 382 |

-

|

| 383 |

-

<!--/ Concurrent Work. -->

|

| 384 |

|

| 385 |

-

</div>

|

| 386 |

-

</section>

|

| 387 |

|

| 388 |

-

|

| 389 |

-

|

| 390 |

-

<div class="container is-max-desktop content">

|

| 391 |

-

<h2 class="title">BibTeX</h2>

|

| 392 |

-

<pre><code>@article{park2021nerfies,

|

| 393 |

-

author = {Park, Keunhong and Sinha, Utkarsh and Barron, Jonathan T. and Bouaziz, Sofien and Goldman, Dan B and Seitz, Steven M. and Martin-Brualla, Ricardo},

|

| 394 |

-

title = {Nerfies: Deformable Neural Radiance Fields},

|

| 395 |

-

journal = {ICCV},

|

| 396 |

-

year = {2021},

|

| 397 |

-

}</code></pre>

|

| 398 |

-

</div>

|

| 399 |

-

</section>

|

| 400 |

-

|

| 401 |

-

|

| 402 |

-

<footer class="footer">

|

| 403 |

-

<div class="container">

|

| 404 |

-

<div class="content has-text-centered">

|

| 405 |

-

<a class="icon-link" target="_blank"

|

| 406 |

-

href="./static/videos/nerfies_paper.pdf">

|

| 407 |

-

<i class="fas fa-file-pdf"></i>

|

| 408 |

-

</a>

|

| 409 |

-

<a class="icon-link" href="https://github.com/keunhong" target="_blank" class="external-link" disabled>

|

| 410 |

-

<i class="fab fa-github"></i>

|

| 411 |

-

</a>

|

| 412 |

-

</div>

|

| 413 |

-

<div class="columns is-centered">

|

| 414 |

-

<div class="column is-8">

|

| 415 |

-

<div class="content">

|

| 416 |

-

<p>

|

| 417 |

-

This website is licensed under a <a rel="license" target="_blank"

|

| 418 |

-

href="http://creativecommons.org/licenses/by-sa/4.0/">Creative

|

| 419 |

-

Commons Attribution-ShareAlike 4.0 International License</a>.

|

| 420 |

-

</p>

|

| 421 |

-

<p>

|

| 422 |

-

This means you are free to borrow the <a target="_blank"

|

| 423 |

-

href="https://github.com/nerfies/nerfies.github.io">source code</a> of this website,

|

| 424 |

-

we just ask that you link back to this page in the footer.

|

| 425 |

-

Please remember to remove the analytics code included in the header of the website which

|

| 426 |

-

you do not want on your website.

|

| 427 |

-

</p>

|

| 428 |

-

</div>

|

| 429 |

-

</div>

|

| 430 |

-

</div>

|

| 431 |

-

</div>

|

| 432 |

-

</footer>

|

| 433 |

|

| 434 |

</body>

|

| 435 |

-

|

|

|

|

|

|

| 1 |

<!DOCTYPE html>

|

| 2 |

<html>

|

| 3 |

+

|

| 4 |

<head>

|

| 5 |

<meta charset="utf-8">

|

| 6 |

<meta name="description"

|

| 7 |

+

content="Deformable Neural Radiance Fields creates free-viewpoint portraits (nerfies) from casually captured videos.">

|

| 8 |

<meta name="keywords" content="Nerfies, D-NeRF, NeRF">

|

| 9 |

<meta name="viewport" content="width=device-width, initial-scale=1">

|

| 10 |

+

<title>GroUSE: A Benchmark to Evaluate Evaluators in Grounded Question

|

| 11 |

+

Answering</title>

|

| 12 |

|

| 13 |

+

<link href="https://fonts.googleapis.com/css?family=Google+Sans|Noto+Sans|Castoro" rel="stylesheet">

|

|

|

|

| 14 |

|

| 15 |

<link rel="stylesheet" href="./static/css/bulma.min.css">

|

| 16 |

+

<!-- <link rel="stylesheet" href="./static/css/bulma-carousel.min.css"> -->

|

| 17 |

+

<!-- <link rel="stylesheet" href="./static/css/bulma-slider.min.css"> -->

|

| 18 |

<link rel="stylesheet" href="./static/css/fontawesome.all.min.css">

|

| 19 |

+

<link rel="stylesheet" href="https://cdn.jsdelivr.net/gh/jpswalsh/academicons@1/css/academicons.min.css">

|

|

|

|

| 20 |

<link rel="stylesheet" href="./static/css/index.css">

|

| 21 |

<link rel="icon" href="./static/images/favicon.svg">

|

| 22 |

|

| 23 |

+

|

| 24 |

+

|

| 25 |

<script src="https://ajax.googleapis.com/ajax/libs/jquery/3.5.1/jquery.min.js"></script>

|

| 26 |

<script defer src="./static/js/fontawesome.all.min.js"></script>

|

| 27 |

<script src="./static/js/bulma-carousel.min.js"></script>

|

| 28 |

<script src="./static/js/bulma-slider.min.js"></script>

|

| 29 |

<script src="./static/js/index.js"></script>

|

| 30 |

</head>

|

| 31 |

+

|

| 32 |

<body>

|

| 33 |

|

| 34 |

+

<section class="hero">

|

| 35 |

+

<div class="hero-body">

|

| 36 |

+

<div class="container is-max-desktop">

|

| 37 |

+

<div class="columns is-centered">

|

| 38 |

+

<div class="column has-text-centered">

|

| 39 |

+

<h1 class="title is-1 publication-title">GroUSE: A Benchmark to Evaluate Evaluators in Grounded Question

|

| 40 |

+

Answering</h1>

|

| 41 |

+

<div class="is-size-5 publication-authors">

|

| 42 |

+

<span class="author-block">

|

| 43 |

+

<a href="https://www.linkedin.com/in/sacha-muller/" target="_blank">Sacha Muller</a>,</span>

|

| 44 |

+

<span class="author-block">

|

| 45 |

+

<a href="https://www.linkedin.com/in/antonio-loison/" target="_blank">António

|

| 46 |

+

Loison</a>,</span>

|

| 47 |

+

<span class="author-block">

|

| 48 |

+

<a href="https://www.linkedin.com/in/bilel-omrani/" target="_blank">Bilel Omrani</a>,

|

| 49 |

+

</span>

|

| 50 |

+

<span class="author-block">

|

| 51 |

+

<a href="https://www.linkedin.com/in/gautier-viaud/" target="_blank">Gautier Viaud</a>,

|

| 52 |

+

</span>

|

| 53 |

+

</div>

|

| 54 |

|

| 55 |

+

<div class="is-size-5 publication-authors">

|

| 56 |

+

<span class="author-block">Illuin Technology</span>

|

| 57 |

+

</div>

|

|

|

|

| 58 |

|

| 59 |

+

<div class="column has-text-centered">

|

| 60 |

+

<div class="publication-links">

|

| 61 |

+

|

| 62 |

+

<span class="link-block">

|

| 63 |

+

<a href="https://arxiv.org/pdf/2409.06595" target="_blank"

|

| 64 |

+

class="external-link button is-normal is-rounded is-dark">

|

| 65 |

+

<span class="icon">

|

| 66 |

<i class="fas fa-file-pdf"></i>

|

| 67 |

+

</span>

|

| 68 |

+

<span>Paper</span>

|

| 69 |

+

</a>

|

| 70 |

+

</span>

|

| 71 |

+

<span class="link-block">

|

| 72 |

+

<a href="https://arxiv.org/abs/2409.06595" target="_blank"

|

| 73 |

+

class="external-link button is-normal is-rounded is-dark">

|

| 74 |

+

<span class="icon">

|

| 75 |

<i class="ai ai-arxiv"></i>

|

| 76 |

+

</span>

|

| 77 |

+

<span>arXiv</span>

|

| 78 |

+

</a>

|

| 79 |

+

</span>

|

| 80 |

+

<!-- Code Link. -->

|

| 81 |

+

<span class="link-block">

|

| 82 |

+

<a href="https://github.com/illuin-tech/grouse" target="_blank"

|

| 83 |

+

class="external-link button is-normal is-rounded is-dark">

|

| 84 |

+

<span class="icon">

|

| 85 |

<i class="fab fa-github"></i>

|

| 86 |

+

</span>

|

| 87 |

+

<span>Code</span>

|

| 88 |

</a>

|

| 89 |

+

</span>

|

| 90 |

+

<!-- Dataset Link. -->

|

| 91 |

+

<span class="link-block">

|

| 92 |

+

<a href="https://github.com/google/nerfies/releases/tag/0.1" target="_blank"

|

| 93 |

+

class="external-link button is-normal is-rounded is-dark">

|

| 94 |

+

<span class="icon">

|

| 95 |

+

<i class="fas fa-database"></i>

|

| 96 |

+

</span>

|

| 97 |

+

<span>Dataset</span>

|

| 98 |

</a>

|

| 99 |

+

</span>

|

| 100 |

+

<!-- Model Link. -->

|

| 101 |

+

<span class="link-block">

|

| 102 |

+

<a href="https://huggingface.co/illuin/llama-3-grouse" target="_blank"

|

| 103 |

+

class="external-link button is-normal is-rounded is-dark">

|

| 104 |

+

<span class="icon">

|

| 105 |

+

<i class="fas fa-robot"></i>

|

| 106 |

+

</span>

|

| 107 |

+

<span>Model</span>

|

| 108 |

+

</a>

|

| 109 |

+

</div>

|

| 110 |

|

| 111 |

+

</div>

|

| 112 |

</div>

|

| 113 |

</div>

|

| 114 |

</div>

|

| 115 |

</div>

|

| 116 |

+

</section>

|

| 117 |

|

| 118 |

+

<section class="section">

|

| 119 |

+

<div class="container is-max-desktop">

|

| 120 |

+

<!-- Abstract. -->

|

| 121 |

+

<div class="columns is-centered has-text-centered">

|

| 122 |

+

<div class="column is-four-fifths">

|

| 123 |

+

<div class="content has-text-justified">

|

| 124 |

+

<h2 class="title is-3">Context</h2>

|

| 125 |

+

<p>

|

| 126 |

+

Grounded Question Answering (QA) is usually the last step of a RAG pipeline: given a question and a set of

|

| 127 |

+

documents retrieved from the corpus, an LLM must generate an answer. We expect the LLM to cite which

|

| 128 |

+

document each piece of information is coming from, as depicted below. When no precise answer is in the

|

| 129 |

+

documents, the LLM should indicate it in its answer. In that case, if some related information is

|

| 130 |

+

available in the documents, the LLM can add it to the answer to show the corpus is not completely

|

| 131 |

+

off-topic with respect to the question.

|

| 132 |

+

</p>

|

| 133 |

+

<p align="center">

|

| 134 |

+

<img src="./static/images/grounded_qa_cases.png"

|

| 135 |

+

alt="Schema showing an example depending on whether the references contain a precise answer, only related information or no information. For each case there is an example of references and ground truth answer. The question is common to the three cases: What is the relationship between Pluto and Neptune? Case 1: the references contain a precise answer. Reference 1: More than 200 objects in 2:3 resonance are known (meaning they complete exactly 2 revolutions around the Sun when Neptune completes 3), among which are Pluto and its moons. Reference 2: Pluto’s axis of rotation is tilted at 57.5 degrees relative to its orbital plane, which is quite high and unusual in the Solar System. Reference 3: On the left: view of a cardiac cycle, of a systolic-diastolic oscillating flow, characteristic of circulatory arrest. Ground truth answer: The 3:2 orbital resonance relationship between Pluto and Neptune means that for every 3 revolutions of Neptune around the Sun, Pluto completes 2 [reference 1 citation]. Case 2: References only contain related information. Reference 1, which contained the precise answer, was removed; the other two are left. Ground truth answer: No document seems to precisely answer your question. However, the documents indicate that: Pluto’s axis of rotation is tilted at 57.5 degrees [reference 2 citation]. Case 3: References contain neither an answer nor related information. References 1 and 2 were removed; only reference 3, which is off-topic, is left. Ground truth answer: No document seems to precisely answer your question."

|

| 136 |

+

style="width:800px;" />

|

| 137 |

+

</p>

|

| 138 |

+

<p>

|

| 139 |

+

This task is difficult to evaluate due to the wide variety of errors an answer can contain, such as

|

| 140 |

+

superfluous information, missing relevant details from references, incorrect claims that no document

|

| 141 |

+

answers the question, citation mistakes, and so on. Some attempts to define metrics and automate the

|

| 142 |

+

evaluation of this task have been made (RAGAS, DeepEval); however, these approaches didn't cover all the

|

| 143 |

+

failure modes we were interested in.

|

| 144 |

+

</p>

|

| 145 |

+

<p>

|

| 146 |

+

Most of these approaches rely heavily on the <em>LLM-as-a-Judge</em> method. While this technique can be

|

| 147 |

+

powerful, it is crucial to first assess the ability of an LLM to accurately evaluate this Grounded QA task

|

| 148 |

+

with respect to our metrics.

|

| 149 |

+

</p>

|

| 150 |

+

<p>

|

| 151 |

+

It is tempting to consider using an LLM to verify the evaluations generated by an evaluator LLM (which was

|

| 152 |

+

assessing an LLM's answers). However, this could quickly lead down a rabbit hole of endless AI-on-AI

|

| 153 |

+

evaluations. This is why we developed <strong>GroUSE: a unit testing suite designed to evaluate the

|

| 154 |

+

evaluators</strong>

|

| 155 |

+

<em>(pronounced "graouse")</em>.

|

| 156 |

|

| 157 |

+

</p>

|

| 158 |

+

<p align="center">

|

| 159 |

+

<img src="./static/images/meme.jpeg"

|

| 160 |

+

alt="This image is a two-panel meme: on the left panel, a man labeled 'Grounded QA LLM,' stands confidently. Behind him, a menacing muscular figure labeled 'Evaluator LLM' is holding a bat. On the right panel: the same 'Grounded QA LLM' and 'Evaluator LLM' appear again, but now they are dwarfed by an even larger, more muscular figure labeled 'Grouse'."

|

| 161 |

+

style="width:450px;" />

|

| 162 |

+

</p>

|

| 163 |

+

</div>

|

| 164 |

</div>

|

| 165 |

</div>

|

| 166 |

+

</section>

|

|

|

|

|

|

|

|

|

|

| 167 |

|

| 168 |

+

<section class="section">

|

| 169 |

+

<div class="container is-max-desktop">

|

| 170 |

+

<div class="columns is-centered has-text-centered">

|

| 171 |

+

<div class="column is-four-fifths">

|

| 172 |

+

<div class="content has-text-justified">

|

| 173 |

+

<h2 class="title is-3">The GroUSE dataset</h2>

|

| 174 |

+

<p>

|

| 175 |

+

GroUSE (Grounded QA Unitary Scoring of Evaluators) is a dataset of unit tests used to check whether a

|

| 176 |

+

Grounded QA evaluator is giving the scores we expect. Each test contains:

|

| 177 |

+

</p>

|

| 178 |

+

<ul>

|

| 179 |

+

<li>a Grounded QA sample (consisting of a question and references),</li>

|

| 180 |

+

<li>a ground truth answer to the question,</li>

|

| 181 |

+

<li>an answer to evaluate (which may or may not contain an error),</li>

|

| 182 |

+

<li>a list of expected grades.</li>

|

| 183 |

+

</ul>

|

| 184 |

+

|

| 185 |

+

<p align="center">

|

| 186 |

+

<img src="./static/images/one_sample.png"

|

| 187 |

+

alt="This image is titled 'A simplified sample of GroUSE' and showcases an example of how to evaluate the accuracy and relevance of an answer. The structure is divided into five main sections: 1) Question: 'What is the relationship between Pluto and Neptune?'. 2) A list of two references. Reference 1: 'More than 200 objects in 2:3 resonance are known (meaning they complete exactly 2 revolutions around the Sun when Neptune completes 3), among which are Pluto and its moons.' Reference 2: 'Pluto rotates on its axis every 6.387 days, which is 6 days, 9 hours, and 17 minutes. Its axis of rotation is tilted at 57.5 degrees relative to its orbital plane, which is quite high and unusual in the Solar System.' 3) Ground Truth Answer: 'The 3:2 orbital resonance relationship between Pluto and Neptune means that for every 3 revolutions of Neptune around the Sun, Pluto completes 2 [reference 1 citation].' 4) Answer to Evaluate: 'The 3:2 orbital resonance relationship between Pluto and Neptune means that for every 3 revolutions of Neptune around the Sun, Pluto completes 2 [reference 1 citation]. Pluto’s axis of rotation is tilted at 57.5 degrees [reference 2 citation].' 5) Expected Notes: A rating for each evaluation criterion: Answer Relevancy: less than 4 (indicated in orange). Completeness: 5 (green). Useful: undefined (grey). Faithfulness: 1 (green). Positive Acceptance: undefined (grey). Negative Rejection: undefined (grey)."

|

| 188 |

+

style="width:600px;" />

|

| 189 |

+

</p>
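As a rough illustration of the four components listed above, a single test could be modelled as follows. This is a minimal sketch: the class name GroundedQATest, its field names and the passes helper are hypothetical and are not taken from the grouse package. Expected grades are represented as predicates so that conditions such as "less than 4" or "undefined" can be expressed, and the expectations mirror the simplified Pluto/Neptune sample.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

# A grade is an int (Likert 1-5 or binary 0/1), or None when the metric is undefined.
Grade = Optional[int]


@dataclass
class GroundedQATest:
    """Hypothetical container for one GroUSE-style test (all names are assumptions)."""
    question: str
    references: List[str]
    ground_truth_answer: str
    answer_to_evaluate: str
    # One predicate per metric: True if the evaluator's grade is acceptable.
    expected_grades: Dict[str, Callable[[Grade], bool]]


def passes(test: GroundedQATest, evaluator_grades: Dict[str, Grade]) -> Dict[str, bool]:
    """Return, for each metric, whether the evaluator's grade meets the expectation."""
    return {
        metric: check(evaluator_grades.get(metric))
        for metric, check in test.expected_grades.items()
    }


# Texts are placeholders; only the expected grades follow the simplified sample.
sample = GroundedQATest(
    question="What is the relationship between Pluto and Neptune?",
    references=["<reference 1>", "<reference 2>"],
    ground_truth_answer="<ground truth answer>",
    answer_to_evaluate="<answer with an irrelevant extra fact>",
    expected_grades={
        "answer_relevancy": lambda g: g is not None and g < 4,
        "completeness": lambda g: g == 5,
        "usefulness": lambda g: g is None,
        "faithfulness": lambda g: g == 1,
        "positive_acceptance": lambda g: g is None,
        "negative_rejection": lambda g: g is None,
    },
)

# Grades a hypothetical evaluator might return for this sample.
grades = {
    "answer_relevancy": 3,
    "completeness": 5,
    "usefulness": None,
    "faithfulness": 1,
    "positive_acceptance": None,
    "negative_rejection": None,
}
print(passes(sample, grades))
```

Using predicates rather than fixed values is one possible design choice; it keeps range expectations and "undefined" expectations in the same structure.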

|

| 190 |

+

<p>

|

| 191 |

+

In our framework, judge LLMs evaluate the quality of a grounded QA answer according to 6 metrics intended

|

| 192 |

+

to capture all the failure modes of the task:

|

| 193 |

+

</p>

|

| 194 |

+

<p>

|

| 195 |

+

<ul>

|

| 196 |

+

<li><strong>Answer relevancy</strong> assesses the relevance of the information provided in the answer

|

| 197 |

+

regarding the question, using a Likert scale (1 to 5).</li>

|

| 198 |

+

<li><strong>Completeness</strong> also uses a Likert scale to evaluate whether all relevant information

|

| 199 |

+

from

|

| 200 |

+

the documents is present in the answer.</li>

|

| 201 |

+

<li><strong>Faithfulness</strong> is a binary score that checks if all facts in the answer are accurate

|

| 202 |

+

and

|

| 203 |

+

correctly attributed to the corresponding document.</li>

|

| 204 |

+

<li><strong>Usefulness</strong> is only evaluated when the answer states there is no precise answer in the

|

| 205 |

+

references and provides related information. It is a binary score that determines if the additional

|

| 206 |

+

information is indeed useful and relevant to the question.</li>

|

| 207 |

+

<li><strong>Positive Acceptance</strong> and <strong>Negative Rejection</strong> are binary scores

|

| 208 |

+

indicating a true positive and a true negative respectively in identifying whether the question is

|

| 209 |

+

answerable.</li>

|

| 210 |

+

</ul>

|

| 211 |

+

</p>

|

| 212 |

+

<p>

|

| 213 |

+

The GroUSE dataset comprises <strong>144 samples organized into 9 sets</strong>. Each set addresses the

|

| 214 |

+

same question

|

| 215 |

+

and draws from largely similar references, with slight variations in the answers. These small

|

| 216 |

+

modifications are tailored to fit a predefined typology of 16 test types, which are designed to assess

|

| 217 |

+

whether an evaluator correctly penalizes all failure modes and rewards accurate answers across a diverse

|

| 218 |

+

range of scenarios. The image below displays four samples along with their corresponding test types. For

|

| 219 |

+

instance, test type 14 assesses whether the faithfulness score is set to 0 when there is a citation

|

| 220 |

+

mistake.

|

| 221 |

+

</p>

|

| 222 |

+

<p align="center">

|

| 223 |

+

<img src="./static/images/schema_unit_test_blog.png"

|

| 224 |

+

alt="Diagram showing four types of tests. Type 1: A perfect answer should get the highest grades. Type 2: Related information is not mandatory to get the highest grades. Type 9: Answering in an adversarial situation should result in low negative rejection. Type 14: An error in the citations leads to minimal faithfulness."

|

| 225 |

+

style="width:1000px;" />

|

| 226 |

+

</p>

|

| 227 |

+

<p>

|

| 228 |

+

GroUSE includes an additional set of tests meant to help users engineer their prompts and try to obtain

|

| 229 |

+

the best evaluator possible before checking its performance on the 9 other sets. Using this "train set",

|

| 230 |

+

we iterated on the prompts, making our best effort to craft the best prompts possible for each of the

|

| 231 |

+

tested models before measuring how many tests they passed. The "train set" is kept small to imitate the

|

| 232 |

+

real-world scenario where the user has a limited number of samples to optimize their prompts.

|

| 233 |

+

</p>

|

| 234 |

+

</div>

|

| 235 |

+

</div>

|

| 236 |

+

</div>

|

| 237 |

+

</section>

|

| 238 |

<div class="container is-max-desktop">

|

| 239 |

<div class="columns is-centered has-text-centered">

|

| 240 |

<div class="column is-four-fifths">

|

| 241 |

<div class="content has-text-justified">

|

| 242 |

+

<h2 class="title is-3">Benchmarking the evaluation abilities of models</h2>

|

| 243 |

<p>

|

| 244 |

+

The structure of the GroUSE dataset allows for presenting a model's results in a matrix format, where each

|

| 245 |

+

row represents the model's performance on a specific test type, and each column corresponds to its

|

| 246 |

+

performance on a particular question. This format reveals, for example, that GPT-4 struggles with test type

|

| 247 |

+

16, which involves an answer containing information that distorts one of the references, leading to a low

|

| 248 |

+

expected faithfulness but good relevancy and good completeness. Moreover, Llama-3 70B struggles the most

|

| 249 |

+

with test type 7, a test in which we include an <em>absurd</em> fact in the references and mention this fact in the

|

| 250 |

+

answer. Despite the fact seeming incorrect, since it's present in the references, high scores are expected.

|

| 251 |

+

Test type 7 checks that the model does not rely on its internal knowledge and refers solely to the

|

| 252 |

+

references to evaluate the metrics.

|

| 253 |

</p>

|

| 254 |

+

<p align="center">

|

| 255 |

+

<img src="./static/images/matrices.png"

|

| 256 |

+

alt="12 matrices arranged in two rows of six. The two rows correspond to GPT-4 and Llama-3 70b, the six columns represent the six metrics: answer relevancy, completeness, usefulness, faithfulness, positive acceptance and negative rejection. Each matrix contains 144 small squares, blue if the test passed, red if the test failed, orange if the output was not parsable. We can see that there are more red squares in the Llama-3 70b row, especially in the completeness column."

|

| 257 |

+

style="width:1000px;" />

|

| 258 |

</p>

|

| 259 |

<p>

|

| 260 |

+

For a more compact view, we can also calculate the percentage of tests each model passes for each metric:

|

| 261 |

+

</p>

|

| 262 |

+

<p align="center">

|

| 263 |

+

<img src="./static/images/result_table.png"

|

| 264 |

+

alt="A table with the agreement rate of each metric, for a list of models." style="width:800px;" />

|

| 265 |

</p>
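The per-metric percentages can be derived from pass/fail outcomes in a few lines. The sketch below assumes results are stored as a mapping from metric name to a list of booleans, one per test; this layout and the function name pass_rates are illustrative assumptions, not the format used by the authors, and the toy outcomes are made up.

```python
from typing import Dict, List


def pass_rates(results: Dict[str, List[bool]]) -> Dict[str, float]:
    """Percentage of passed tests per metric; metrics with no tests are skipped."""
    return {
        metric: 100.0 * sum(outcomes) / len(outcomes)
        for metric, outcomes in results.items()
        if outcomes
    }


# Toy outcomes invented for illustration (not results from the benchmark).
toy_results = {
    "faithfulness": [True, True, False, True],
    "completeness": [True, False, False, True],
}
print(pass_rates(toy_results))  # {'faithfulness': 75.0, 'completeness': 50.0}
```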

|

| 266 |

<p>

|

| 267 |

+

The strongest evaluator models are GPT-4 for closed-weights models, with a pass rate of 95%, and Llama-3 70b

|

| 268 |

+

for open-weights models, with 79%. Human performance on this dataset is 98%. The hardest metric to evaluate is

|

| 269 |

+

completeness, for LLMs and humans alike.

|

| 270 |

</p>

|

| 271 |

</div>

|

| 272 |

</div>

|

| 273 |

</div>

|

| 274 |

+

</section>

|

| 275 |

+

|

| 276 |

+

<section class="section">

|

| 277 |

+

<div class="container is-max-desktop">

|

| 278 |

+

<div class="columns is-centered has-text-centered">

|

| 279 |

+

<div class="column is-four-fifths">

|

| 280 |

+

<div class="content has-text-justified">

|

| 281 |

+

<h2 class="title is-3">Improving an open source model</h2>

|

| 282 |

+

<p>

|

| 283 |

+

To demonstrate that the gap between open-weights and closed-weights models can be narrowed, we finetuned a

|

| 284 |

+

Llama-3 8b model on traces of evaluations by GPT-4. Aiming to develop a model capable of solving the

|

| 285 |

+

task in a single call, we concatenated the metric-specific responses from GPT-4 into a single output and

|

| 286 |

+

followed a similar process for the input, resulting in a dataset of 1200 samples. We finetuned the

|

| 287 |

+

Llama-3 8b on 1k samples of this dataset, and used the rest as a test set. We measured the model's

|

| 288 |

+

progress both on GroUSE and through the correlation between GPT-4's grades and the finetuned

|

| 289 |

+

model's grades on the test set.

|

| 290 |

+

</p>

|

| 291 |

+

<p align="center">

|

| 292 |

+

<img src="./static/images/correlation_table.png"

|

| 293 |

+

alt="A table with the correlation between a list of models and GPT-4's evaluation."

|

| 294 |

+

style="width:700px;" />

|

| 295 |

+

</p>

|

| 296 |

+

|

| 297 |

+

<p>

|

| 298 |

+

Finetuning significantly enhances the evaluation capabilities of Llama-3, as evidenced by the

|

| 299 |

+

substantial improvement in pass rate, from 40% to 83%. Similar progress can

|

| 300 |

+

be seen in the correlation measures; however, it is worth noting that the finetuned model has similar

|

| 301 |

+

correlation levels to the 0-shot Llama-3 8b evaluating one metric per prompt. Although this

|

| 302 |

+

approach demonstrated significant improvements, it would be beneficial to explore the effects of

|

| 303 |

+

finetuning larger models, which could potentially yield even better performance.

|

| 304 |

+

</p>

|

| 305 |

</div>

|

| 306 |

</div>

|

| 307 |

</div>

|

| 308 |

+

</section>

|

| 309 |

+

|

| 310 |

+

<section class="section">

|

| 311 |

+

<div class="container is-max-desktop">

|

| 312 |

+

<div class="columns is-centered has-text-centered">

|

| 313 |

+

<div class="column is-four-fifths">

|

| 314 |

+

<div class="content has-text-justified">

|

| 315 |

+

<h2 class="title is-3">Correlation with GPT-4 versus GroUSE pass rate</h2>

|

| 316 |

+

<p>

|

| 317 |

+

Our results reveal a discrepancy between GroUSE pass rates and correlation with GPT-4's grades. While

|

| 318 |

+

Prometheus 2 7b and finetuned Llama-3 8b show similar correlations with GPT-4 on answer relevancy, their

|

| 319 |

+

GroUSE pass rates differ significantly, with Llama-3 8b outperforming Prometheus 2 7b.

|

| 320 |

+

Confusion matrices reveal that Prometheus 2 has better overall agreement with GPT-4 but struggles with

|

| 321 |

+

extreme cases (1, 5 and NaN cases), while finetuned Llama-3 excels in extreme cases but lacks

|

| 322 |

+

correlation in intermediate ones.

|

| 323 |

+

</p>

|

| 324 |

+

<p align="center">

|

| 325 |

+

<img src="./static/images/confusion_matrixes.png" alt="" style="width:350px;" />

|

| 326 |

+

</p>

|

| 327 |

+

<p>

|

| 328 |

+

This finding suggests that a high correlation with GPT-4's judgments does not necessarily equate to a

|

| 329 |

+

high unit test pass rate. A judge model can share the same relative preferences as GPT-4 (indicated by

|

| 330 |

+

strong rank correlation) but still lack the same calibration on precise reference cases (very good

|

| 331 |

+

answers, subtle mistakes, etc.), resulting in poor performance on judgment unit tests.

|

| 332 |

+

</p>
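The distinction between ranking agreement and calibration can be illustrated with a toy computation. The sketch below is not the authors' evaluation code: the grades are invented, the helper names are hypothetical, and an exact-match rate stands in for GroUSE's expected-grade checks. The judge preserves the ordering of the reference grades but avoids the extreme values 1 and 5.

```python
from typing import List


def average_ranks(values: List[float]) -> List[float]:
    """Rank values from 1 to n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions are 0-based, ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(x: List[float], y: List[float]) -> float:
    """Spearman rank correlation: Pearson correlation of the average ranks.

    Assumes neither input is constant (otherwise the denominator is zero).
    """
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


def exact_match_rate(expected: List[int], predicted: List[int]) -> float:
    """Fraction of samples where the predicted grade equals the expected one."""
    return sum(e == p for e, p in zip(expected, predicted)) / len(expected)


# Invented grades: same ordering as the reference, but compressed towards the middle.
reference = [1, 1, 3, 5, 5]
judge = [2, 2, 3, 4, 4]

print(spearman(reference, judge))          # 1.0
print(exact_match_rate(reference, judge))  # 0.2
```

In this toy case the rank correlation is perfect while only one grade in five matches exactly, which is the kind of gap the paragraph above describes.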

|

| 333 |

+

</div>

|

| 334 |

+

</div>

|

| 335 |

</div>

|

| 336 |

+

</section>

|

| 337 |

+

|

| 338 |

+

<section class="section">

|

| 339 |

+

<div class="container is-max-desktop">

|

| 340 |

+

<div class="columns is-centered has-text-centered">

|

| 341 |

+

<div class="column is-four-fifths">

|

| 342 |

+

<div class="content has-text-justified">

|

| 343 |

+

<h2 class="title is-3">Conclusion</h2>

|

| 344 |

+

<p>

|

| 345 |

+

To conclude briefly:

|

| 346 |

+

</p>

|

| 347 |

+

<ul>

|

| 348 |

+

<li>GroUSE is a dataset for checking whether a model assigns the expected scores across a wide range of

|

| 349 |

+

cases.</li>

|

| 350 |

+

<li>Using the LLM-as-a-Judge approach, GPT-4 was the strongest closed-weights evaluator and Llama-3 70B

|

| 351 |

+

the best open-weights evaluator.</li>

|

| 352 |

+

<li>We demonstrated that a model's evaluation abilities can be improved by finetuning on a stronger model's

|

| 353 |

+

evaluations. </li>

|

| 354 |

+

<li>We showed that correlation with a strong evaluator does not necessarily imply a good score on the

|

| 355 |

+

unit tests. These measures are complementary: correlation with GPT-4 indicates agreement in relative

|

| 356 |

+

preference, while GroUSE pass rate measures precise calibration on practical reference cases.</li>

|

| 357 |

+

</ul>

|

| 358 |

+

<p>

|

| 359 |

+

If you want to evaluate your RAG pipeline with our GPT-4 prompts, or even meta-evaluate your RAG

|

| 360 |

+

evaluator on GroUSE, a Python package is available at

|

| 361 |

+

<a href="https://github.com/illuin-tech/grouse">github.com/illuin-tech/grouse</a>!

|

| 362 |

+

</p>

|

| 363 |

+

</div>

|

| 364 |

+

</div>

|

| 365 |

</div>

|

| 366 |

+

</section>

|

| 367 |

+

|

| 368 |

+

|

| 369 |

+

<section class="section" id="BibTeX">

|

| 370 |

+

<div class="container is-max-desktop content">

|

| 371 |

+

<h2 class="title">BibTeX</h2>

|

| 372 |

+

<pre><code>@misc{muller2024grouse,

|

| 373 |

+

title={GroUSE: A Benchmark to Evaluate Evaluators in Grounded Question Answering},

|

| 374 |

+

author={Sacha Muller and António Loison and Bilel Omrani and Gautier Viaud},

|

| 375 |

+

year={2024},

|

| 376 |

+

eprint={2409.06595},

|

| 377 |

+

archivePrefix={arXiv},

|

| 378 |

+

primaryClass={cs.CL},

|

| 379 |

+

url={https://arxiv.org/abs/2409.06595},

|

| 380 |

+

}</code></pre>

|

| 381 |

</div>

|

| 382 |

+

</section>

|

| 383 |

|

| 384 |

+

<!-- <section class="section"></section>

|

| 385 |

+

<div class="container is-max-desktop">

|

| 386 |

+

<div class="columns is-centered has-text-centered">

|

| 387 |

+

<div class="column is-four-fifths">

|

| 388 |

+

<div class="content has-text-justified">

|

| 389 |

+

<h2 class="title is-3">Acknowledgments</h2>

|

| 390 |

+

|

| 391 |

+

</div>

|

| 392 |

</div>

|

| 393 |

</div>

|

| 394 |

+

</section> -->

|

| 395 |

|

| 396 |

|

| 397 |

+

<footer class="footer">

|

| 398 |

+

</footer>

|

| 399 |

|

| 400 |

</body>

|

| 401 |

+

|

| 402 |

+

</html>

|

static/images/confusion_matrixes.png

ADDED

|

static/images/correlation_table.png

ADDED

|

static/images/favicon.svg

DELETED

static/images/grounded_qa_cases.png

ADDED

|

static/images/interpolate_end.jpg

DELETED

|

Binary file (113 kB)

|

|

|

static/images/interpolate_start.jpg

DELETED

|

Binary file (117 kB)

|

|

|

static/images/matrices.png

ADDED

|

static/images/meme.jpeg

ADDED

|

static/images/{steve.webm → one_sample.png}

RENAMED

|

File without changes

|

static/images/result_table.png

ADDED

|

static/images/schema_unit_test.png

ADDED

|

static/images/schema_unit_test_blog.png

ADDED

|

static/interpolation/stacked/000000.jpg

DELETED

|

Binary file (128 kB)

|

|

|

static/interpolation/stacked/000001.jpg

DELETED

|

Binary file (128 kB)

|

|

|

static/interpolation/stacked/000002.jpg

DELETED

|

Binary file (128 kB)

|

|

|

static/interpolation/stacked/000003.jpg

DELETED

|

Binary file (128 kB)

|

|

|

static/interpolation/stacked/000004.jpg

DELETED

|

Binary file (128 kB)

|

|

|

static/interpolation/stacked/000005.jpg

DELETED

|

Binary file (129 kB)

|

|

|

static/interpolation/stacked/000006.jpg

DELETED

|

Binary file (129 kB)

|

|

|

static/interpolation/stacked/000007.jpg

DELETED

|

Binary file (129 kB)

|

|

|

static/interpolation/stacked/000008.jpg

DELETED

|

Binary file (129 kB)

|

|

|

static/interpolation/stacked/000009.jpg

DELETED

|

Binary file (129 kB)

|

|

|

static/interpolation/stacked/000010.jpg

DELETED

|

Binary file (129 kB)

|

|

|

static/interpolation/stacked/000011.jpg

DELETED

|

Binary file (129 kB)

|

|

|

static/interpolation/stacked/000012.jpg

DELETED

|

Binary file (130 kB)

|

|

|

static/interpolation/stacked/000013.jpg

DELETED

|

Binary file (130 kB)

|

|

|

static/interpolation/stacked/000014.jpg

DELETED

|

Binary file (130 kB)

|

|

|

static/interpolation/stacked/000015.jpg

DELETED

|

Binary file (130 kB)

|

|

|

static/interpolation/stacked/000016.jpg

DELETED

|

Binary file (130 kB)

|

|

|

static/interpolation/stacked/000017.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000018.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000019.jpg

DELETED

|

Binary file (130 kB)

|

|

|

static/interpolation/stacked/000020.jpg

DELETED

|

Binary file (130 kB)

|

|

|

static/interpolation/stacked/000021.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000022.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000023.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000024.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000025.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000026.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000027.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000028.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000029.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000030.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000031.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000032.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000033.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000034.jpg

DELETED

|

Binary file (131 kB)

|

|

|

static/interpolation/stacked/000035.jpg

DELETED

|

Binary file (131 kB)

|

|

|