🚀 Excited to Share Our Latest Work: 3DIS & 3DIS-FLUX for Multi-Instance Layout-to-Image Generation! ❤️❤️❤️

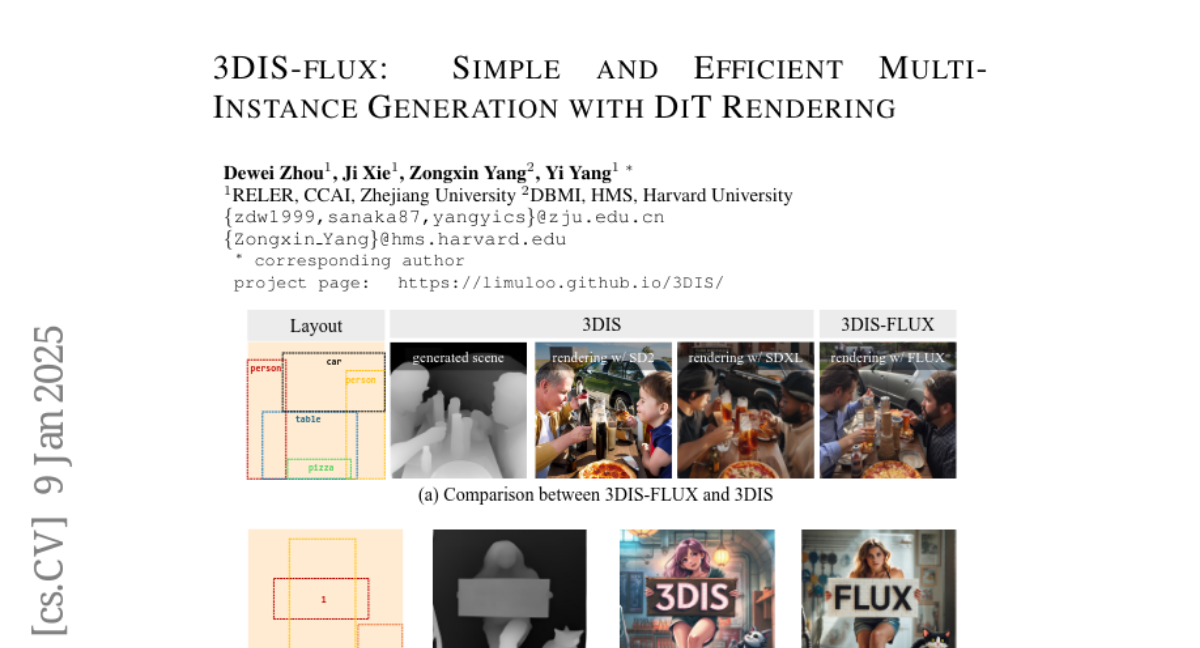

🎨 Daily Paper: 3DIS-FLUX: simple and efficient multi-instance generation with DiT rendering (2501.05131)

🔓 Code is now open source!

🌐 Project Website: https://limuloo.github.io/3DIS/

🏠 GitHub Repository: https://github.com/limuloo/3DIS

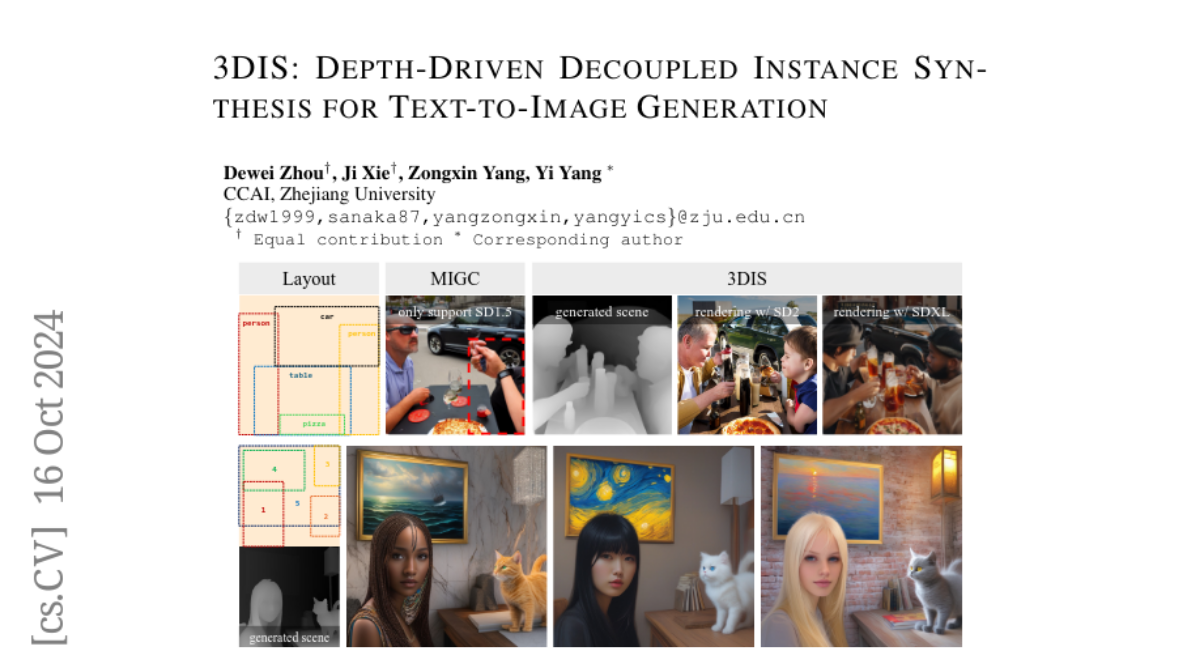

📄 3DIS Paper: https://arxiv.org/abs/2410.12669

📄 3DIS-FLUX Tech Report: https://arxiv.org/abs/2501.05131

🔥 Why 3DIS & 3DIS-FLUX?

Current SOTA multi-instance generation methods are typically adapter-based: they train additional control modules on top of a pre-trained model to control layout and instance attributes. As more powerful base models such as FLUX and SD3.5 emerge, these adapters have to be retrained for each new model, which demands extensive resources.

✨ Our Solution: 3DIS

We introduce a decoupled approach that only requires training a low-resolution Layout-to-Depth model to convert layouts into coarse-grained scene depth maps. By leveraging pre-trained models from the community and industry, such as ControlNet and SAM2, we enable training-free controllable image generation on high-resolution models such as SDXL and FLUX.
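
To make the two-stage data flow concrete, here is a toy sketch in Python. It is not the 3DIS code: `Instance`, `toy_layout_to_depth`, `upsample_nearest`, and `build_render_inputs` are hypothetical names, the "depth model" just paints each box with a constant value instead of running a trained Layout-to-Depth model, and the box masks stand in for whatever masks the real pipeline derives. The resolutions (64 and 1024) are assumptions chosen for illustration. It only shows what is handed from the low-resolution first stage to a training-free, high-resolution rendering stage.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class Instance:
    caption: str   # per-instance text description
    box: tuple     # (x0, y0, x1, y1), normalized to [0, 1]


def toy_layout_to_depth(instances, size=64):
    """Toy stand-in for stage 1 (NOT the trained Layout-to-Depth model).

    Paints each box with a constant depth value and returns the coarse
    depth map together with one boolean box mask per instance.
    """
    depth = np.zeros((size, size), dtype=np.float32)
    masks = []
    for i, inst in enumerate(instances):
        x0, y0, x1, y1 = (int(round(v * size)) for v in inst.box)
        mask = np.zeros((size, size), dtype=bool)
        mask[y0:y1, x0:x1] = True
        # Later instances overwrite earlier ones where boxes overlap.
        depth[mask] = (i + 1) / len(instances)
        masks.append(mask)
    return depth, masks


def upsample_nearest(arr, factor):
    """Nearest-neighbour upsampling of a 2D array by an integer factor."""
    return np.repeat(np.repeat(arr, factor, axis=0), factor, axis=1)


def build_render_inputs(instances, low_res=64, factor=16):
    """Bundle what a depth-conditioned renderer would consume in stage 2."""
    depth, masks = toy_layout_to_depth(instances, low_res)
    return {
        "depth": upsample_nearest(depth, factor),
        "instance_masks": [upsample_nearest(m, factor) for m in masks],
        "instance_prompts": [inst.caption for inst in instances],
    }


if __name__ == "__main__":
    layout = [
        Instance("a red apple", (0.0, 0.0, 0.5, 0.5)),
        Instance("a blue cup", (0.5, 0.5, 1.0, 1.0)),
    ]
    inputs = build_render_inputs(layout)
    print(inputs["depth"].shape, inputs["instance_prompts"])
```

In this sketch, only the first function would need training in a real system; the dictionary returned by `build_render_inputs` is the kind of interface that lets the rendering model be swapped (for example SDXL for FLUX) without retraining anything upstream.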

🌟 Benefits of Our Decoupled Multi-Instance Generation:

1. Enhanced Control: By constructing scenes using depth maps in the first stage, the model focuses on coarse-grained scene layout, improving control over instance placement.

2. Flexibility & Preservation: The second stage employs training-free rendering methods, allowing seamless integration with various models (e.g., fine-tuned weights, LoRA) while maintaining the generative capabilities of pre-trained models.

Join us in advancing Layout-to-Image Generation! Follow and star our repository to stay updated! ⭐
