Update README.md

README.md

CHANGED

|

@@ -11,42 +11,43 @@ pipeline_tag: visual-question-answering

|

|

| 11 |

---

|

| 12 |

|

| 13 |

|

| 14 |

-

[📃Paper] | [🌐Website](https://tiger-ai-lab.github.io/

|

| 15 |

|

| 16 |

|

| 17 |

-

,

|

| 22 |

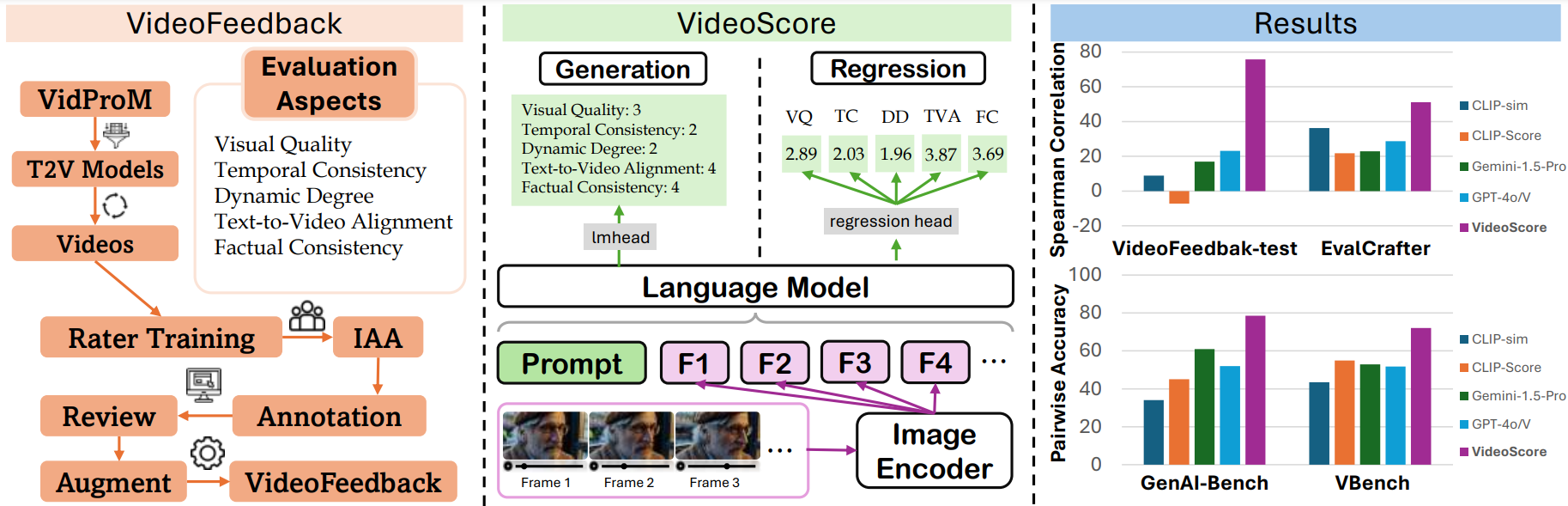

a large video evaluation dataset with multi-aspect human scores.

|

| 23 |

|

| 24 |

-

-

|

| 25 |

|

| 26 |

-

-

|

| 27 |

|

| 28 |

-

- **This is the regression version of

|

| 29 |

|

| 30 |

## Evaluation Results

|

| 31 |

|

| 32 |

-

We test our video evaluation model

|

| 33 |

For the first two benchmarks, we take the Spearman correlation between the model's output and human ratings,

|

| 34 |

averaged over all evaluation aspects, as the indicator.

|

| 35 |

For GenAI-Bench and VBench, which include human preference data among two or more videos,

|

| 36 |

we employ the model's output to predict preferences and use pairwise accuracy as the performance indicator.

|

| 37 |

|

| 38 |

-

|

| 39 |

-

for VideoFeedback-test set, while for other three benchmarks

|

| 40 |

-

|

| 41 |

-

|

|

|

|

| 42 |

|

| 43 |

The evaluation results are shown below:

|

| 44 |

|

| 45 |

|

| 46 |

| metric | Final Avg Score | VideoFeedback-test | EvalCrafter | GenAI-Bench | VBench |

|

| 47 |

|:-----------------:|:--------------:|:--------------:|:-----------:|:-----------:|:----------:|

|

| 48 |

-

|

|

| 49 |

-

|

|

| 50 |

| Gemini-1.5-Pro | <u>39.7</u> | 22.1 | 22.9 | 60.9 | 52.9 |

|

| 51 |

| Gemini-1.5-Flash | 39.4 | 20.8 | 17.3 | <u>67.1</u> | 52.3 |

|

| 52 |

| GPT-4o | 38.9 | <u>23.1</u> | 28.7 | 52.0 | 51.7 |

|

|

@@ -67,20 +68,20 @@ The evaluation results are shown below:

|

|

| 67 |

| CogVLM | - | - | - | - | - |

|

| 68 |

| OpenFlamingo | - | - | - | - | - | -->

|

| 69 |

|

| 70 |

-

The best in

|

| 71 |

<!-- "-" means the answer of MLLM is meaningless or in wrong format. -->

|

| 72 |

|

| 73 |

## Usage

|

| 74 |

### Installation

|

| 75 |

```

|

| 76 |

-

pip install git+https://github.com/TIGER-AI-Lab/

|

| 77 |

# or

|

| 78 |

# pip install mantis-vl

|

| 79 |

```

|

| 80 |

|

| 81 |

### Inference

|

| 82 |

```

|

| 83 |

-

cd

|

| 84 |

```

|

| 85 |

|

| 86 |

```python

|

|

@@ -133,7 +134,7 @@ For this video, the text prompt is "{text_prompt}",

|

|

| 133 |

all the frames of video are as follows:

|

| 134 |

"""

|

| 135 |

|

| 136 |

-

model_name="TIGER-Lab/

|

| 137 |

video_path="video1.mp4"

|

| 138 |

video_prompt="Near the Elephant Gate village, they approach the haunted house at night. Rajiv feels anxious, but Bhavesh encourages him. As they reach the house, a mysterious sound in the air adds to the suspense."

|

| 139 |

|

|

@@ -187,15 +188,15 @@ text-to-video alignment, factual consistency, respectively

|

|

| 187 |

```

|

| 188 |

|

| 189 |

### Training

|

| 190 |

-

see [

|

| 191 |

|

| 192 |

### Evaluation

|

| 193 |

-

see [

|

| 194 |

|

| 195 |

## Citation

|

| 196 |

```bibtex

|

| 197 |

-

@article{

|

| 198 |

-

title = {

|

| 199 |

author = {He, Xuan and Jiang, Dongfu and Zhang, Ge and Ku, Max and Soni, Achint and Siu, Sherman and Chen, Haonan and Chandra, Abhranil and Jiang, Ziyan and Arulraj, Aaran and Wang, Kai and Do, Quy Duc and Ni, Yuansheng and Lyu, Bohan and Narsupalli, Yaswanth and Fan, Rongqi and Lyu, Zhiheng and Lin, Yuchen and Chen, Wenhu},

|

| 200 |

journal = {ArXiv},

|

| 201 |

year = {2024},

|

|

|

|

| 11 |

---

|

| 12 |

|

| 13 |

|

| 14 |

+

[📃Paper](https://arxiv.org/abs/2406.15252) | [🌐Website](https://tiger-ai-lab.github.io/VideoScore/) | [💻Github](https://github.com/TIGER-AI-Lab/VideoScore) | [🛢️Datasets](https://huggingface.co/datasets/TIGER-Lab/VideoFeedback) | [🤗Model](https://huggingface.co/TIGER-Lab/VideoScore) | [🤗Demo](https://huggingface.co/spaces/TIGER-Lab/VideoScore)

|

| 15 |

|

| 16 |

|

| 17 |

+

|

| 18 |

|

| 19 |

## Introduction

|

| 20 |

+

- VideoScore is a video quality evaluation model, built on [Mantis-8B-Idefics2](https://huggingface.co/TIGER-Lab/Mantis-8B-Idefics2) as the base model

|

| 21 |

and trained on [VideoFeedback](https://huggingface.co/datasets/TIGER-Lab/VideoFeedback),

|

| 22 |

a large video evaluation dataset with multi-aspect human scores.

|

| 23 |

|

| 24 |

+

- VideoScore reaches a Spearman correlation of 75+ with human ratings on VideoFeedback-test, surpassing all MLLM-prompting methods and feature-based metrics.

|

| 25 |

|

| 26 |

+

- VideoScore also beats the best baselines on the other three benchmarks (EvalCrafter, GenAI-Bench and VBench), showing high alignment with human evaluations.

|

| 27 |

|

| 28 |

+

- **This is the regression version of VideoScore**

|

| 29 |

|

| 30 |

## Evaluation Results

|

| 31 |

|

| 32 |

+

We test our video evaluation model VideoScore on VideoFeedback-test, EvalCrafter, GenAI-Bench and VBench.

|

| 33 |

For the first two benchmarks, we take the Spearman correlation between the model's output and human ratings,

|

| 34 |

averaged over all evaluation aspects, as the indicator.

|

| 35 |

For GenAI-Bench and VBench, which include human preference data among two or more videos,

|

| 36 |

we employ the model's output to predict preferences and use pairwise accuracy as the performance indicator.
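
The two indicators described above can be sketched in plain Python. This is a minimal illustration, not the benchmark code from the repository: the function names, the dictionary layout (aspect name mapped to per-video scores), the `(score_a, score_b, human_pref)` tuple format, and the rule that a tie counts as preferring `b` are all assumptions made for the example.

```python
from statistics import mean


def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def spearman(xs, ys):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


def mean_spearman(model_scores, human_scores):
    """Average the per-aspect Spearman correlation over all aspects.

    Both arguments map an aspect name to a list of per-video scores
    (hypothetical layout, chosen for this sketch).
    """
    return mean(spearman(model_scores[a], human_scores[a]) for a in model_scores)


def pairwise_accuracy(pairs):
    """Fraction of pairs where the higher model score matches the human choice.

    Each pair is (score_a, score_b, human_pref) with human_pref in {"a", "b"}.
    Ties are counted as preferring "b" (an assumption of this sketch).
    """
    correct = sum(("a" if sa > sb else "b") == pref for sa, sb, pref in pairs)
    return correct / len(pairs)
```

For preference data over more than two videos, one option is to expand each group into all of its video pairs before calling `pairwise_accuracy`; whether the benchmarks do exactly this is not stated here.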

|

| 37 |

|

| 38 |

+

- We use [VideoScore](https://huggingface.co/TIGER-Lab/VideoScore) trained on the entire VideoFeedback dataset

|

| 39 |

+

for the VideoFeedback-test set. For the other three benchmarks:

|

| 40 |

+

|

| 41 |

+

- We use [VideoScore-anno-only](https://huggingface.co/TIGER-Lab/VideoScore-anno-only) trained on the VideoFeedback dataset

|

| 42 |

+

excluding the real videos.

|

| 43 |

|

| 44 |

The evaluation results are shown below:

|

| 45 |

|

| 46 |

|

| 47 |

| metric | Final Avg Score | VideoFeedback-test | EvalCrafter | GenAI-Bench | VBench |

|

| 48 |

|:-----------------:|:--------------:|:--------------:|:-----------:|:-----------:|:----------:|

|

| 49 |

+

| VideoScore (reg) | **69.6** | 75.7 | **51.1** | **78.5** | **73.0** |

|

| 50 |

+

| VideoScore (gen) | 55.6 | **77.1** | 27.6 | 59.0 | 58.7 |

|

| 51 |

| Gemini-1.5-Pro | <u>39.7</u> | 22.1 | 22.9 | 60.9 | 52.9 |

|

| 52 |

| Gemini-1.5-Flash | 39.4 | 20.8 | 17.3 | <u>67.1</u> | 52.3 |

|

| 53 |

| GPT-4o | 38.9 | <u>23.1</u> | 28.7 | 52.0 | 51.7 |

|

|

|

|

| 68 |

| CogVLM | - | - | - | - | - |

|

| 69 |

| OpenFlamingo | - | - | - | - | - | -->

|

| 70 |

|

| 71 |

+

The best result in the VideoScore series is in bold, and the best among the baselines is underlined.
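
The README does not say how the "Final Avg Score" column is computed. The rows visible in the table are consistent with an unweighted mean of the four benchmark columns, rounded to one decimal. The check below is only a sketch under that assumption, using the numbers copied from the table; the variable names are illustrative.

```python
# Benchmark columns per row: VideoFeedback-test, EvalCrafter, GenAI-Bench, VBench.
benchmark_scores = {
    "VideoScore (reg)": [75.7, 51.1, 78.5, 73.0],
    "VideoScore (gen)": [77.1, 27.6, 59.0, 58.7],
    "Gemini-1.5-Pro": [22.1, 22.9, 60.9, 52.9],
    "Gemini-1.5-Flash": [20.8, 17.3, 67.1, 52.3],
    "GPT-4o": [23.1, 28.7, 52.0, 51.7],
}

# "Final Avg Score" values as reported in the table.
reported_avg = {
    "VideoScore (reg)": 69.6,
    "VideoScore (gen)": 55.6,
    "Gemini-1.5-Pro": 39.7,
    "Gemini-1.5-Flash": 39.4,
    "GPT-4o": 38.9,
}

# Assumed rule: unweighted mean of the four columns, rounded to one decimal.
computed_avg = {
    name: round(sum(scores) / len(scores), 1)
    for name, scores in benchmark_scores.items()
}

for name, value in computed_avg.items():
    print(f"{name}: computed {value}, reported {reported_avg[name]}")
```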

|

| 72 |

<!-- "-" means the answer of MLLM is meaningless or in wrong format. -->

|

| 73 |

|

| 74 |

## Usage

|

| 75 |

### Installation

|

| 76 |

```

|

| 77 |

+

pip install git+https://github.com/TIGER-AI-Lab/VideoScore.git

|

| 78 |

# or

|

| 79 |

# pip install mantis-vl

|

| 80 |

```

|

| 81 |

|

| 82 |

### Inference

|

| 83 |

```

|

| 84 |

+

cd VideoScore/examples

|

| 85 |

```

|

| 86 |

|

| 87 |

```python

|

|

|

|

| 134 |

all the frames of video are as follows:

|

| 135 |

"""

|

| 136 |

|

| 137 |

+

model_name="TIGER-Lab/VideoScore"

|

| 138 |

video_path="video1.mp4"

|

| 139 |

video_prompt="Near the Elephant Gate village, they approach the haunted house at night. Rajiv feels anxious, but Bhavesh encourages him. As they reach the house, a mysterious sound in the air adds to the suspense."

|

| 140 |

|

|

|

|

| 188 |

```

|

| 189 |

|

| 190 |

### Training

|

| 191 |

+

See [VideoScore/training](https://github.com/TIGER-AI-Lab/VideoScore/tree/main/training) for details.

|

| 192 |

|

| 193 |

### Evaluation

|

| 194 |

+

See [VideoScore/benchmark](https://github.com/TIGER-AI-Lab/VideoScore/tree/main/benchmark) for details.

|

| 195 |

|

| 196 |

## Citation

|

| 197 |

```bibtex

|

| 198 |

+

@article{he2024videoscore,

|

| 199 |

+

title = {VideoScore: Building Automatic Metrics to Simulate Fine-grained Human Feedback for Video Generation},

|

| 200 |

author = {He, Xuan and Jiang, Dongfu and Zhang, Ge and Ku, Max and Soni, Achint and Siu, Sherman and Chen, Haonan and Chandra, Abhranil and Jiang, Ziyan and Arulraj, Aaran and Wang, Kai and Do, Quy Duc and Ni, Yuansheng and Lyu, Bohan and Narsupalli, Yaswanth and Fan, Rongqi and Lyu, Zhiheng and Lin, Yuchen and Chen, Wenhu},

|

| 201 |

journal = {ArXiv},

|

| 202 |

year = {2024},

|